Your Dose of Reg.exe, Week 6

Discussions covered AI bubble concerns, semiconductor export restrictions, liquid cooling for data centers, and new model releases including GPT-5 impressions and Mistral Medium 3.1.

Reg.exe is a global closed community of 260+ engineers, founders, and researchers interested in AI innovation, from San Francisco to Tokyo. Each week, we share the highlights of our discussions in a newsletter. If you’d like to join, write to join@welovesota.com

Knowledge

🫧 Sam Altman admits AI is in a bubble - 📰 The Verge reports that OpenAI's CEO acknowledged investors are "overexcited" about AI, raising questions about potential impacts on future investments. (🙏 Gabriel Olympie @ Reg.exe)

⚙️ AI Engineer talks repository - 🎞️ AI Engineer YouTube channel highlighted as containing valuable workshops, events, and training content for AI engineers. (🙏 Robert Hommes @ Moyai.ai)

🎙️ Oxford's Michael Wooldridge on AI reality - An interesting 1h30 🎞️ interview discussing where AI is actually headed versus the hype, providing a grounded perspective on the technology's future. (🙏 Kevin Kuipers @ Galion.exe)

💰 Perplexity's Chrome acquisition bid - Perplexity has reportedly made an unexpected bid to acquire the Chrome browser, a move that would likely require significant fundraising. (🙏 Gabriel Olympie @ Reg.exe)

🧠 ARC-AGI-3 Preview - 🎞️ New interactive reasoning benchmarks showcasing algorithmic learning approaches for improved reasoning capabilities. (🙏 Louis Manhes @ Genario)

🤔 Chain-of-thought reasoning questioned - 🔬 Research paper explores whether LLM reasoning represents pattern replication from training data or genuine logical inference, challenging fundamental assumptions about how large language models process information. (🙏 Victoria Latynina @ Sanofi)

Language Models

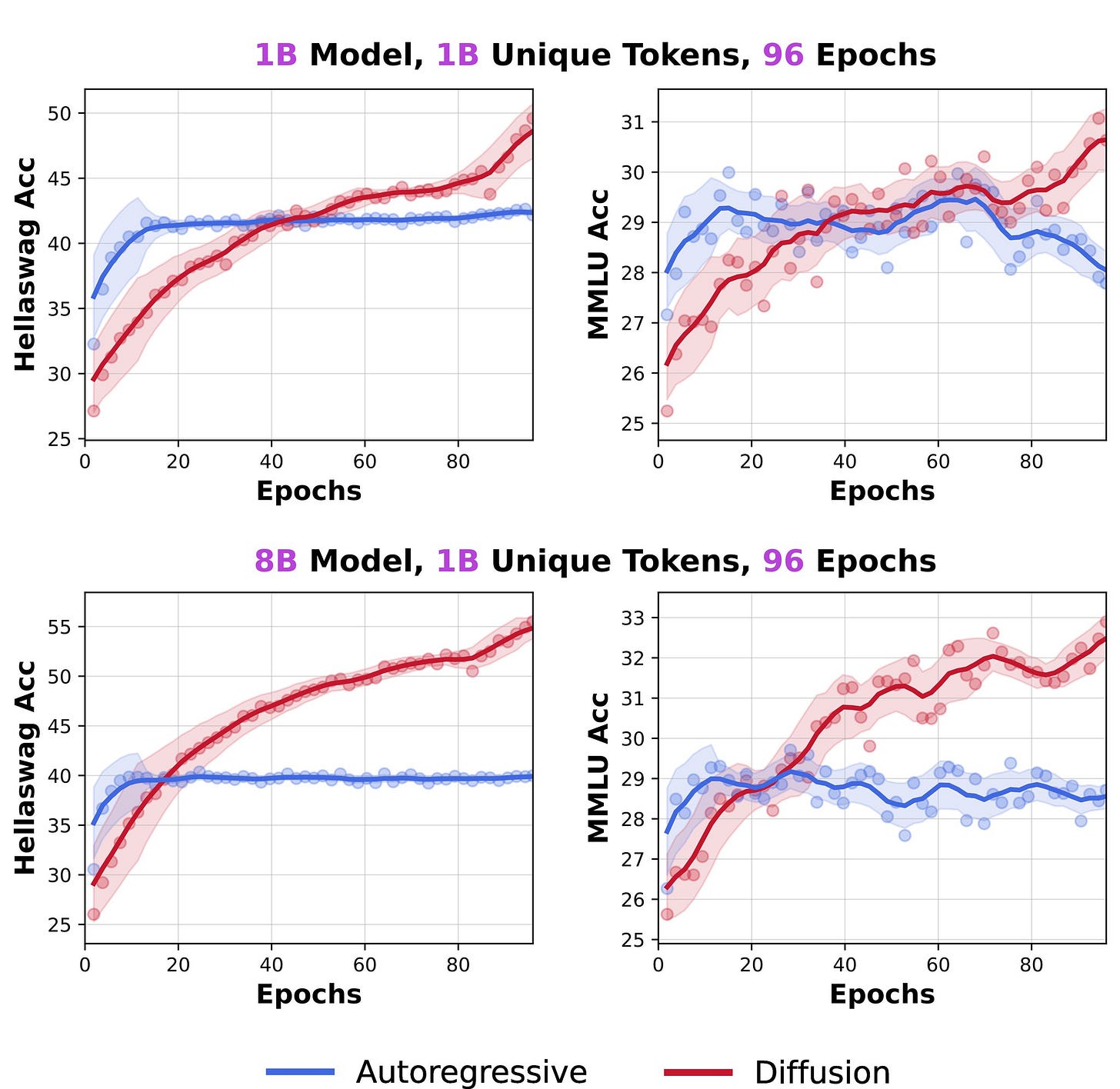

🤯 Token crisis breakthrough with diffusion models - 🔬 Jinjie Ni shared research demonstrating that diffusion language models (DLMs) outperform autoregressive models when trained on limited tokens, achieving >3× data efficiency. A 1B-parameter DLM trained on just 1B tokens showed promising results. (🙏 Fabien Niel)

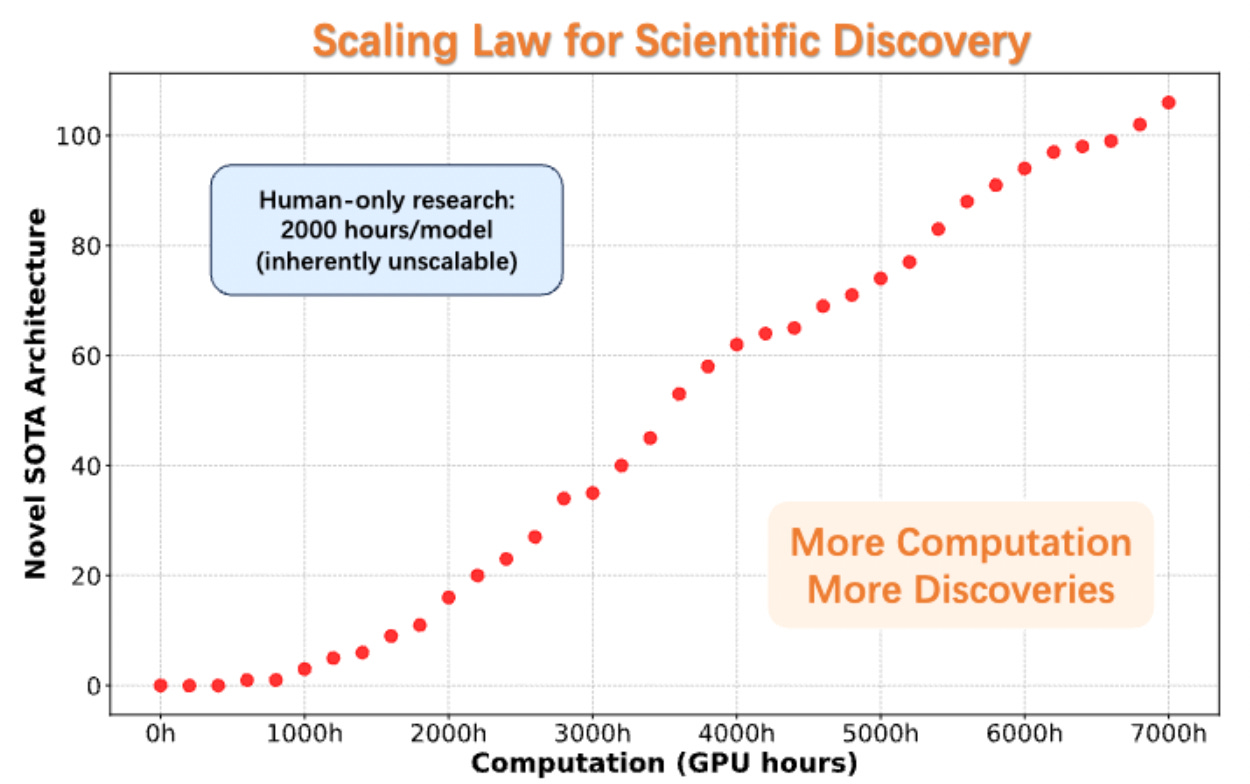

💡 AlphaGo moment for model architecture discovery - 🔬 An AlphaXiv paper presented an AI system that was fed hundreds of papers about neural network architectures and generated novel, unexplored ideas that converge faster. The community debated whether this represents genuine innovation or "p-hacking", with concerns about the capabilities of the underlying LLM. (🙏 Julien Seveno, Benjamin Trom @ Mistral AI, Raoul Ritter)

🔥 GPT-5 reception - Community feedback keeps coming in and indicates that GPT-5 performs worse than GPT-4o on many tasks and slightly worse than o3-pro. Users describe it as "underwhelming" and report long thinking times for simple tasks in coding applications. (🙏 Fabien Niel, Stan Girard, Louis Choquel, Gabriel Olympie, Pierre Chapuis, Benjamin Trom, Kevin Kuipers)

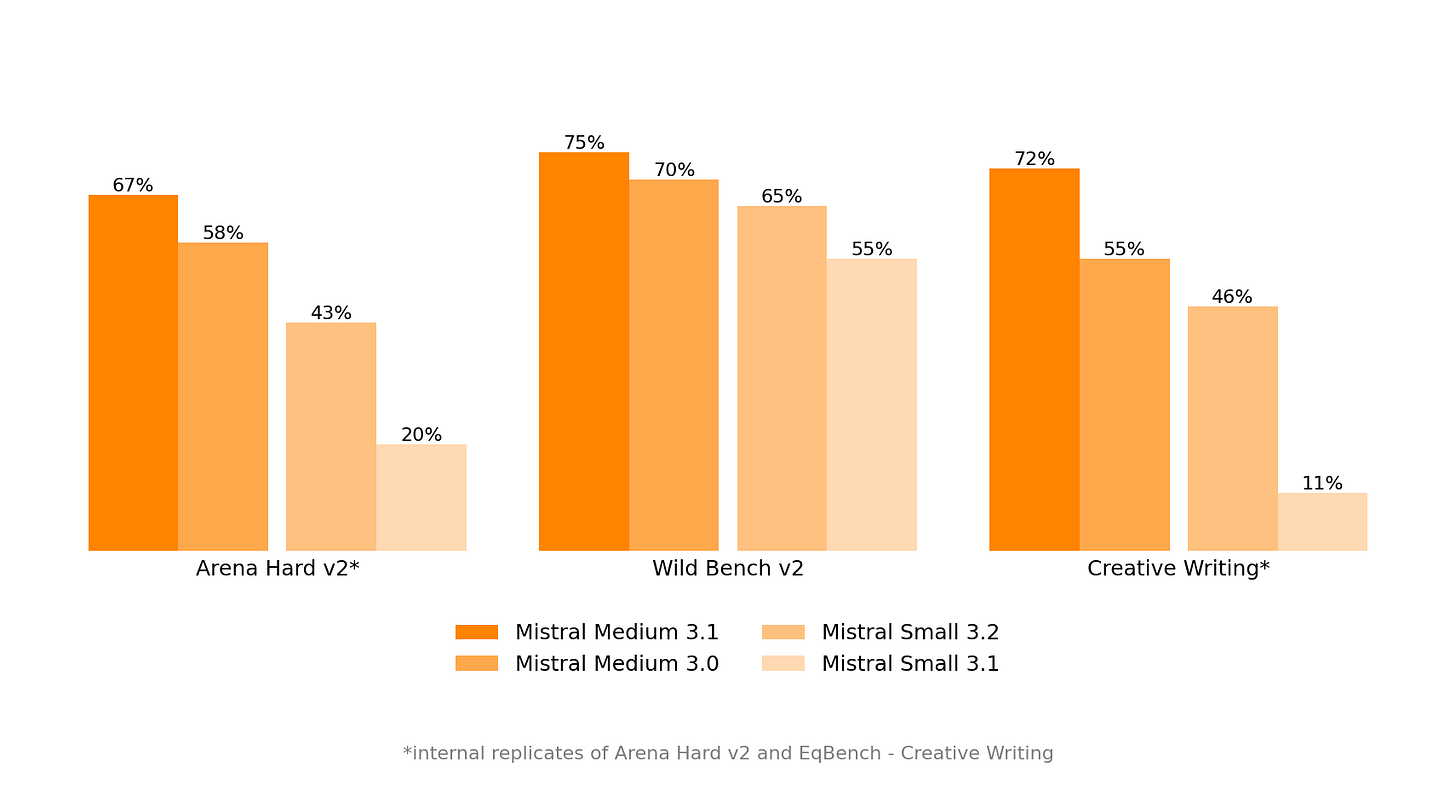

🔥 Mistral Medium 3.1 released - 📰 The new version offers improved coding skills, better tone, and smarter web searches, though instruction following has slightly degraded according to initial testing. (🙏 Benjamin Trom @ Mistral AI)

🫡 Gemma 3 270M model - Google released a tiny 270M parameter model with exceptional instruction following for its size. (🙏 Gabriel Olympie)

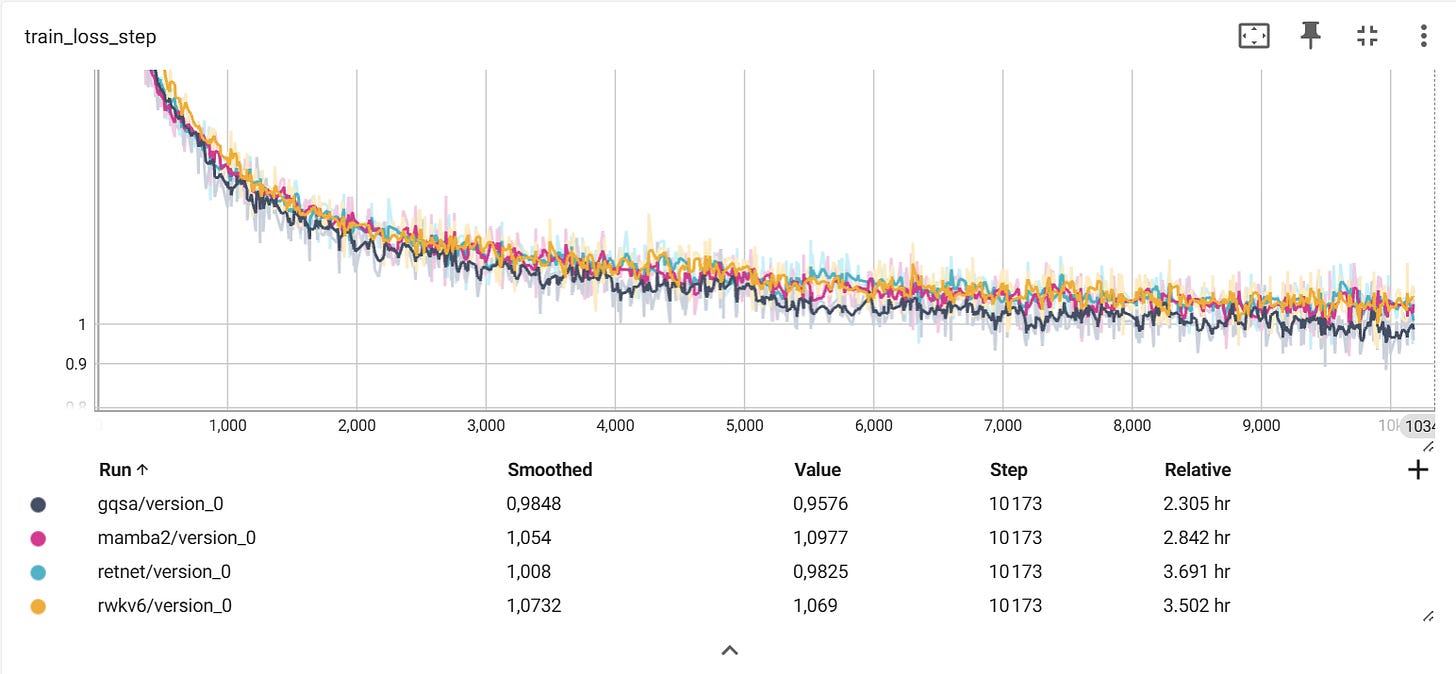

📈 ArchiFactory repository - Gabriel Olympie shared a training framework for small LLMs enabling comparison of various architectures (GQA, Mamba2, RWKV6, RetNet) with identical training setups on a single 3090 GPU.

Autonomous Agents

🛠️ MCP Install Instructions Generator - Alpic has released an 🛠️ automatic instruction generator for MCP servers supporting Claude, Cursor, Cline, VSCode, and other popular clients, solving the challenge of constantly changing installation procedures. (🙏 Nikolay Rodionov @ Alpic)

🔎 Linkup agents showcase - Jarek Ecke published 📰 a real-world business scenario for qualitative and automated agentic web search using Linkup. (🙏 Boris Toledano @ Linkup)

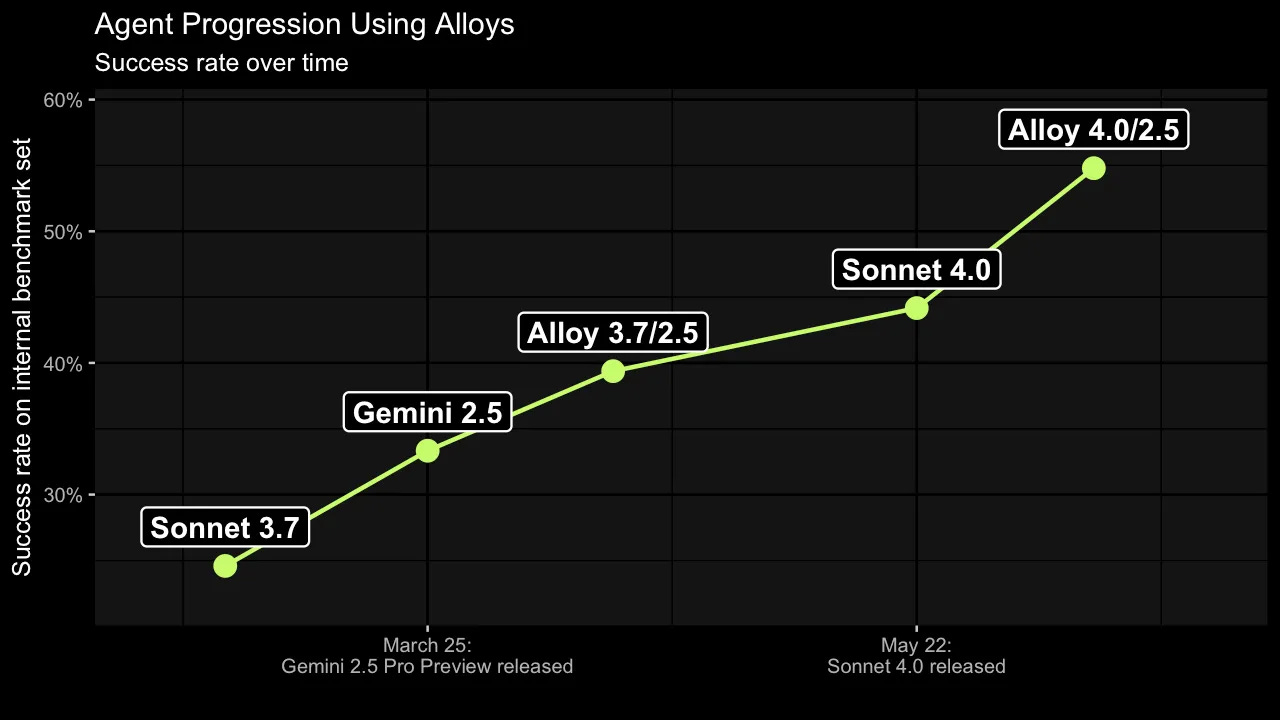

💡 Alloy agents innovation - 📰 XBOW demonstrated a novel approach using API-based foundational models to build unique agentic AI systems, showcasing how combining existing models can create powerful new capabilities. (🙏 Robert Hommes @ Moyai.ai)

Programming

💸 AI coding economics crisis - 📰 TechCrunch reports that AI coding tools have "very negative" gross margins, losing money on every user due to high compute costs. Kilo Code’s study shows that future AI bills could reach $100k per year per developer based on current token growth trajectories. (🙏 Willy Braun @ Galion.exe, Arnaud Porterie @ Vibe.co)
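To get a feel for how a growth trajectory can lead to a six-figure bill, here is a minimal Python sketch of compound growth. The starting spend and growth factor are purely hypothetical placeholders, not figures from the Kilo Code study or TechCrunch; only the $100k target comes from the item above.

```python
import math


def projected_annual_cost(current_annual_cost: float,
                          yearly_growth: float,
                          years: int) -> float:
    """Compound today's annual spend by a constant yearly growth factor."""
    return current_annual_cost * (yearly_growth ** years)


def years_to_reach(current_annual_cost: float,
                   yearly_growth: float,
                   target: float) -> float:
    """Years until spend reaches `target` under constant compound growth."""
    return math.log(target / current_annual_cost) / math.log(yearly_growth)


if __name__ == "__main__":
    # Hypothetical inputs: $1,000 per developer per year today,
    # token usage (and therefore spend) growing 10x per year.
    start = 1_000.0
    growth = 10.0
    print(projected_annual_cost(start, growth, 2))
    print(years_to_reach(start, growth, 100_000.0))
```

Swap in your own team's current spend and observed growth rate to see how sensitive the projection is to the growth assumption.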

☁️ Async vs sync coding agents debate - Google's Jules async agent 📰 works well for refactoring and bug fixing where requirements are clear, but synchronous tools remain better for interactive feature development. Others note that Devin is now far more efficient than Codex. (🙏 Anirudh Kulkarni @ Google Labs, Arnaud Porterie, Stan Girard, Robert Hommes)

🙈 Code quality concerns - Growing reports of AI-generated codebases becoming "spaghetti nightmares" that appear functional initially but reveal deep structural issues upon closer inspection. (🙏 Pierre Chapuis, Kemal Toprak Uçar @ Numberly)

🧰 Building Cursor - Cursor's load has grown 100x in just a year; it sees 1M+ QPS on its data layer and serves billions of code completions daily. A deep dive into how it’s built, with cofounder Sualeh Asif. (🙏 Youssef Tharwat)

🛠️ A few new agents for coders - A new HTTP API project provides a unified interface for Claude Code, Goose, Aider, Gemini, and other coding assistants. Claudia aims to build a GUI on top of Claude Code.

Infrastructure

☘️ UK government's controversial water-saving advice - 📰 Tom's Hardware covered how UK officials suggested citizens delete old emails and photos to reduce data center water consumption during a national drought, sparking debate about the actual environmental impact of data storage versus processing. (🙏 Margaux Wehr, Kevin Kuipers @ Galion.exe, Pierre Chapuis @ Finegrain, Christophe Lesur @ Cloud-Temple)

The discussion revealed that 45% of data center cooling relies on evaporative air cooling, which consumes massive volumes of water daily, with only 15-20% using closed-loop systems and 22% using immersion cooling [sources: Lenovo, EESI].

Indeed, dormant data, even in a hot storage environment, still consumes some energy and water, but very little. Most data center power consumption is driven by CPUs and GPUs (and, of course, cooling). The experts cited in the article may be correct: processing the data (for example, by deleting it) could, in the long run, be worse than simply letting it lie dormant.

Liquid cooling is a growing trend, particularly for going beyond 20 kW of power per rack. The main challenge, if we forgo front-door cooling systems, is adapting the infrastructure to the specific hardware in use. This requires direct collaboration between the vendor (Cisco, HP, Dell, etc.) and the data center.

Readings From The Community

Build: An Unorthodox Guide to Making Things Worth Making, Tony Fadell (2022)

Rationality: From AI to Zombies, Eliezer Yudkowsky (2015)

Obviously Awesome: How to Nail Product Positioning so Customers Get It, Buy It, Love It, April Dunford (2019)

Super Founders: What Data Reveals About Billion-Dollar Startups, Ali Tamaseb (2021)

The River of Consciousness, Oliver Sacks (2017)

The Developer Facing Startup: Alchemist Accelerator’s go-to-market playbook for early-stage developer-facing startups, Adam Frankl (2024)

Amp It Up: Leading for Hypergrowth by Raising Expectations, Increasing Urgency, and Elevating Intensity, Frank Slootman (2022)

(🙏 Louis Choquel, Louis Manhes, Fabien Niel, Robert Hommes, Stephane Jourdan @ Anyshift)