Your Dose of Reg.exe, Week 29

Coding agents transform development as developers delegate more. Training shifts from web scraping to synthetic environments. Models learn faster from detailed feedback than binary rewards.

Reg.exe is a global closed community of 260+ engineers, founders, and researchers interested in AI innovation, from San Francisco to Tokyo. Each week, we share the highlights of our discussions in a newsletter. If you’d like to join, write to join@welovesota.com.

👉 Article originally posted on WeLoveSota.com

Events

🇫🇷 NVIDIA + Hugging Face + Mistral Developer Meetup (Feb 10th) - NVIDIA, Mistral, and Hugging Face will host an evening meetup in Paris for experienced practitioners building and deploying open models. (🙏 Ziv Ilan @ NVIDIA)

🇫🇷 GAIN Meetup #13: Securing AI Agent Data Flows - Workshop showcasing Guepard MCP in action, demonstrating how to create workflows for coding agents with instant database branching, version control, and time travel capabilities to prevent agents from corrupting databases. (🙏 Koutheir Cherni @ Guepard)

Audio

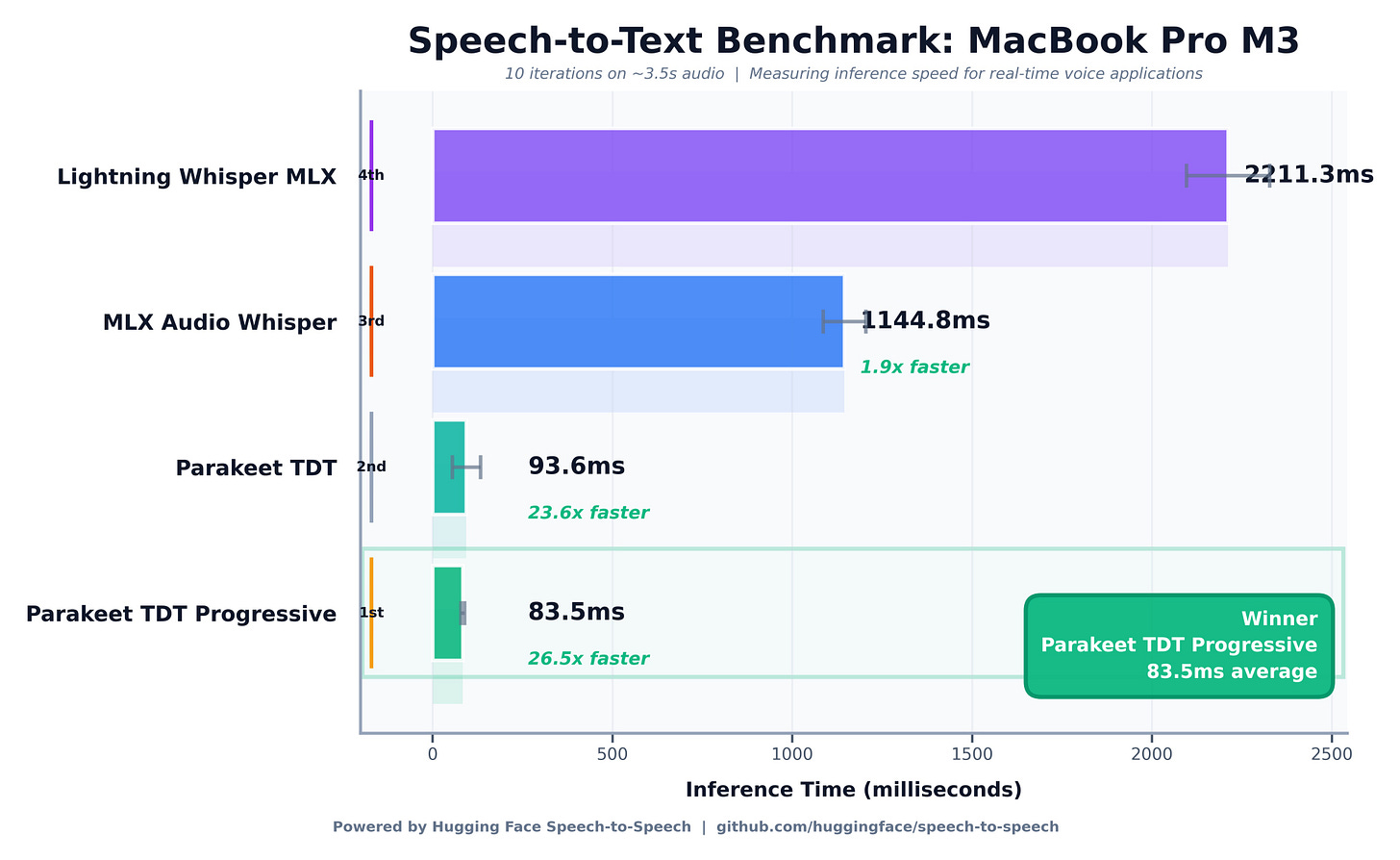

⚡ NVIDIA Parakeet Runs 26x Faster Than Lightning Whisper - NVIDIA’s Parakeet transcribed 6 seconds of audio in 83 ms on an M3 MacBook Pro, 26x faster than Lightning Whisper, suggesting that real-time voice AI is now practical on local hardware.
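As a quick sanity check on these figures, the real-time factor can be worked out directly. This sketch uses only the two numbers quoted (6 seconds of audio, 83 ms of processing); the helper name is illustrative, and the implied Lightning Whisper time assumes the 26x figure compares wall-clock time on the same clip, which the source does not state.

```python
def real_time_factor(audio_seconds: float, processing_seconds: float) -> float:
    """Seconds of audio processed per second of compute."""
    return audio_seconds / processing_seconds


audio_s = 6.0       # clip length quoted in the item
parakeet_s = 0.083  # 83 ms processing time quoted in the item

rtf = real_time_factor(audio_s, parakeet_s)
print(f"Parakeet real-time factor: {rtf:.1f}x")  # about 72.3x

# Assumption: "26x faster" means 26x less wall-clock time on the same clip.
implied_whisper_s = parakeet_s * 26
print(f"Implied Lightning Whisper time: {implied_whisper_s:.2f} s")
```

Under that assumption, the comparison tool would still be faster than real time on this clip, just by a much smaller margin.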

Autonomous Agents

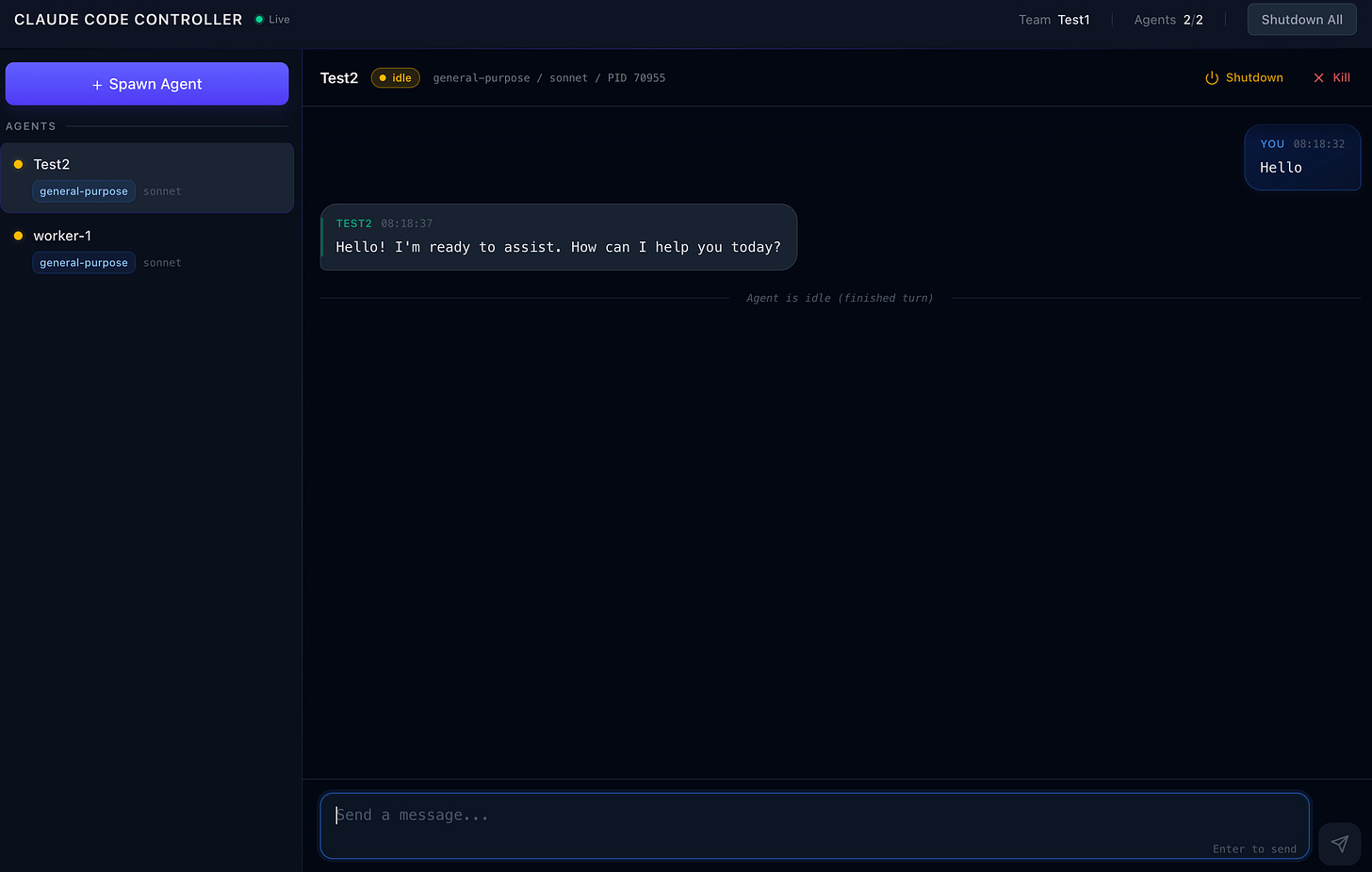

🛠️ Claude Code Controller Released - Stan Girard reverse-engineered Claude Code and released a programmatic TypeScript API to control Claude Code agents via the internal teams/inbox/tasks filesystem protocol. (🙏 Stan Girard @ Quivr)

Enables programmatic control of Claude Code agents through a TypeScript API.

Works by interfacing with the internal filesystem protocol.

Opens new possibilities for automating agent workflows and task management.

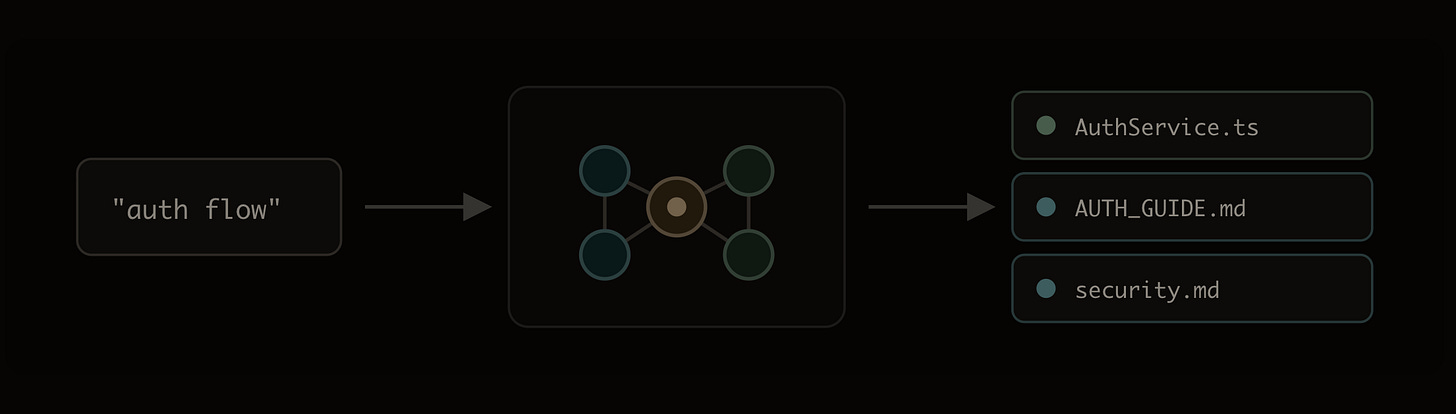

📰 Indexing Markdown Alongside Code for AI Agents - Noodlbox shared their approach to indexing markdown documentation and retrieving it at query time using hybrid search, addressing the challenge that stuffing everything into system prompts doesn’t scale for agent applications. (🙏 Youssef Tharwat @ Noodlbox)

Uses hybrid search to retrieve markdown documentation at query time rather than prompt-stuffing.

Treats code as cheap swappable artifacts while specifications become the valuable asset.

Implements doc sync to keep specifications as living documents that evolve with implementation.
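To make the idea of hybrid retrieval concrete, here is a minimal sketch that fuses a keyword ranking and a similarity ranking with reciprocal rank fusion. It is not Noodlbox's implementation, which the item does not describe: the character-trigram "embedding" is a toy stand-in for a real embedding model, and the file names, documents, and function names are all hypothetical.

```python
import math
import re
from collections import Counter


def tokenize(text: str) -> list[str]:
    return re.findall(r"[a-z0-9]+", text.lower())


def keyword_score(query: str, doc: str) -> float:
    """Lexical signal: how often the query's terms occur in the document."""
    counts = Counter(tokenize(doc))
    return float(sum(counts[term] for term in set(tokenize(query))))


def trigram_vector(text: str) -> Counter:
    """Toy stand-in for an embedding: character trigram counts."""
    s = " ".join(tokenize(text))
    return Counter(s[i:i + 3] for i in range(len(s) - 2))


def cosine(a: Counter, b: Counter) -> float:
    dot = sum(a[key] * b[key] for key in a)
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(
        sum(v * v for v in b.values())
    )
    return dot / norm if norm else 0.0


def rank(scores: dict[str, float]) -> list[str]:
    """Document names ordered from best to worst score."""
    return sorted(scores, key=lambda name: scores[name], reverse=True)


def hybrid_search(query: str, docs: dict[str, str], k: int = 60, top_n: int = 2) -> list[str]:
    """Fuse lexical and similarity rankings with reciprocal rank fusion."""
    lexical = rank({name: keyword_score(query, body) for name, body in docs.items()})
    query_vec = trigram_vector(query)
    similar = rank({name: cosine(query_vec, trigram_vector(body)) for name, body in docs.items()})
    fused: Counter = Counter()
    for ranking in (lexical, similar):
        for position, name in enumerate(ranking, start=1):
            fused[name] += 1.0 / (k + position)
    return [name for name, _ in fused.most_common(top_n)]


# Hypothetical markdown docs indexed alongside a codebase.
docs = {
    "auth.md": "Authentication: tokens are refreshed by the session service every hour.",
    "billing.md": "Billing invoices are generated monthly and emailed to account owners.",
    "deploy.md": "Deployment uses blue-green releases behind the load balancer.",
}
results = hybrid_search("how are auth tokens refreshed", docs)
print(results[0])
```

Only the top few fused results would be passed to the agent at query time, which is what keeps the system prompt small as the documentation grows.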

🔥 Community take: Members had different takes on documentation strategies. Some prefer working software over comprehensive documentation, arguing that if developers can navigate a codebase, agents can too. Others counter that while code has become a cheap swappable artifact, specifications remain the valuable asset. Both sides agree static markdown files get outdated quickly, but differ on solutions: one camp advocates for minimal documentation, while the other pushes for linked, living specs that sync with implementation rather than rebuilding context from scratch on every task.

🦞 Peter Steinberger on OpenClaw: “I Ship Code I Don’t Read” - The Pragmatic Engineer featured Peter Steinberger discussing how he builds and ships like a full team by centering his development workflow around AI agents. (🙏 Margaux Wehr, Quentin Dubois, Kevin Kuipers)

🔥 Community take: Members are experimenting with OpenClaw across a range of use cases, but security remains the dominant concern. Some are limiting usage to low-sensitivity personal tasks, while others are exploring more serious applications. The main risk is that non-trivial workflows almost always require sharing credentials, which expands the attack surface. As always, there is a fundamental tradeoff between security and capability. Granting an agent broad access to the host machine theoretically enables extremely powerful workflows, but it is inherently unsafe. Each additional safeguard, such as sandboxing or running inside Docker, meaningfully reduces risk, but also restricts what the agent can see, control, or automate.

Biotech, Health, and Chemistry

🔬 Ginkgo Bioworks Autonomous Lab Achieves 40% Improvement - Ginkgo Bioworks announced their autonomous laboratory driven by OpenAI’s GPT-5 achieved a 40% improvement over state-of-the-art scientific benchmarks, demonstrating AI systems that autonomously design experiments and protocols. (🙏 Sophie Monnier @ InstaDeep)

🛠️ LabBench2 Released for Biology Task Evaluation - Future House released LabBench2, a comprehensive benchmark comprising nearly 1,900 tasks for evaluating AI agents on biology-specific tasks, providing a systematic way to assess agent performance in biological research contexts. (🙏 Sophie Monnier)

Image, Video & 3D

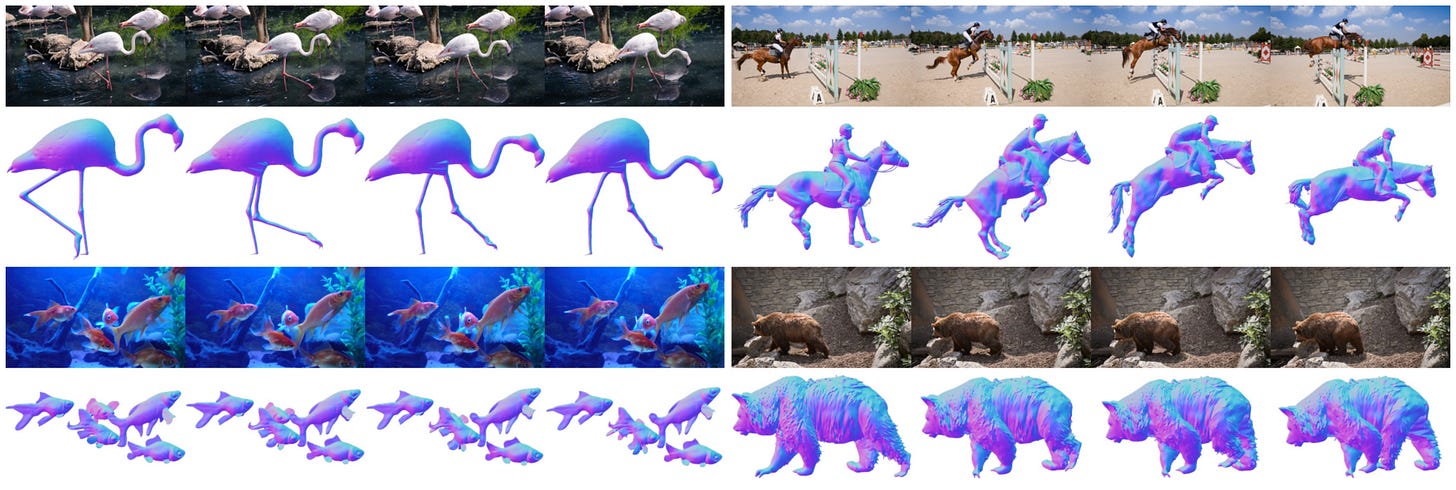

🔬 ActionMesh Generates Animated 3D Meshes from Video - Facebook AI Research introduced ActionMesh, a generative model that predicts production-ready animated 3D meshes from video in a feed-forward manner using temporal 3D diffusion, with a demo available on Hugging Face. (🙏 Kevin Kuipers)

Eliminates traditional optimization loops that make 3D reconstruction slow and resource-intensive.

Outputs include rigging and animation data, not just static geometry.

Enables rapid prototyping workflows for game dev, VFX, and virtual production teams.

🪞 Project Genie Mirror Tests Produce Hilarious Results - Testing mirrors in Project Genie revealed they don’t work correctly yet, producing amusing consistency failures as reflections diverged from expected physics, highlighting ongoing challenges in 3D world generation.

💬 Fashn VTON community take - Following last week’s release, Louis Choquel (Pipelex) said: “I tried the online service, it’s incredibly efficient. I was even able to run the open-source model on my Mac M3. It took a while, but it worked. Really impressive.”

Cyber

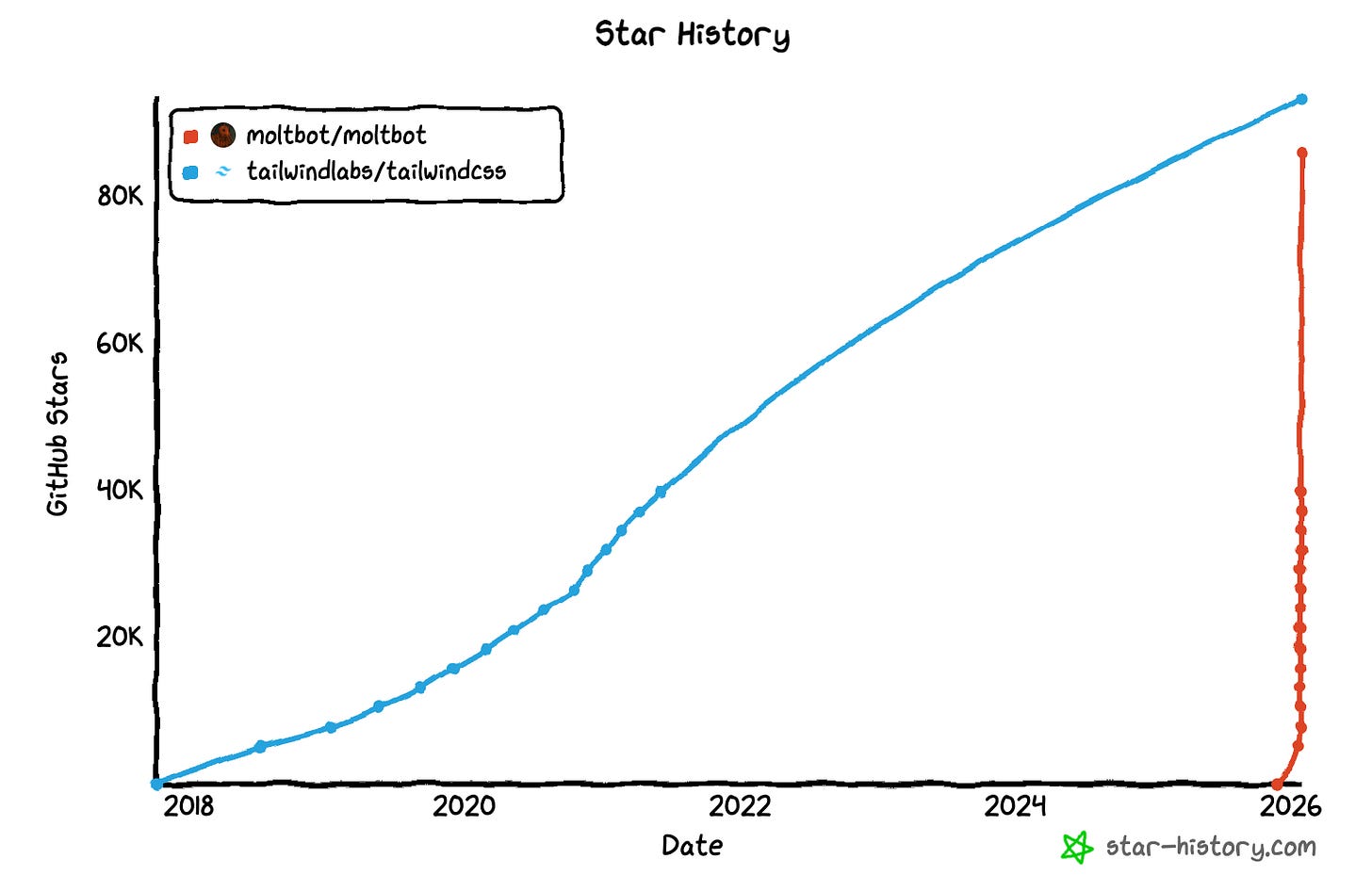

🚨 Moltbook Database Exposed API Keys Publicly - Security researcher Jamieson O’Reilly discovered Moltbook was exposing their entire database publicly with no protection, including secret API keys that would allow anyone to post on behalf of any agents, affecting high-profile users like Andrej Karpathy with 1.9 million followers. (🙏 Kevin Kuipers)

Language Models

📰 Synthetic Pretraining and Planning for Greatness - Pleias published insights from Pierre-Carl Langlais about the need to shift from passively scraping organic web data to actively designing large-scale synthetic training environments that shape model capabilities from the start, representing a fundamental change in pretraining philosophy.

Advocates moving from passive web scraping to active synthetic environment design.

Synthetic training environments can shape model capabilities more deliberately from pretraining.

Represents strategic shift in how reasoning models are developed for agentic AI applications.

MLOps

🛠️ Trackio-Tool for Managing Training Runs - Following the shutdown of Neptune and Trackio services, Finegrain released a tool to work with Trackio data files, particularly useful for running trainings on different machines (e.g., serverless) and comparing or merging runs in a single interface. (🙏 Pierre Chapuis @ Finegrain)

Operates entirely on local Trackio data files without requiring cloud service access.

Enables unified analysis of experiments split across cloud providers or hardware configurations.

Prevents vendor lock-in by maintaining access to training metrics after service discontinuation.

Programming

🔥 OpenAI Introduced Codex App - OpenAI launched the Codex app as their answer to Cursor, generating significant attention from developers. Early access users reported becoming addicted to the tool, though community members remained cautious about promotional content following GPT-5’s release. (🙏 Anselme Trochu @ UN)

🔥 Community take w/ Anselme: “I usually like Theo and I used to believe he was not ‘bribable’ but since the release of GPT 5, I’m more careful.”

📊 Claude Opus 4.5 vs GPT 5.3 Codex Benchmarking - Discussion emerged around comparing Claude Opus 4.5 and GPT 5.3 Codex. (🙏 Pierre Chapuis, Kevin Kuipers)

🔥 Community take w/ Pierre Chapuis: He argues that current benchmarks are largely misleading, especially SWE-style evaluations with estimated scores and missing pass@N, and that providers cherry-pick benchmarks where they perform well, which is why he increasingly relies on the judgment of competent practitioners rather than metrics. Also, “it’s interesting that, in (his) view, both companies have now understood the trade-off between latency and token efficiency versus capability and reasoning depth. OpenAI keeps improving speed, since they were lagging far behind, whereas Anthropic is increasing depth but introduces an explicit effort parameter. In the Opus 4.5 vs GPT-5.1 comparison, (he) went with Opus because of speed and because (he) uses agents as a pairing partner, whereas Codex feels more like someone you delegate to and come back later to review the work. But these lines are changing fast, which means we have to spend time and money continuously testing both.”
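For readers less familiar with the pass@N figures mentioned in this take, here is a minimal sketch of the commonly used unbiased pass@k estimator, 1 - C(n-c, k) / C(n, k), where n samples are drawn per task and c of them pass. The sample counts below are made up for illustration and do not come from any benchmark discussed here.

```python
from math import comb


def pass_at_k(n: int, c: int, k: int) -> float:
    """Probability that at least one of k samples, drawn from n (c passing), passes."""
    if not 0 <= c <= n:
        raise ValueError("c must be between 0 and n")
    if not 1 <= k <= n:
        raise ValueError("k must be between 1 and n")
    # comb(n - c, k) is 0 when fewer than k samples fail, giving pass@k = 1.
    return 1.0 - comb(n - c, k) / comb(n, k)


# Illustrative numbers only: 10 samples per task, 3 of them pass.
print(f"pass@1 = {pass_at_k(10, 3, 1):.3f}")  # 0.300
print(f"pass@5 = {pass_at_k(10, 3, 5):.3f}")  # 0.917
```

The gap between the two numbers is why a single headline score without its k is hard to compare across providers.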

🔬 Qwen3-Coder-Next Released - Alibaba released Qwen3-Coder-Next, a coding model that community members had been waiting for, building on the success of Qwen3-Coder on simpler, well-scoped programming tasks. (🙏 Gabriel Olympie, Enrico Piovano @ Goji)

🔥 Community take w/ Gabriel Olympie: “Early feedback, based on the AWQ version tested on Android app development: overall quite good and seemingly on par with GLM 4.7 in terms of raw intelligence, but it takes more steps to achieve the same results, making very atomic edits. Tool use is consistent and reliable, and it can operate Claude Code without mistakes, even completing a roughly 100-step sequence without errors. It is not very strong at UI design, so descriptions and specifications need to be very precise, otherwise the results are unattractive. The model is also quite stubborn: once it commits to a direction, it tends to stick with it even if it is wrong, making it highly sensitive to initial conditions and context. On a single RTX 6000 Pro, running with a 128k context window, it supports up to 13× concurrency (about 6.55× with a 256k context). Prompt evaluation runs at roughly 8,000–12,000 tokens per second, with generation around 150 tokens per second.”
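The two concurrency figures in this take are consistent with a roughly fixed token budget being shared across requests. The sketch below checks that arithmetic; treating concurrency as "total cache budget divided by context length" is an assumption about how the numbers were obtained, not something the quote states, and the function name is illustrative.

```python
def implied_token_budget(context_k: float, concurrency: float) -> float:
    """Total tokens (in thousands) held at once if every request uses full context."""
    return context_k * concurrency


budget_128k = implied_token_budget(128, 13)    # 13x concurrency at 128k context
budget_256k = implied_token_budget(256, 6.55)  # 6.55x concurrency at 256k context

gap = abs(budget_128k - budget_256k) / max(budget_128k, budget_256k)
print(f"128k context: ~{budget_128k:.0f}k tokens in flight")
print(f"256k context: ~{budget_256k:.0f}k tokens in flight")
print(f"relative gap: {gap:.1%}")
```

Both settings land near 1.67 million tokens in flight, so doubling the context window roughly halves the number of full-length requests the card can serve at once.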

📰 Claude Code’s /insights Command Deep Dive - Analysis revealed how Claude Code’s insights command works, with community members sharing mixed experiences depending on usage patterns. (🙏 Marie Thuret @ Tigo Labs)

🔥 Community take w/ Marie Thuret: “There are a lot of stats being shared. They try to surface features you might not be aware of and suggest additions to CLAUDE.md. On my side, though, there’s an overweighting of recent usage: it made a big deal out of one set of tasks I did today, which skews the advice in my view. It also apparently didn’t like that I was using it only in planning mode and then switching to another tool for implementation. The file is also too long; in this case, less is definitely not more. Maybe it works better with more typical usage patterns.”

Also:

💡 Agentic Engineering Practices - Comprehensive guide on engineering practices for working with AI agents, covering patterns and approaches for effective human-agent collaboration in software development workflows.

💡 Claude Code Tips from the Team - Boris Cherny shared tips sourced directly from the Claude Code team on how they use the tool differently, emphasizing there’s no single right way to use Claude Code and encouraging developers to find their own optimal workflows.

🛠️ Skills.sh Agent Skills Directory - Centralized hub launched for discovering and installing skills for AI agents, providing a simple way to upgrade Claude Code and other agents with new capabilities including skill-creator and vercel-react-best-practices. (🙏 Antoine Sueur @ Pletor)

🔥 Community take w/ Antoine Sueur: “The skill-creator and vercel-react-best-practices are quite useful. I’m checking the mcp-builder next, although it feels like it’s missing some more recent best practices.”

🔧 Antigravity IDE Improvements - Antigravity has improved significantly since launch and is appreciated for its agentic behavior, including solid browser interaction and strong performance when designing new projects from scratch. However, it is less effective during iterative work, particularly when fixing specific issues. Overall, it’s promising, and the generous free-plan credits make it easy to try. (🙏 Enrico Piovano @ Goji)

🔬 Building a C Compiler with Claude - Anthropic Engineering published insights on building a C compiler with agents. The article demonstrates advanced compiler construction techniques and Claude’s capabilities for complex systems programming tasks. (🙏 Thomas Walter @ Theodo)

🛠️ Coding Agent VMs on NixOS with microvm.nix - Guide on creating secure environments for coding agents using microVMs on NixOS, addressing the tedium of reviewing each command agents want to run and providing sandboxed execution environments. (🙏 Quentin Dubois)

Reinforcement Learning

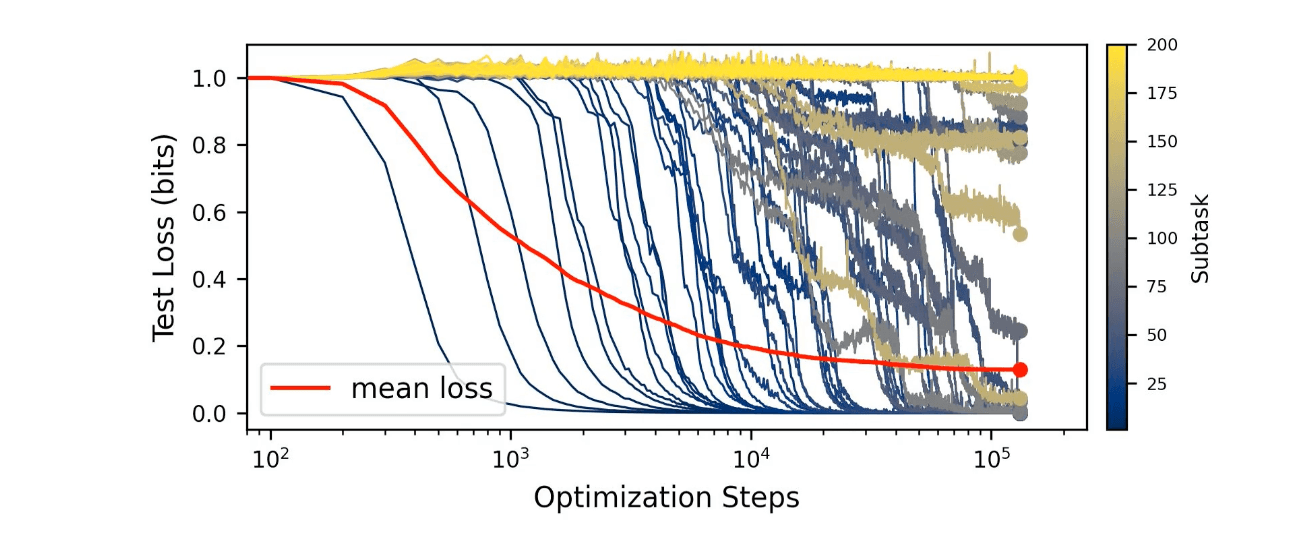

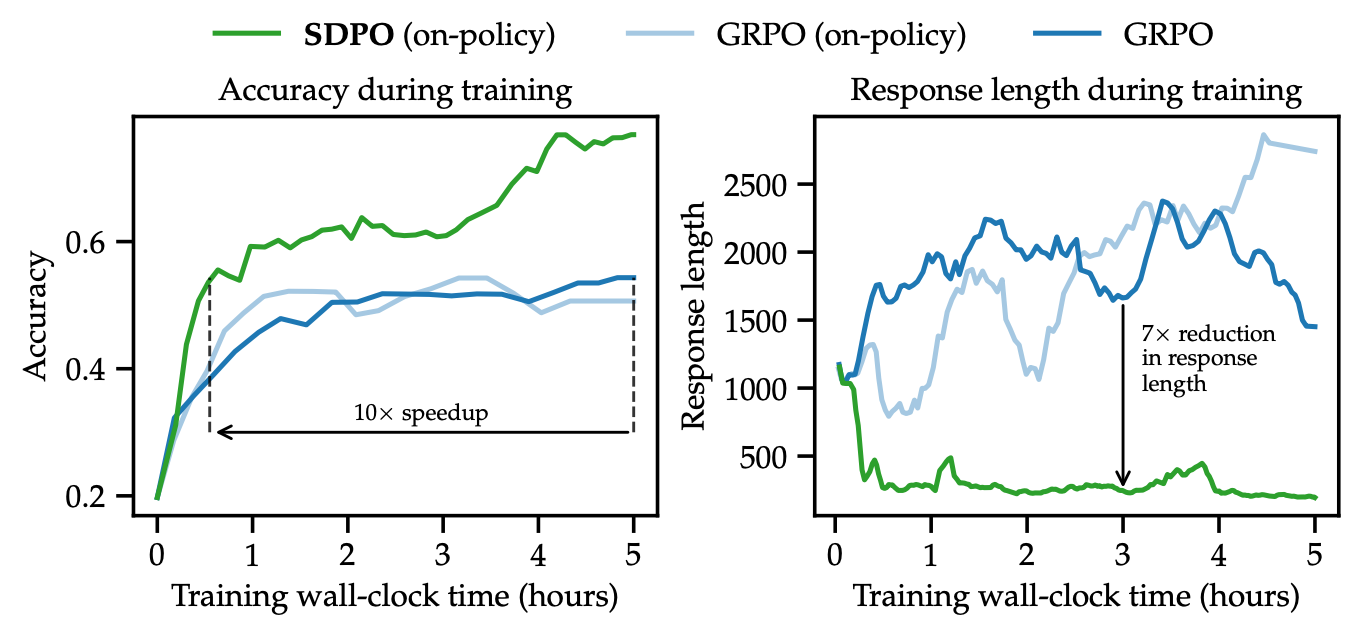

🔬 Reinforcement Learning via Self-Distillation - Researchers introduced a new post-training method as an alternative to RLVR, where the reward signal consists of rich textual feedback rather than binary success/failure, addressing the severe credit-assignment bottleneck in current reinforcement learning approaches. (🙏 Guillaume @ Andromede)

The model learns from its own mistakes by using error messages and feedback to teach itself what went wrong, eliminating the need for separate teacher models or reward systems.

Requires fewer training examples to reach higher accuracy across scientific reasoning, tool use, and competitive programming benchmarks compared to traditional binary reward methods.

During inference, solves difficult problems with 3x fewer attempts than standard approaches like generating multiple solutions and picking the best one.

Robotics, World AI

❤️ Humanity’s Last Machine Deep Dive - A visually stunning website and a comprehensive resource on humanoid robotics hardware and the technologies shaping our future, covering the current state of humanoid development and safety considerations around human–robot interaction.

New Members

🇫🇷 Gabrielle Prat (Alan) - Staff Data Scientist at Alan building fraud detection systems and an agentic platform to automate fraud investigation operations. Stack includes SageMaker, Python, Snowflake, and GenAI/agentic frameworks. Has many crazy fraud stories to share.

🇫🇷 Marie Thuret (Tigo Labs) - Co-founder & CPO at Tigo Labs building Simone, an AI coach rooted in cognitive science and Theory of Mind. Full-stack developer turned Product with 6 years at Algolia working on Developer Experience and AI/ML for search relevance.
