Your Dose of Reg.exe, Week 25 - HNY 🎇

DeepSeek's mHC architecture and Hooker's adaptation thesis challenge pure scaling. Major releases: Boltz-Pfizer biotech platform, LTX-2 video generation, DeepMind's geospatial AI.

Reg.exe is a global closed community of 260+ engineers, founders, and researchers interested in AI innovation, from San Francisco to Tokyo. Each week, we share the highlights of our discussions in a newsletter. If you’d like to join, write to join@welovesota.com

👉 Article originally posted on WeLoveSota.com

Events

🇫🇷 Engineering Night #11 Bio Edition in Paris (January 28) - Dust organized a specialized engineering gathering focused on biology and AI. The event featured a speaker from InstaDeep and offered deep technical talks combined with networking over beers and pizzas. (🙏 Jules Belveze, Sophie Monnier)

Autonomous Agents

🏙️ Skybridge framework released for building ChatGPT apps - Alpic released the first open-source framework adding missing DX features to the OpenAI Apps SDK, including type safety, easy testing, HMR, React-Query style hooks, and UI-to-LLM sync. (🙏 Nikolay Rodionov @ Alpic)

Biotech, Health, And Chemistry

🤝 Boltz announced partnership with Pfizer and $28M seed round - The company launched Boltz Lab, which offers frontier AI models and a cloud platform for designing better small molecules and proteins. It pairs open, high-performing models with scalable infrastructure so scientists get optimized candidates in hours, not weeks. (🙏 Sophie Monnier @ InstaDeep)

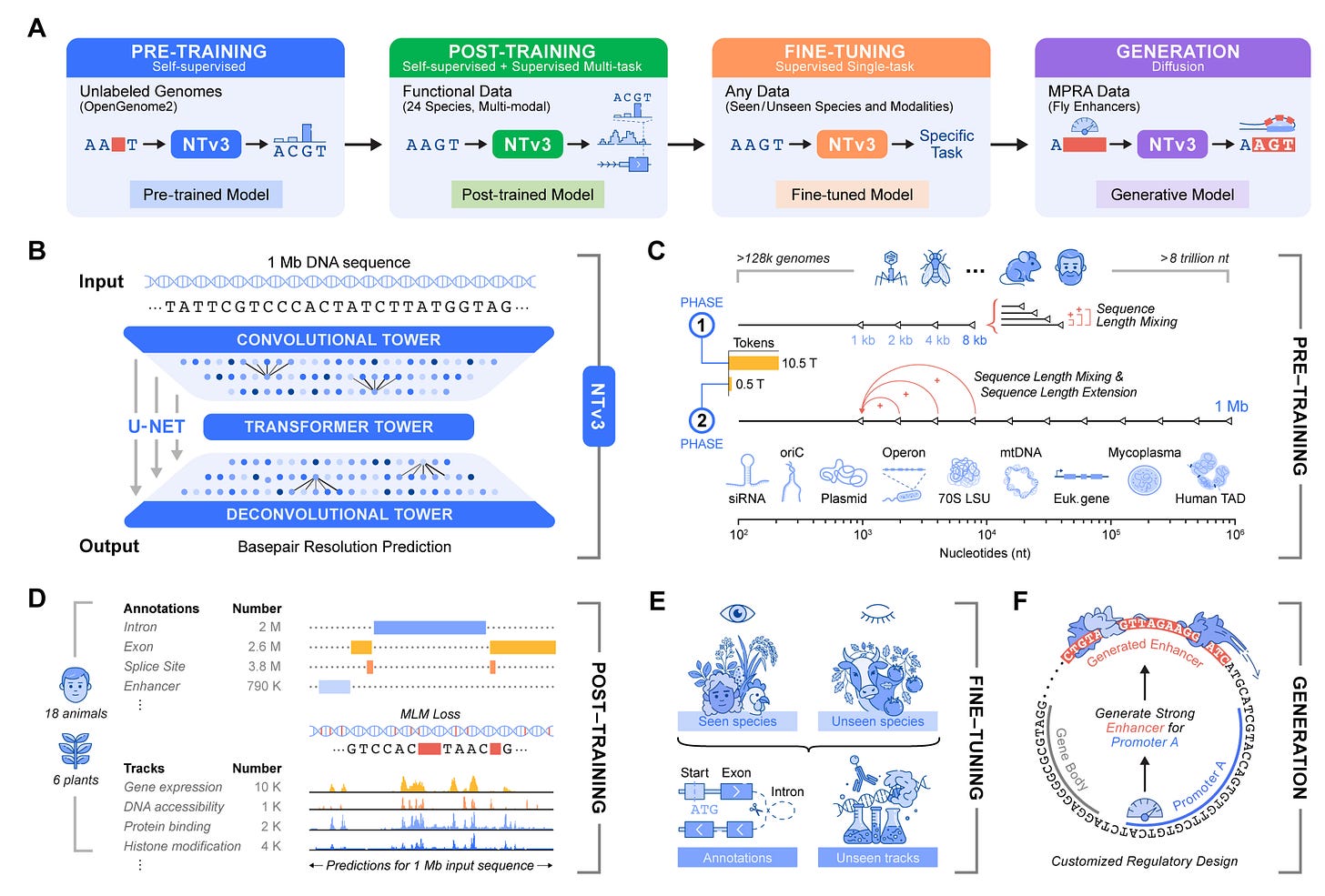

🧬 InstaDeep released Nucleotide Transformer v3 - Nucleotide Transformer v3 (NTv3) is a powerful AI model trained on DNA from many species that can read very long stretches of DNA, predict how they behave in cells, and even design new DNA switches (enhancers) with chosen activity levels, all within a single, shared system. (🙏 Ihab Bendidi @ Recursion)

🔥 OpenMed celebrated six months of building production-ready medical AI - The platform achieved significant milestones in healthcare NLP, offering accurate medical solutions without vendor lock-in or compliance challenges. The company focused on making medical AI accessible and cost-effective for healthcare organizations. (Congrats Maziyar Panahi!)

Image, Video & 3D

🍿 LTX-2 launched as production-grade video generation model - The video model from Lightricks claims to offer precise control, native 4K, high frame rates, and proven performance for long-form creative tasks. (🙏 Kevin Kuipers, Samuel McFadden @ Vid2Scene, Pierre Chapuis @ Finegrain - And thanks to Mathieu K. for the heads-up)

🔥 Community take: Feedback raised questions about prompt adherence compared to WAN, while noting faster generation speeds and a reasonable model size for training LoRAs.

📱 Finegrain launched iOS demonstration app for on-device image editing - The company shipped a TestFlight app featuring object erasing and shadow generation capabilities, with additional features including object insertion, background removal, and light management coming soon. The SDK will be released alongside new capabilities. (🙏 Pierre Chapuis @ Finegrain)

🌠 3DGS automatic LoD generation tool released - The CPU-based, retraining-free tool from Vid2Scene decimated 25M-Gaussian scenes to 500K Gaussians in under 10 minutes, solving common deployment issues with large Gaussian Splat scenes for VR and standard hardware. The tool will be integrated into the vid2scene API. (🙏 Samuel McFadden)

🕹️ Microsoft released TRELLIS.2 for 3D generation - The model offered native and compact structured latents for 3D generation tasks, advancing the state of open-source 3D content creation. 🔬 Paper (🙏 Kevin Kuipers)

Language Models

🔬 DeepSeek published Manifold-Constrained Hyper-Connections paper - The mHC architecture expanded residual stream width while maintaining stability through mathematical guardrails, delivering consistent accuracy gains with minimal added cost. The approach turned single-lane information flow into multi-lane highways while preventing model instability. (🙏 Willy Braun)

🔥 Community take w/ Julien Seveno and Benjamin Trom: The reactions are largely positive but measured. Everyone agrees that DeepSeek keeps producing strong papers, and that this one stands out for its rare combination of high-level math and very concrete low-level engineering, which makes it dense but also impressive.

There is some skepticism about whether these architectural optimizations are the modern equivalent of feature engineering, potentially becoming less relevant as models scale. That said, this concern is tempered by the idea that the real objective today is to decouple parameter growth from compute cost, keep gradients stable, and avoid representation drift. Seen this way, the paper fits squarely with current scaling constraints rather than fighting them.

Another key takeaway is that the paper reflects how AI research actually happens in practice: intuition and engineering first, math later as a formal justification. The consensus is that the idea likely emerged from practical frustrations with residual stream expressiveness and stability, then was formalized mathematically and optimized at the kernel level. Overall, even if incremental, the work is valued for its execution quality and its very realistic research style.
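
For readers who want a feel for the "mathematical guardrails", here is a minimal Python sketch. It assumes the constraint is that the matrix mixing the parallel residual streams is kept doubly stochastic (every row and every column sums to 1) through Sinkhorn-style normalization. That reading is our understanding of the paper, the names `sinkhorn` and `mix_streams` are hypothetical, and the numbers are made up; this is an illustration, not DeepSeek's code.

```python
import math


def sinkhorn(logits, iters=50):
    """Push a square matrix of logits towards a doubly stochastic matrix
    by alternately normalizing rows and columns (Sinkhorn-Knopp)."""
    n = len(logits)
    # Exponentiate so every entry is strictly positive.
    m = [[math.exp(v) for v in row] for row in logits]
    for _ in range(iters):
        # Make every row sum to 1.
        m = [[v / sum(row) for v in row] for row in m]
        # Make every column sum to 1.
        col = [sum(m[i][j] for i in range(n)) for j in range(n)]
        m = [[m[i][j] / col[j] for j in range(n)] for i in range(n)]
    return m


def mix_streams(mixing, streams):
    """Mix n residual streams (lists of floats) with an n x n matrix."""
    n, d = len(streams), len(streams[0])
    return [
        [sum(mixing[i][k] * streams[k][j] for k in range(n)) for j in range(d)]
        for i in range(n)
    ]


# Assumed toy setup: 3 parallel streams, each of width 2.
logits = [[0.5, -1.0, 0.2], [1.5, 0.0, -0.3], [-0.7, 0.8, 0.1]]
streams = [[1.0, 2.0], [3.0, 4.0], [5.0, 6.0]]

mixing = sinkhorn(logits)
mixed = mix_streams(mixing, streams)

# Columns of the mixing matrix sum to 1, so the total signal summed
# across streams is the same before and after mixing.
print([sum(s[j] for s in streams) for j in range(2)])
print([round(sum(s[j] for s in mixed), 6) for j in range(2)])
```

The point of the sketch: because each mixing matrix neither amplifies nor shrinks the total signal across lanes, stacking many such layers should not blow up or wash out the residual stream, which is one intuition for how wider streams can stay stable.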

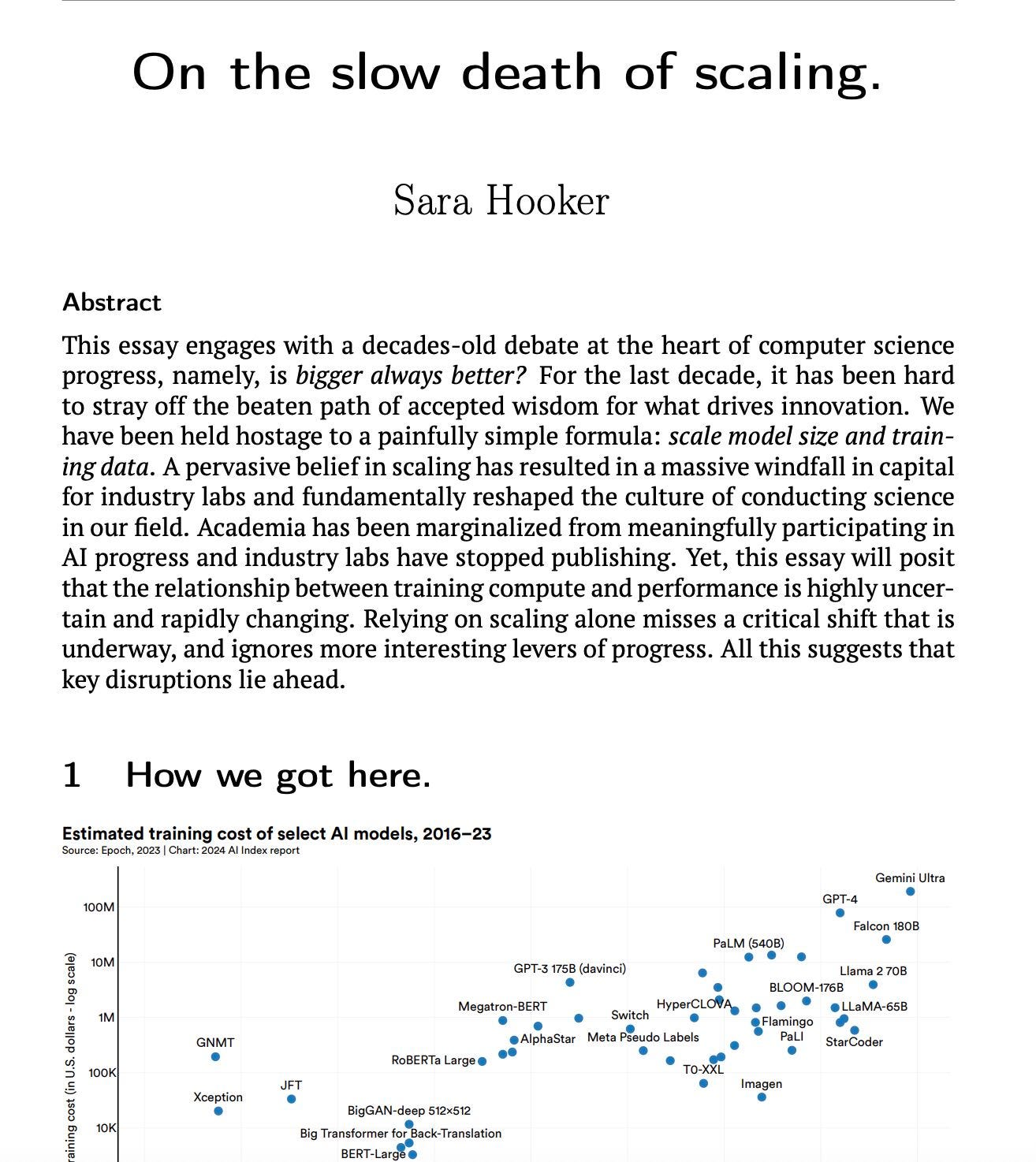

📈 Why adaptation is the next driver of AI progress - The piece from Sara Hooker argues that the long-dominant belief that progress in AI comes primarily from scaling training compute is increasingly questionable. The link between more compute and better performance is becoming uncertain, with growing evidence of diminishing returns, especially as models spend large amounts of compute learning rare, long-tail features. Instead of continued brute-force scaling, the author suggests that the biggest opportunities now lie in new learning spaces and in how models learn, adapt, and incorporate new knowledge from their environment. This shift also changes what is most interesting to work on as a computer scientist, and personally motivated the author’s decision to focus on adaptation rather than scale. (🙏 Victoire Cachoux)

MLOps

🔎 MCP Reticle debugger released - LabTerminal launched a tool for intercepting, visualizing, and profiling JSON-RPC traffic between LLMs and MCP servers in real time, with a claimed zero latency overhead. (🙏 Robert Hommes @ Moyai)

✖️ Tensormesh introduced for inference acceleration - The platform slashed AI inference costs and latency by up to 10x with enterprise-grade caching using LMCache technology, developed by University of Chicago researchers. (🙏 Robert Hommes @ Moyai)

Programming

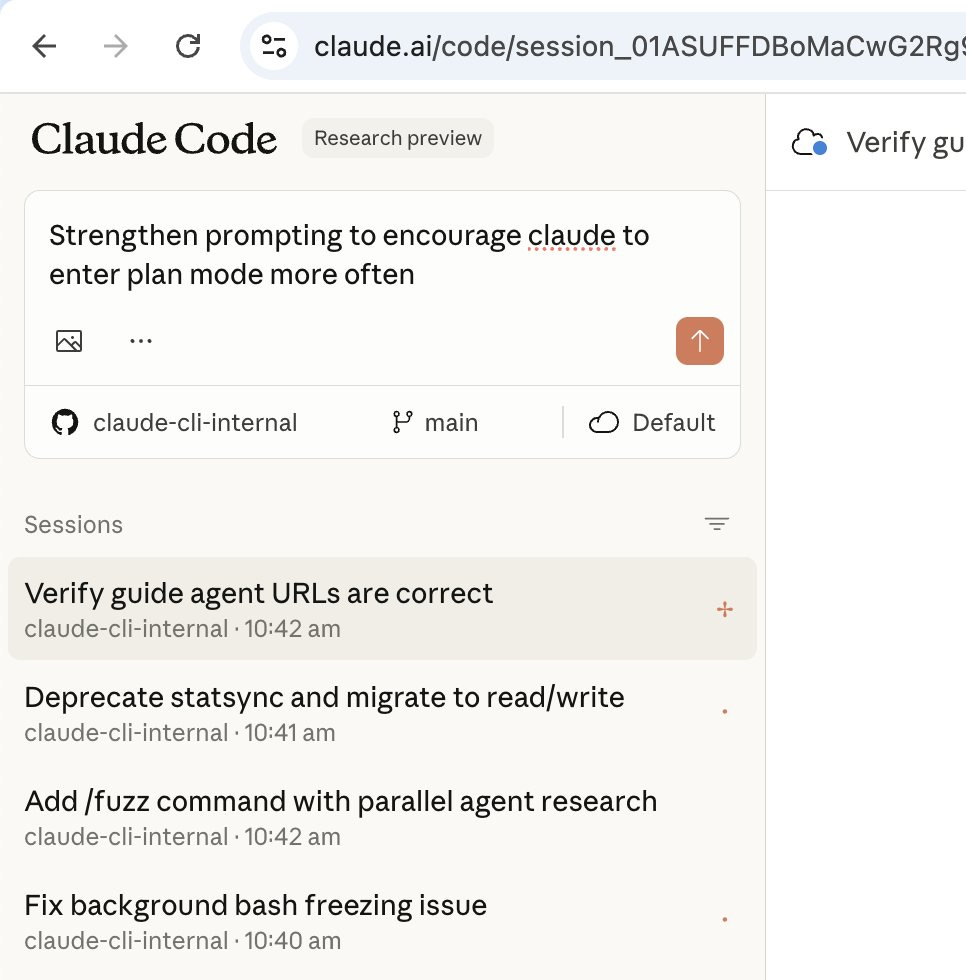

🍜 Noodlbox achieved 91% reduction in bash calls for Claude Code - After nearly two years of development, the deterministic context retrieval tool demonstrated empirical success on SWE Bench tasks using Claude Code with Sonnet 4.5. The platform showed significant improvements in agent performance by providing better tools for context discovery. Plans include running the full SWE Bench on Opus, Sonnet, Haiku and Codex 5.2. (🙏 Youssef Tharwat @ Noodlbox)

⚙️ Boris Cherny shared Claude Code setup - The creator of Claude Code demonstrated a surprisingly vanilla setup, emphasizing that the tool works great out of the box with minimal customization needed. (🙏 Quentin Dubois @ OSS Ventures)

Also:

⚡️ HGMem released for RAG improvement - An implementation of hypergraph-based memory for improving multi-step RAG with long-context complex relational modeling became available.

📺 15-year-old screen sharing tech outperformed modern H.264 pipeline - Helix ML discovered that JPEG screenshots delivered better results than their WebCodecs H.264 pipeline for large-scale screen sharing deployment, demonstrating that simpler solutions sometimes work better.

Robotics, World AI

🌎 AlphaEarth launched as planetary-scale geospatial AI - Google DeepMind’s foundation model combined petabytes of Earth imagery and location information into 64-dimensional embeddings for every area on Earth, enabling a unified dataset for understanding global patterns. Thanks to Yvann Barbot from Terra-Lab for the tutorial on accessing the model through Google Earth Engine. (🙏 Yvann Barbot)
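
To make "64-dimensional embeddings for every area" more concrete, here is a minimal Python sketch of what such vectors allow: comparing two places by the angle between their embeddings. The vectors below are made-up placeholders, not real AlphaEarth data, and the helper names `cosine_similarity` and `most_similar` are hypothetical. Treat it as an illustration of similarity search under those assumptions, not as the model's API.

```python
import math


def cosine_similarity(a, b):
    """Cosine of the angle between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


def most_similar(query, candidates):
    """Name of the candidate whose embedding is closest to the query."""
    return max(candidates, key=lambda name: cosine_similarity(query, candidates[name]))


# Placeholder 64-dimensional embeddings (assumed values, for illustration only).
reference_areas = {
    "forest": [1.0] * 32 + [0.0] * 32,
    "water": [0.0] * 32 + [1.0] * 32,
}
unknown_area = [0.9] * 32 + [0.1] * 32

print(most_similar(unknown_area, reference_areas))
```

With real embeddings, the same comparison could be used to look for areas that resemble a known reference site, or to flag a location whose embedding shifts from one year to the next.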

Other Topics

🧠 Research paper explored computational bounds of learning - The study examined fundamental questions about extracting information from data: whether new information can be constructed from deterministic transformations, and how to evaluate learnable content without downstream tasks. (🙏 Julien Seveno)

🎙️ Replika discussed trust in AI platforms with Lior Oren - Podcast episode explored trust as an operating metric in AI companions, examining how trust functions in human-AI relationships. (🙏 Lior Oren @ Replika)

🗳️ Skreen launched for private social media history review - The platform enables users to privately analyze their social media archives (LinkedIn and X) entirely in the browser, with no accounts, storage, or tracking. All data processing happens locally on the user’s device. (🙏 Tejas Chopra @ Netflix)

📚 Book recommendation shared - “What Is Intelligence?: Lessons from Evolution, Computing, and Minds” by Blaise Agüera y Arcas discussed connections between AI advancement and the predictive brain hypothesis. (🙏 Kevin Kuipers via Willy Braun)

Our New Members

🇫🇷 Jérémie Bordier (XHR) - CEO at XHR building tech and AI for the gaming industry. Former international-level Quake 3 player.

🇫🇷 Quentin Dubois (OSS Ventures) - CTO at OSS Ventures building software companies for manufacturing. Launched 30 companies in the last 6 years, lived in the US, Spain, Mexico and Brazil. Loves skiing and long bike trips.

🇫🇷 Khaled Maâmra (Edgee) - Research Scientist at Edgee working on an AI gateway at the edge for more efficient LLM usage. Background in mathematics and computer science, previously worked in public research, the financial sector and manufacturing.

🇯🇵 Victoire Cachoux (Iktos) - Senior Application Scientist at Iktos working on AI and robotics for small molecule drug discovery. Studied maths and physics, worked as researcher in bioinformatics. TT-RPG Game Master living in Osaka, Japan. Loves concerts (metal and hard rock), exercising in nature, reading, and cooking.