Your Dose of Reg.exe, Week 24

GPT-5.2 shows frontier performance with mixed reviews. scPRINT-2 advances single-cell genomics. Yann LeCun raises €500M for world models. Gemini 3 Flash tops agentic benchmarks.

Reg.exe is a global closed community of 260+ engineers, founders, and researchers interested in AI innovation, from San Francisco to Tokyo. Each week, we share the highlights of our discussions in a newsletter. If you’d like to join, write to join@welovesota.com

👉 Article originally posted on WeLoveSota.com

Autonomous Agents

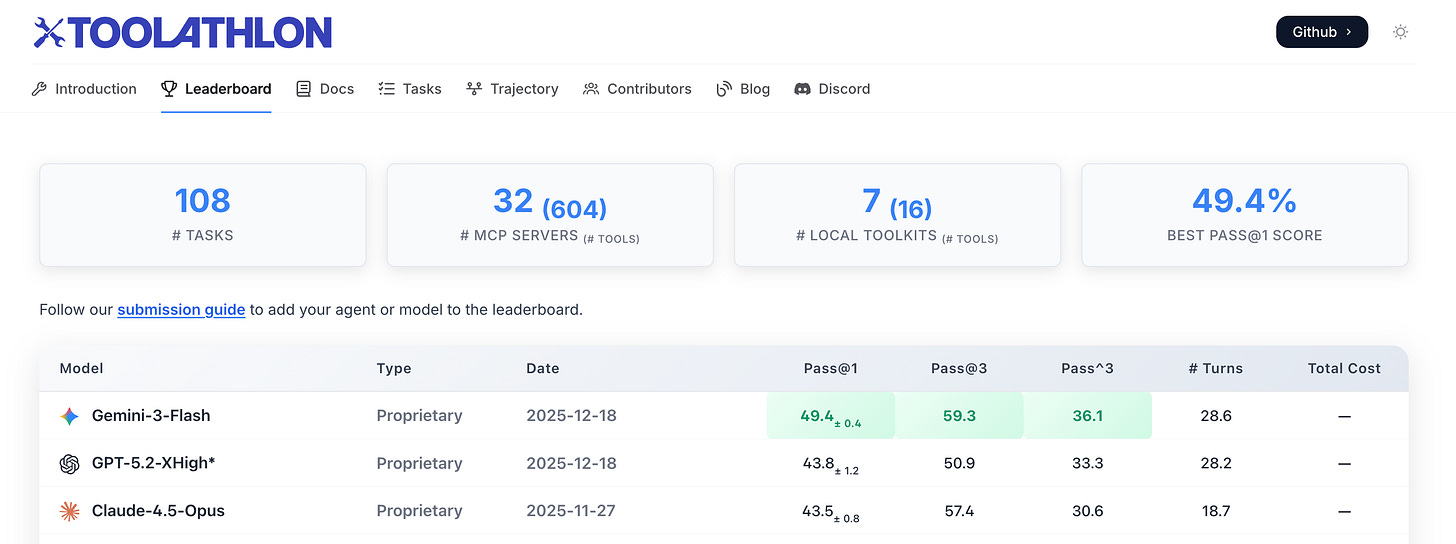

🏆 Gemini 3 Flash Tops Toolathlon Leaderboard - Gemini 3 Flash ranks first on the Toolathlon benchmark for agentic tool-use capabilities, reflecting Google’s sustained investment and progress in reinforcement learning and agent execution.

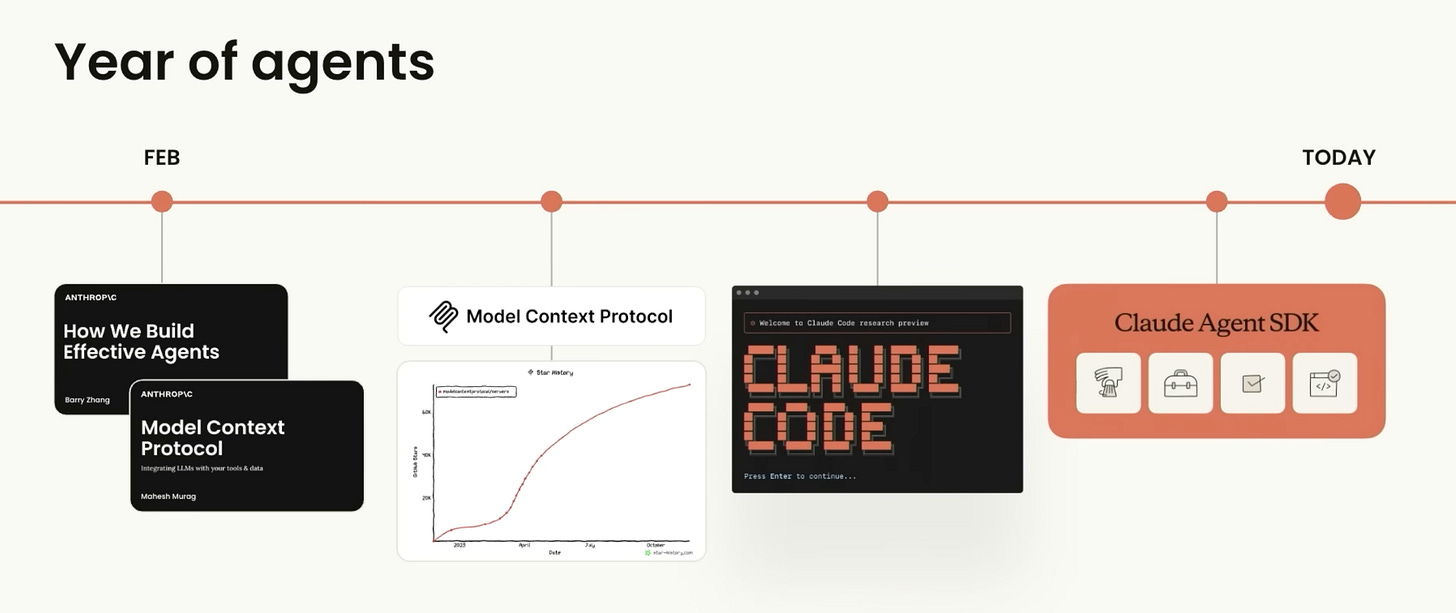

🧠 Don’t build agents, build Skills instead - Anthropic researchers Barry Zhang and Mahesh Murag presented their approach to agent development. Key takeaways include:

Build skills, not monolithic agents: agents should orchestrate skills rather than contain all logic.

Skills are small, well-scoped, with minimal standardized interfaces so they can be reused and composed.

Complex behavior should emerge from chaining skills, not from large, brittle agent graphs.

Keep infra concerns (routing, retries, monitoring) generic and separate from domain-specific skill behavior.

Design skills so they can be tested, observed, and improved independently of the overall agent system.

Aim for agents that can create, refine, and generalize new skills from their own trajectories and feedback.

Over time, a growing skill library compounds value, letting new workflows start from rich existing capabilities.
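To make the takeaways above concrete, here is a minimal sketch of skills as small, composable units with generic infrastructure kept separate. It is not the interface presented in the talk: the `Skill` dataclass, the `with_retries` wrapper, the `chain` helper, and the two toy skills are all hypothetical names chosen for illustration.

```python
from dataclasses import dataclass
from typing import Any, Callable, Dict, List, Optional

State = Dict[str, Any]


@dataclass(frozen=True)
class Skill:
    """Hypothetical minimal interface: a named function from state to state."""
    name: str
    run: Callable[[State], State]


def with_retries(skill: Skill, attempts: int = 3) -> Skill:
    """Generic infra concern: a retry wrapper that knows nothing about the domain."""
    def wrapped(state: State) -> State:
        last_error: Optional[Exception] = None
        for _ in range(attempts):
            try:
                return skill.run(state)
            except Exception as exc:  # broad on purpose for this sketch
                last_error = exc
        raise RuntimeError(f"skill {skill.name!r} failed after {attempts} attempts") from last_error
    return Skill(skill.name, wrapped)


def chain(skills: List[Skill], state: State) -> State:
    """Complex behavior comes from running small skills in sequence."""
    for skill in skills:
        state = skill.run(state)
    return state


# Two toy domain skills, each testable on its own.
def _extract_numbers(state: State) -> State:
    numbers = [int(tok) for tok in state["text"].split() if tok.isdigit()]
    return {**state, "numbers": numbers}


def _sum_numbers(state: State) -> State:
    return {**state, "total": sum(state["numbers"])}


extract_numbers = Skill("extract_numbers", _extract_numbers)
sum_numbers = Skill("sum_numbers", _sum_numbers)

result = chain([with_retries(extract_numbers), sum_numbers],
               {"text": "buy 3 apples and 4 pears"})
print(result["total"])
```

Because each skill is a plain function behind a tiny interface, it can be unit-tested alone, and the retry logic can be swapped without touching any domain code.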

😏 Structured outputs create false confidence - Analysis from BAML argued that provider-level structured outputs systematically degrade response quality compared to free-form text plus parsing. The constrained decoding approach forces models to prioritize output conformance over output quality. Community members confirmed experiencing this issue in production. (🙏 Vaibhav Gupta @ BAML, Lior Oren)
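For readers who want to picture the "free-form text plus parsing" alternative, here is a rough standard-library sketch. It is not BAML's parser: the `REQUIRED_FIELDS` schema, the helper names, and the sample reply are assumptions made up for this example.

```python
import json
from typing import Any, Dict

# Hypothetical schema for the illustration: field name -> expected Python type.
REQUIRED_FIELDS = {"sentiment": str, "confidence": float}


def extract_first_json_object(text: str) -> Dict[str, Any]:
    """Scan free-form model output and decode the first valid JSON object in it."""
    decoder = json.JSONDecoder()
    start = text.find("{")
    while start != -1:
        try:
            obj, _end = decoder.raw_decode(text, start)
            return obj
        except json.JSONDecodeError:
            # Not valid JSON at this brace; try the next one.
            start = text.find("{", start + 1)
    raise ValueError("no JSON object found in model output")


def validate(obj: Dict[str, Any]) -> Dict[str, Any]:
    """Check the parsed object after generation instead of constraining decoding."""
    for field, expected_type in REQUIRED_FIELDS.items():
        if field not in obj:
            raise ValueError(f"missing field: {field}")
        if not isinstance(obj[field], expected_type):
            raise ValueError(f"field {field!r} should be {expected_type.__name__}")
    return obj


# A made-up reply: the model reasons in prose first, then emits the payload.
reply = (
    "The review is mostly positive, with one complaint about shipping.\n\n"
    'Final answer: {"sentiment": "positive", "confidence": 0.92}'
)
parsed = validate(extract_first_json_object(reply))
print(parsed["sentiment"])
```

The design choice is that the model writes freely and conformance is checked afterwards, so a failed check can trigger a retry rather than silently shaping the answer.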

🧰 DeepCode: open agentic coding - DeepCode is an open-source multi-agent coding system that turns research papers and natural language specs into production-ready code for algorithms, web frontends, and backends. (🙏 David Martins Gonçalves @ Notify)

🔥 Community take: A few members working on agentic systems are planning to try it very soon.


🛠️ Anthropic Tasks Mode coming with multiple starting points - Anthropic will add 5 different starting points to its upcoming Tasks Mode: Research, Analyze, Write, Build, and Do More. Features include granular controls and a new sidebar for tracking tasks’ progress and working with Claude’s context.

🤖 OpenAI quietly adopting Skills - OpenAI began implementing skills in ChatGPT and Codex CLI, following Anthropic’s approach.

Skills are folders containing a `skill.md` file plus optional code/resources that define how the model should operate in a specific domain.

The model explicitly “reads” `skill.md` at the start of a task and then follows the documented multi-step procedure as a reusable playbook.

Skills provide a lightweight, file-based way to give models durable, tool-aware procedures that are more robust and reusable than ad hoc prompts.
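As a rough illustration of the file-based idea, the sketch below builds a throwaway skill folder, reads its `skill.md`, and places the playbook ahead of the task. The folder name, the file contents, and the `load_skill` / `build_prompt` helpers are hypothetical; this is not how ChatGPT or Codex CLI actually load skills.

```python
import tempfile
from pathlib import Path
from typing import Any, Dict


def load_skill(skill_dir: Path) -> Dict[str, Any]:
    """Read skill.md and list any other files shipped alongside it."""
    instructions = (skill_dir / "skill.md").read_text(encoding="utf-8")
    resources = sorted(p.name for p in skill_dir.iterdir() if p.name != "skill.md")
    return {"name": skill_dir.name, "instructions": instructions, "resources": resources}


def build_prompt(skill: Dict[str, Any], task: str) -> str:
    """Put the playbook in front of the task, mimicking 'read skill.md first'."""
    return f"# Skill: {skill['name']}\n{skill['instructions']}\n# Task\n{task}"


# Create a temporary skill folder purely for the demo (all contents are made up).
with tempfile.TemporaryDirectory() as tmp:
    skill_dir = Path(tmp) / "csv-report"
    skill_dir.mkdir()
    (skill_dir / "skill.md").write_text(
        "1. Load the CSV.\n2. Summarize each column.\n", encoding="utf-8"
    )
    (skill_dir / "summarize.py").write_text("# helper script placeholder\n", encoding="utf-8")

    skill = load_skill(skill_dir)
    prompt = build_prompt(skill, "Report on sales.csv")

print(prompt)
```

The point is that the procedure lives in a versionable file rather than in an ad hoc prompt, so it can be reviewed, reused, and improved like any other artifact.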

Biotech, Health, And Chemistry

🔥 scPRINT-2 Foundation Model Released - Community member Jeremie Kalfon released scPRINT-2, a 20M-active-parameter single-cell foundation model trained on 350M cells across 16 species, 300 tissues, and 500 cell types. The model represents a significant advancement in single-cell genomics foundation models. 🔬 Paper Announcement (🙏 Jeremie Kalfon, Leonard Strouk)

Denoising-style pretraining with unnormalized counts and auxiliary classification targets consistently outperforms masked modeling and heavily normalized inputs.

Dataset diversity across organisms, tissues, and assays provides larger gains than simply adding more cells, especially when combined with augmentations like variable context and meta-cells.

Architectural changes such as ESM-based gene tokenization, graph-based neighbor encoding, and XPressor-style compression jointly improve both denoising and downstream embedding quality.

The model supports parameter-efficient fine-tuning that matches or exceeds supervised baselines and can correct expert misannotations while remaining fully open in data, code, and weights.

🌍 Bioptimus Unveils M-Optimus World Model - Bioptimus announced their new multimodal model M-Optimus, representing a transformative step toward AI-powered virtual tissues, patient digital twins, and a new era of data-driven biomedical research. (🙏 Sophie Monnier @ InstaDeep, X-IA)

😷 Gene therapy trial safety concerns - A fatal adverse event in an early-stage clinical trial of a brain-targeting gene therapy vector has highlighted unresolved safety risks around engineered AAVs, particularly for crossing the blood–brain barrier, and exposed the limits of current preclinical models, prompting renewed calls for caution and better data sharing.

🔥 Community take w/ Felix Raimundo @ Tychobio: “An interesting article on the issues currently faced in gene therapy delivery, and why I am extremely wary of teams working on delivery and AAVs.”

💰 Analysis of Eli Lilly’s tech-like valuation - In-depth analysis explored how Eli Lilly achieved a tech PE multiplier not through rebranding but by moving toward a tech business model with high scalability and subscription-based services. The shift represents a fundamental change in pharma business models. (🙏 Felix Raimundo)

Image, Video And 3D

🎛️ Kling VIDEO 2.6 Motion Control Released - Kling released VIDEO 2.6 with advanced motion control capabilities, copying any action with perfect lip-sync, lifelike motion, and expressive gestures. The model reportedly outperformed Wan 2.2-Animate, Act-Two, and DreamActor 1.5 across all metrics.

🌁 Qwen Image Layered Released - Qwen-Image-Layered is a generative model that decomposes a single input image into multiple RGBA layers so each semantic component becomes independently editable while preserving overall visual fidelity.

👁️ T5Gemma 2 for Next-Gen Image Generation - T5Gemma 2 is a multilingual, multimodal encoder–decoder family (270M, 1B, 4B) that takes text and images as input and generates text, with open weights. It uses tied encoder–decoder embeddings, a merged self/cross-attention decoder, a lightweight vision encoder, and Gemma 3’s long-context attention to support 128K tokens and 140+ languages efficiently.

🔥 Community take: For Gabriel Olympie, this may be the grounding for the next generation of image generation models.

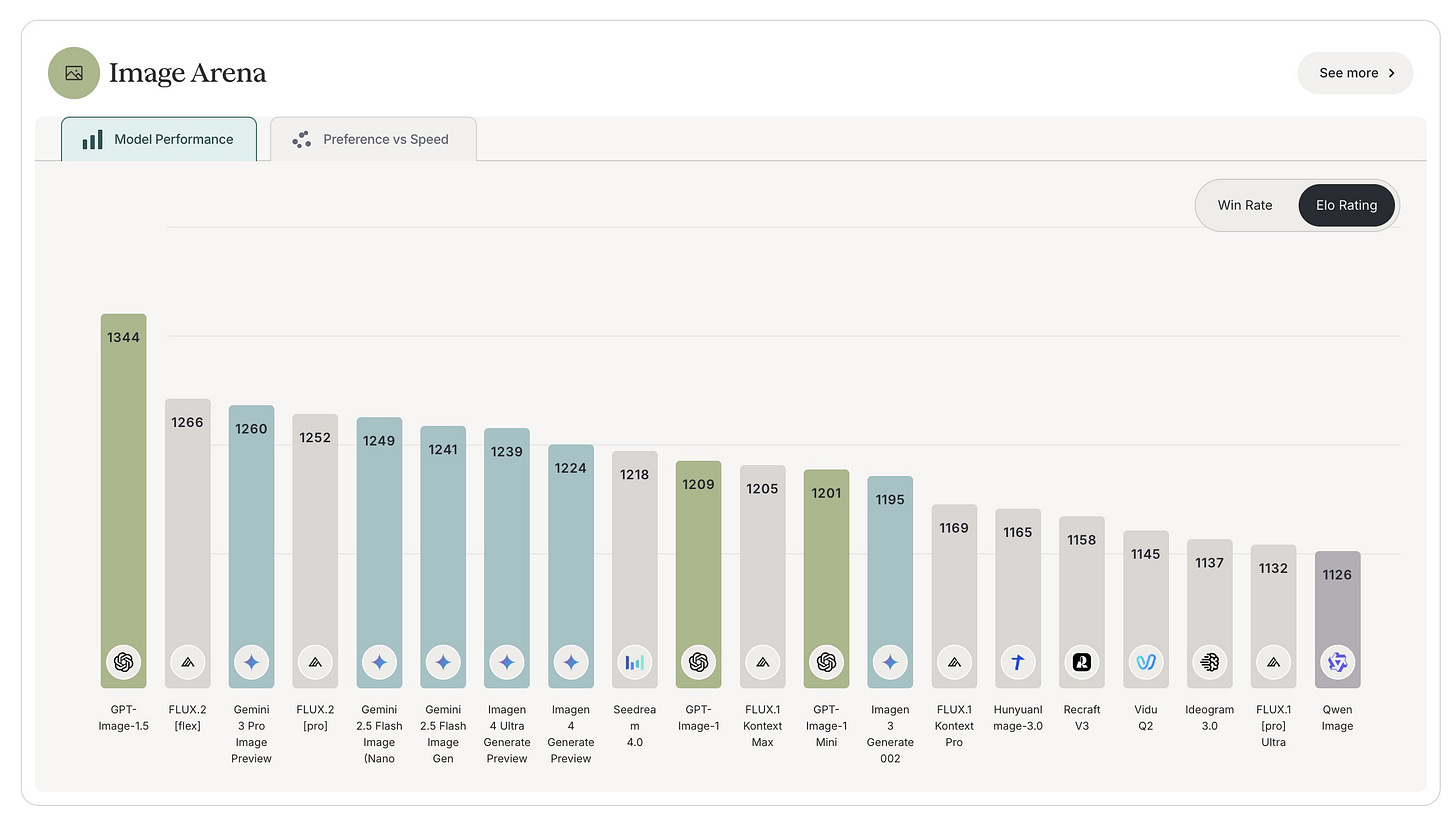

👁️ ChatGPT Images Powered by GPT-Image-1.5 - OpenAI released new ChatGPT Images powered by GPT-Image-1.5, delivering more precise edits, consistent details, and image generation up to 4× faster. The model topped the Artificial Analysis text-to-image leaderboard. (🙏 Alvaro Lamarche Toloza @ Mago, Kevin Kuipers)

🔥 Community take: There is skepticism, with many finding Nano Banana more photorealistic, less “AI-glossy,” and more faithful than GPT models, a view supported by the samples. This highlights a broader issue: image models are often evaluated on quantitative leaderboards like Image Arena, while user perception is qualitative, making such benchmarks poorly aligned with how creatives actually judge results.

Infrastructure

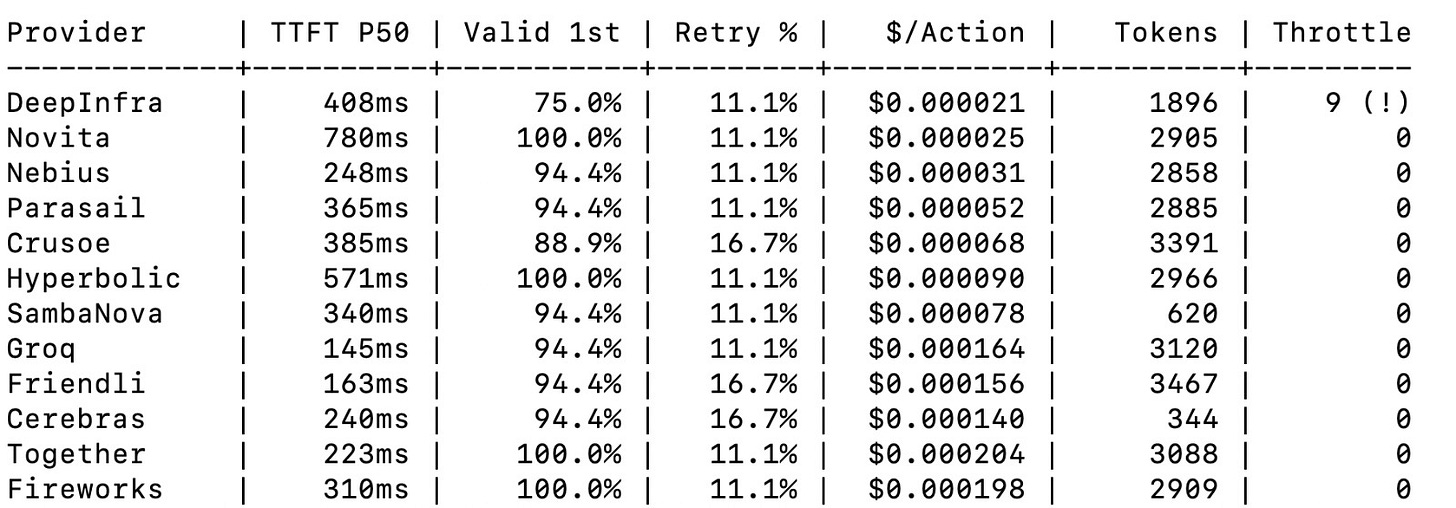

📊 LLM inference provider benchmark results – Tejas Chopra (Netflix) has shared a glimpse of his work in progress on a comprehensive benchmark of 12 LLM inference providers on Llama 3.3 70B using agent-style microtasks. It revealed significant performance differences.

The benchmark focuses on agent-specific behaviors such as routing, tool argument extraction, JSON repair, fan-out and merge, and stop-early token efficiency, rather than traditional benchmarks.

Next step: Tejas plans to rerun the benchmark over a 24-hour window instead of short 10-minute runs to reduce randomness and better observe stability. He will increase concurrency and the number of MCP tools to stress agent workflows and study behavior under heavier load. This is aimed at going deeper into KV cache management, GPU pinning, and scalability limits, and will feed into a more complete blog post with improved plots and analysis.

👉 Complete results coming soon in a blog post from Tejas!

🔬 FlashAttention and GPU optimization deep-dive - Community member Amine Dirhoussi wrote a comprehensive write-up on FlashAttention and GPU optimization, going beyond the papers to optimize at the PTX level and examine how hardware handles memory access. The work involved learning Triton and extensive profiling to build a rigorous understanding of low-level GPU behavior. (🙏 Amine Dirhoussi @ Quivr)

FlashAttention improves performance by operating on small blocks that fit in fast on-chip memory rather than materializing the full attention matrix.

Naïve Triton implementations remain inefficient due to repeated reads and writes to global GPU memory.

Retaining Q blocks and intermediate results in fast memory while streaming K/V significantly reduces memory traffic.

Careful shared-memory layout is essential, as poor access patterns can still lead to costly bank conflicts.
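The block-wise idea in the points above can be sketched in plain Python for a single query vector. This only illustrates the arithmetic (a running max and running sum that let K/V be streamed in blocks); it says nothing about on-chip memory, Triton, or PTX, and it is not code from Amine's write-up. The function names, the toy data, and the omission of the usual 1/sqrt(d) scaling are choices made for this example.

```python
import math
from typing import List

Vector = List[float]


def dot(a: Vector, b: Vector) -> float:
    return sum(x * y for x, y in zip(a, b))


def naive_attention(q: Vector, keys: List[Vector], values: List[Vector]) -> Vector:
    """Reference version: materializes every score before the softmax."""
    scores = [dot(q, k) for k in keys]
    top = max(scores)
    weights = [math.exp(s - top) for s in scores]
    total = sum(weights)
    dim = len(values[0])
    return [sum(w * v[i] for w, v in zip(weights, values)) / total for i in range(dim)]


def blockwise_attention(q: Vector, keys: List[Vector], values: List[Vector],
                        block_size: int = 2) -> Vector:
    """Stream K/V in blocks, keeping only a running max, sum, and accumulator."""
    running_max = -math.inf
    running_sum = 0.0
    acc = [0.0] * len(values[0])
    for start in range(0, len(keys), block_size):
        k_block = keys[start:start + block_size]
        v_block = values[start:start + block_size]
        scores = [dot(q, k) for k in k_block]
        new_max = max(running_max, max(scores))
        # Rescale earlier partial results to the new max (0.0 on the first block).
        rescale = math.exp(running_max - new_max)
        weights = [math.exp(s - new_max) for s in scores]
        running_sum = running_sum * rescale + sum(weights)
        acc = [a * rescale + sum(w * v[i] for w, v in zip(weights, v_block))
               for i, a in enumerate(acc)]
        running_max = new_max
    return [a / running_sum for a in acc]


q = [1.0, 0.5]
keys = [[0.2, 0.1], [1.5, -0.3], [0.0, 2.0], [-1.0, 0.4], [0.7, 0.7]]
values = [[1.0, 0.0], [0.0, 1.0], [2.0, 2.0], [-1.0, 3.0], [0.5, 0.5]]

reference = naive_attention(q, keys, values)
tiled = blockwise_attention(q, keys, values, block_size=2)
print(max(abs(a - b) for a, b in zip(reference, tiled)))
```

Both functions compute the same softmax-weighted average; the block-wise one never holds more than `block_size` scores at a time, which is the property a real kernel exploits to stay in fast memory.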

Language Models

📊 GPT-5.2 analysis: frontier performance with caveats - A comprehensive analysis of GPT-5.2 highlighted model fatigue in the market, strong benchmark performance, cheap base pricing but expensive Pro/Thinking modes, very slow speeds, and a rushed-release feel. Despite these concerns, the model showed stronger reasoning and better reliability on long, complex tasks. Community members reported mixed experiences, with some finding its coding work sloppy compared to Opus 4.5. On the same topic, GPT-5.2 may ace the ARC-AGI reasoning benchmark, yet it showed less impressive results on other standard benchmarks, raising questions about general capability versus specific task performance. (🙏 Kevin Kuipers, Louis Choquel @ Pipelex)

🧠 NVIDIA Nemotron 3 Family Released - Nemotron 3 is a family of open language models (Nano, Super, Ultra) designed for efficient, high-accuracy agentic, reasoning, and conversational applications, with long-context support up to 1M tokens. The Nano model is released first, offering a 3.2B active-parameter hybrid Mamba–Transformer MoE architecture that outperforms larger open models on benchmarks while being significantly more cost-efficient in inference, along with open weights, training recipes, and accompanying datasets.

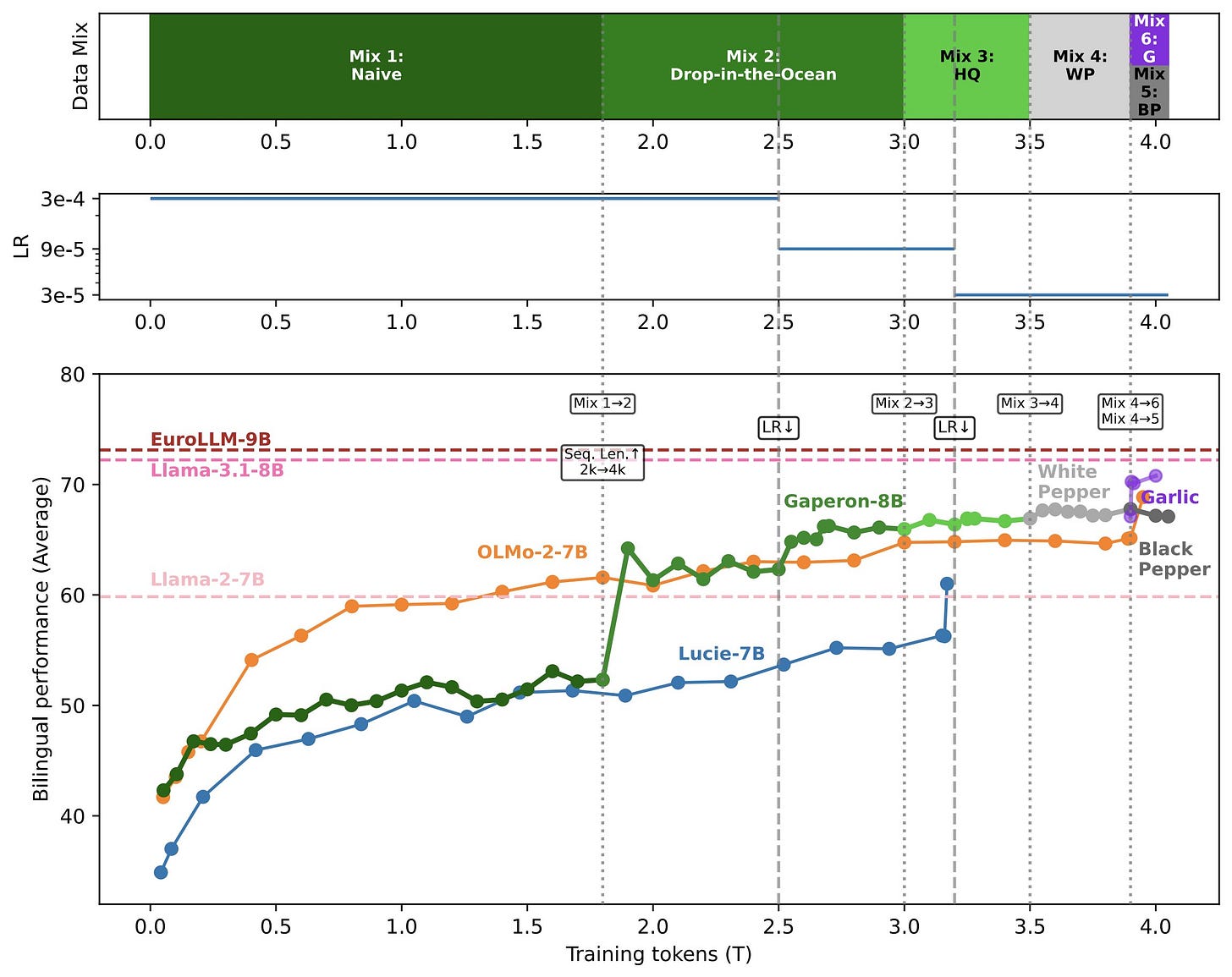

🧠 Gaperon: Open French LLM suite - Community member Wissam Antoun released Gaperon, a fully open-source French LLM suite with models at 1.5B, 8B, and 24B parameters trained on 2-4 trillion tokens of custom data. While not state-of-the-art, it represents the second-best European OSS model of its size, developed by just two researchers. (🙏 Wissam Antoun)

Also:

⚡️ Gemini 3 Flash Released - Google released Gemini 3 Flash (already mentioned in #AutonomousAgents) with an improved cost-performance ratio. (🙏 Gabriel Olympie)

⚡️ Xiaomi MiMo V2 Flash Model - Xiaomi released MiMo V2 Flash, a new model with associated research paper. 🔬 Paper (🙏 Etienne Balit)

🦊 Two Years of AI at Mozilla - Recap of Mozilla’s AI development journey in Firefox, including multiple AI features that run entirely on-device without cloud dependencies. (🙏 Pierre Chapuis @ Finegrain)

Programming

🧑‍💻 GPT-5.2 in Cursor: Mixed First Impressions - Early testing of GPT-5.2 in Cursor revealed it to be very capable and faster than 5.1 Codex, with an excellent capability/cost ratio and nice UI taste. However, Opus 4.5 remained the best in almost all aspects except speed, where Composer 1 excelled. (🙏 Kasra Aliyon @ Kopilo)

🐍 Ty: Extremely Fast Python Type Checker - Astral (creators of uv) released Ty, an extremely fast Python type checker and language server written in Rust, designed as an alternative to mypy, Pyright, and Pylance. Astral presents the Beta release as production-ready and uses Ty exclusively in its own projects. (🙏 Ihab Bendidi @ Recursion)

Robotic, World AI

🕹️ NitroGen: Foundation Model for Gaming Agents - Researchers introduced NitroGen, an open foundation model for generalist gaming agents. The release includes open access to the largest action-labeled gameplay video dataset (40K hours across 1,000+ game titles) and a universal simulator for measuring cross-game generalization.

🦾 Runway’s GWM-1 for Robotics and Entertainment - Runway’s GWM-1 family of video-generation models responds to user input in real time while producing scenes that remain consistent with the laws of physics, making it applicable to both robotics and entertainment.

🦾 Gemini Robotics Policies in Veo World Simulator - Google’s Gemini Robotics team demonstrated a world model for robotics capable of simulating realistic physics and environments for evaluating and training robot policies. The system enables testing robotics behaviors in simulation before real-world deployment.

Other Topics

🧠 Yann LeCun raising €500M at €3B valuation - Yann LeCun is reportedly raising €500M at a ~€3B valuation for his new startup Advanced Machine Intelligence (AMI), launching in early 2026 and focused on building world models. Alex LeBrun, formerly of Nabla, has been appointed CEO, alongside an exclusive AMI–Nabla partnership to advance agentic healthcare AI. (🙏 Pierre Chapuis)

🎙️ French Podcast on AI competition - A new French podcast episode discussed the shift from the “who has the best algorithm?” era to the “who has the best compute factory?” era, exploring how compute became a strategic advantage comparable to electricity in the 20th century. (🙏 Gawen Arab @ Airbuds)

Out Of Context

🤭 LLM rivalry thread - Humorous Reddit thread showed Gemini’s response after being shown what ChatGPT said about its abilities, sparking community amusement. (🙏 Pierre Chapuis)

Job Board

🇳🇱 Founding Product Engineer at Moyai - Amsterdam-based company seeking a founding product engineer to join its agent reliability engineering platform. Moyai helps agent builders monitor observability logs for anomalies and fix failing agents in production, positioning itself as the Sentry/Incident.io for AI agents. On-site position. (🙏 Robert Hommes)

🇳🇱 Founding AI Engineer at Moyai - Amsterdam-based Moyai is also seeking a founding AI engineer for its agent reliability engineering platform. On-site position. (🙏 Robert Hommes)

🌍 CTO/Head of Engineering at MAGE-X - MAGE-X is seeking a CTO/Head of Engineering to define an HDS-compliant architecture, lead AI and data strategy, and handle regulatory requirements (MDR, HDS, GDPR). The role involves building the engineering team for a platform turning unstructured medical records into actionable data for clinical research. Based in Marseille (hybrid) or fully remote, with equity.

New Members

🇫🇷 Ihab Bendidi (Recursion) - Postdoctoral Researcher at Recursion Pharmaceuticals working on foundational models and multimodal deep learning for biological modalities. With 7 years as a developer and 5 as a researcher, focuses on imaging and gene expression data. (🙏 Ihab Bendidi)

🇳🇱 Anthony Diaz (Orq) - Welcomed to the community on December 13. (🙏 Anthony Diaz)

🇬🇧 Carlos Musoles (Kalavai) - Founder and CEO at Kalavai, giving AI teams GPU superpowers for affordable, flexible AI workflows. (🙏 Carlos Musoles)

🇫🇷 Alvaro Lamarche Toloza (Mago) - Co-founder & CEO at Mago, building AI-powered video transformation and stylization. Worked in games, 3D animation and VFX for years, deep into image and video models and creative tech. (🙏 Alvaro Lamarche Toloza)
