Your Dose of Reg.exe, Week 19

OpenAI releases GPT-5.1 with better instruction following. World Labs debuts Marble, a 3D world model. Gene-editing tensions grow as startups push limits and the FDA reacts. "Phantom" data centers muddy US power grid forecasts.

Events

🇺🇸 San Francisco Engineering Night (November 18) - Dust is organizing an engineering night in San Francisco with Corey Ching from OpenAI and Shaheen Lavie-Rouse from ElevenLabs, alongside Jules Belveze from Dust.

🇺🇸 NeurIPS 2025 - Emmanuel Benazera will attend the event and would be happy to meet other members in a small gathering around San Diego or San Francisco.

🌍 UN B-Tech project collaboration opportunity - The UN Human Rights Office runs the B-Tech project, which provides policy-level guidance and peer-to-peer exchange, and is looking to connect with European AI companies for discussions. 👉 For more info, contact Anselme Trochu directly.

Autonomous Agents

🎛️ Arazzo specification for AI-ready APIs - OpenAPI (with a P!) is not enough for making APIs usable by AI agents. Arazzo enables clear, actionable workflows so agents and developers can understand and use APIs more effectively.
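To make the idea concrete, here is a minimal sketch of an Arazzo-style workflow, written as a Python dict that mirrors the YAML/JSON document. It also includes a small check that every `$steps.<id>.outputs.<name>` reference points to an output declared by an earlier step. The pet-store URL, the operation IDs, and the helper `check_step_references` are hypothetical. The field names follow our reading of the Arazzo 1.0 specification and should be checked against it.

```python
import re

# Hypothetical Arazzo 1.0-style document: two steps chained by a runtime expression.
WORKFLOW_DOC = {
    "arazzo": "1.0.0",
    "info": {"title": "Adopt a pet", "version": "1.0.0"},
    "sourceDescriptions": [
        # Hypothetical OpenAPI description that the operationIds below refer to.
        {"name": "petStore", "url": "https://example.com/openapi.yaml", "type": "openapi"}
    ],
    "workflows": [
        {
            "workflowId": "adoptPet",
            "steps": [
                {
                    "stepId": "findPet",
                    "operationId": "findPetsByStatus",
                    "parameters": [{"name": "status", "in": "query", "value": "available"}],
                    "successCriteria": [{"condition": "$statusCode == 200"}],
                    "outputs": {"petId": "$response.body#/0/id"},
                },
                {
                    "stepId": "placeOrder",
                    "operationId": "placeOrder",
                    # The second step consumes an output of the first one.
                    "requestBody": {"payload": {"petId": "$steps.findPet.outputs.petId"}},
                    "successCriteria": [{"condition": "$statusCode == 200"}],
                    "outputs": {"orderId": "$response.body#/id"},
                },
            ],
        }
    ],
}

STEP_REF = re.compile(r"\$steps\.([A-Za-z0-9_\-]+)\.outputs\.([A-Za-z0-9_\-]+)")


def _strings(node):
    """Yield every string value nested anywhere inside dicts and lists."""
    if isinstance(node, str):
        yield node
    elif isinstance(node, dict):
        for value in node.values():
            yield from _strings(value)
    elif isinstance(node, list):
        for item in node:
            yield from _strings(item)


def check_step_references(doc):
    """Return problems: step-output references that no earlier step declares."""
    problems = []
    for workflow in doc["workflows"]:
        declared = {}  # stepId -> output names declared by steps seen so far
        for step in workflow["steps"]:
            for text in _strings(step):
                for step_id, output in STEP_REF.findall(text):
                    if output not in declared.get(step_id, set()):
                        problems.append(f"{step['stepId']}: unresolved $steps.{step_id}.outputs.{output}")
            declared[step["stepId"]] = set(step.get("outputs", {}))
    return problems


print(check_step_references(WORKFLOW_DOC))  # []
```

The point of the explicit step graph is that an agent can follow the chain of operations and data hand-offs directly instead of inferring it from isolated endpoint descriptions.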

🤖 Kaggle’s Introduction to Agents whitepaper - Kaggle published a comprehensive guide on AI agents, including an interesting mention of Level 4: self-evolving systems. (🙏 Anselme Trochu)

Biotech, Health, and Chemistry

🧬 Genetic engineering ethics discussion - A recent WSJ article mentions a wave of Silicon Valley–backed startups pushing toward genetically engineered embryos despite U.S. bans and major ethical concerns. These companies aim to prevent hereditary diseases and, in some cases, enhance traits such as intelligence, height or disease resistance, while critics warn of unpredictable science, eugenics risks and a lack of regulatory oversight. The article states: “One plan Armstrong floated, according to people he has talked to, was for a venture to work in secret and reveal a healthy genetically engineered baby before the scientific and medical establishment had a chance to object—a leap meant to shock the world into acceptance.”

A community member noted that this pattern mirrors generative AI: “despite laws against copyright violations, actors can quietly break the rules, gain power, and later pay their way out of trouble, as illustrated by the Anthropic book-piracy lawsuit. Current laws are effectively useless in this dynamic. Either we accept that reality, or we update regulations so penalties massively outweigh the benefits and genuinely deter this behavior.” (🙏 Fabien Niel, Pierre Chapuis, Jeremie Kalfon)

🔬 FDA’s new pathway for custom gene editing - The FDA unveiled the “plausible mechanism pathway” to accelerate bespoke gene-editing therapies, potentially triggering significant shifts in personalized medicine regulation. (🙏 Felix Raimundo)

Computer Vision

🙌 Photoroom open-sources text-to-image model - Photoroom released PRX, its text-to-image model, and detailed the development process behind it. (🙏 Emmanuel Benazera @ Jolibrain)

PRX is fully open-source with accessible code and weights.

The team shares both successes and failures to improve transparency.

Several model variants (base, SFT, distilled) and image sizes (256, 512, 1024px) are released.

They publish a research series detailing experiments, benchmarks, and lessons learned.

🌎 World Labs releases Marble multimodal world model - World Labs launched Marble, their frontier multimodal world model designed for spatial intelligence and 3D world reconstruction, generation, and simulation. (🙏 Kevin Kuipers @ Galion.exe)

The model generates and edits 3D worlds with fine-grained control, and exports to Gaussian splats, meshes, or video.

It supports simple prompts for quick creation, and multi-image or video prompts for precise, angle-specific control.

Users can edit objects, change visuals, or restructure scenes interactively in 2D and 3D, with Chisel providing experimental 3D sculpting that separates structure from style.

Worlds can be expanded or composed into larger environments and exported for use in external applications or enhanced videos.

Language Models

🥳 GPT-5.1 released with improved instruction following - OpenAI launched GPT-5.1 with a focus on conversational tone and personalization rather than pure benchmark scores, including better adherence to formatting preferences such as avoiding the infamous em-dashes. Community feedback is still limited, although early reactions praise its coding capabilities. (🙏 Enrico Piovano @ Goji)

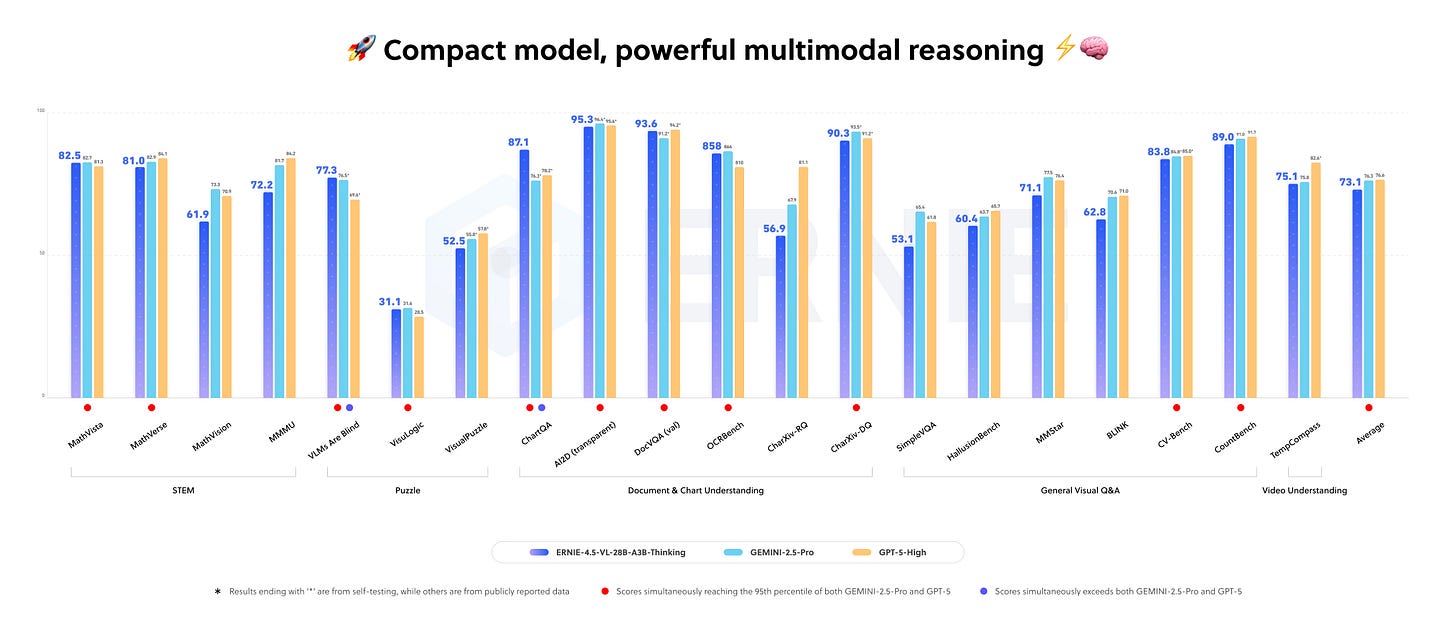

🇨🇳 ERNIE-4.5-VL-28B-A3B-Thinking model - Baidu released a new 28B-parameter vision-language model (VLM) with thinking capabilities, although running it requires 80GB of GPU memory.

Only 3B parameters are activated per token (it is a mixture-of-experts model), which keeps inference efficient while retaining strong performance.

The model excels at visual grounding, multi-step reasoning, and tool use.

Fine-tuning is supported, and commercial use is allowed under the Apache 2.0 license.

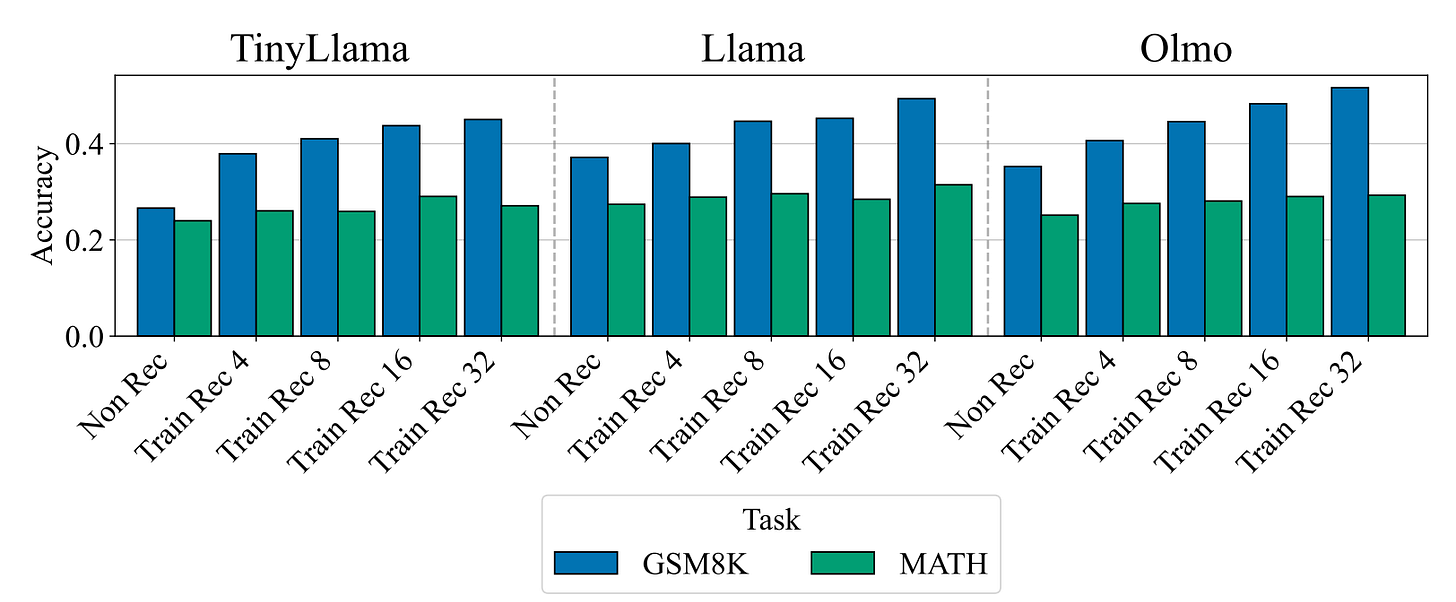
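A back-of-the-envelope calculation helps reconcile the 80GB requirement with the small active parameter count. In a mixture-of-experts model, every expert must sit in GPU memory, but each token only passes through the routed subset. The sketch assumes bf16 weights (2 bytes per parameter) and the common rule of thumb of roughly 2 FLOPs per active parameter per token in a forward pass. It ignores the KV cache, activations, and how the 28B splits between the vision and language parts.

```python
BYTES_PER_PARAM_BF16 = 2  # assumption: weights stored in bfloat16

TOTAL_PARAMS = 28e9   # all experts must be resident in GPU memory
ACTIVE_PARAMS = 3e9   # parameters actually used for each token


def weight_memory_gib(num_params, bytes_per_param=BYTES_PER_PARAM_BF16):
    """Memory needed just to hold the weights, in GiB (2**30 bytes)."""
    return num_params * bytes_per_param / 2**30


def forward_flops_per_token(active_params):
    """Rule of thumb: about 2 FLOPs (one multiply, one add) per active parameter."""
    return 2 * active_params


print(f"weights: {weight_memory_gib(TOTAL_PARAMS):.1f} GiB")                # weights: 52.2 GiB
print(f"active share: {ACTIVE_PARAMS / TOTAL_PARAMS:.1%}")                  # active share: 10.7%
print(f"forward pass: {forward_flops_per_token(ACTIVE_PARAMS) / 1e9:.0f} GFLOPs/token")  # forward pass: 6 GFLOPs/token
```

Under these assumptions, the remaining headroom up to 80GB would presumably go to the KV cache, activations, and framework overhead, all of which grow with context length and batch size.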

⚙️ Retrofitted Recurrence for deeper thinking - Researchers showed how to convert existing pretrained models into depth-recurrent models, which loop a block of layers to spend more computation on harder problems. The conversion needs only limited additional training rather than pretraining from scratch.

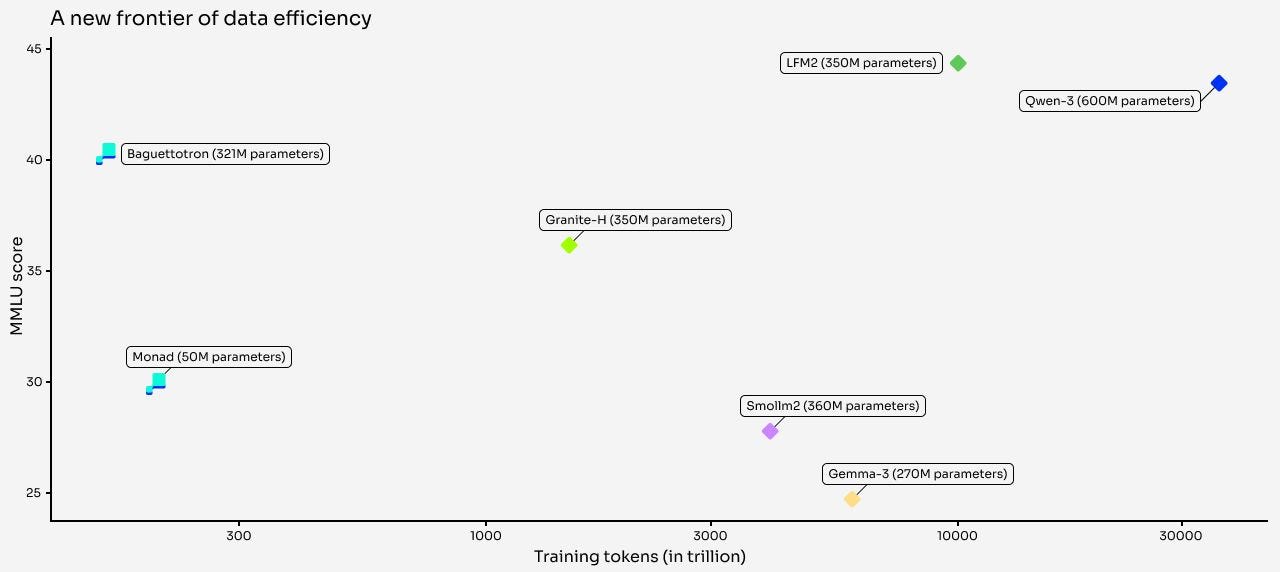
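The general idea of depth recurrence can be sketched as follows. A pretrained stack is split into a prelude, a core block that is looped, and a coda, so the number of loop iterations becomes a test-time knob for how much compute to spend. This is a toy illustration that assumes such a split, with plain Python functions standing in for transformer layers. It is not the paper's code, and how the input is re-injected at each iteration (here, simple addition) is our assumption.

```python
from typing import Callable, List

Layer = Callable[[List[float]], List[float]]


def run_stack(layers: List[Layer], x: List[float]) -> List[float]:
    """Apply layers in order, like an ordinary (non-recurrent) model."""
    for layer in layers:
        x = layer(x)
    return x


def run_retrofitted(prelude: List[Layer], core: List[Layer], coda: List[Layer],
                    x: List[float], num_iterations: int) -> List[float]:
    """Run the prelude once, loop the core num_iterations times, then run the coda once.

    Assumption: the prelude output is re-injected by addition before each loop,
    so the core always sees the input as well as its own previous state.
    """
    embedded = run_stack(prelude, x)
    state = [0.0] * len(embedded)  # hypothetical zero initial state
    for _ in range(num_iterations):
        state = run_stack(core, [s + e for s, e in zip(state, embedded)])
    return run_stack(coda, state)


# Toy "layers" standing in for a pretrained 6-layer model, split 2 + 2 + 2.
def scale(f: float) -> Layer:
    return lambda v: [f * a for a in v]


def shift(b: float) -> Layer:
    return lambda v: [a + b for a in v]


layers = [shift(1.0), scale(2.0), scale(0.5), shift(0.1), scale(1.0), shift(-1.0)]
prelude, core, coda = layers[:2], layers[2:4], layers[4:]

for r in (1, 2, 4):
    print(r, run_retrofitted(prelude, core, coda, [1.0], num_iterations=r))
```

With one iteration and a zero initial state, this sketch reduces exactly to the original stack. That is one way to see why retrofitting can start from pretrained weights and then learn to benefit from extra iterations.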

🥖 Pleias releases SYNTH reasoning dataset - Pleias launched SYNTH, a fully synthetic reasoning dataset, with two SOTA models trained exclusively on it. Their 321M model achieved best-in-class performance across MMLU, GSM8K, and HotPotQA using 10-50x less data than comparable models.

Models trained on SYNTH achieve state-of-the-art results with far less data and compute.

SYNTH uses Wikipedia vital articles as seeds, expanded into diverse tasks and languages.

Small models like Baguettotron and Monad show early emergence of reasoning skills due to dense synthetic training.

Synthetic context engineering is key, shaping data for more effective model training.

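⚙️ Meta’s RL scaling research - Meta published “The Art of Scaling Reinforcement Learning Compute for LLMs”, which fits sigmoidal compute-performance curves to predict how RL training of large language models scales with compute, and proposes a best-practice recipe called ScaleRL. (🙏 Sophie Monnier)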
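As we understand the paper, its central tool is a sigmoidal curve that is fitted to (compute, reward) points from smaller runs and then extrapolated to larger budgets. It takes the form R(C) = R0 + (A - R0) / (1 + (C_mid / C)^B), where A is the asymptotic reward, B controls how quickly a recipe approaches it, and C_mid is the compute at which half of the total gain is reached. The sketch below only evaluates that curve. The parameter values are made up for illustration, and a real analysis would fit them to measured runs (for example, by least squares).

```python
def sigmoidal_rl_curve(compute: float, r0: float, a: float, b: float, c_mid: float) -> float:
    """Expected reward after `compute` units of RL compute.

    r0: reward before RL, a: asymptotic reward, b: scaling exponent (steepness),
    c_mid: compute at which half of the gain (a - r0) has been achieved.
    """
    if compute <= 0:
        return r0
    return r0 + (a - r0) / (1.0 + (c_mid / compute) ** b)


# Hypothetical parameters for two recipes: same ceiling, different efficiency.
recipe_fast = dict(r0=0.30, a=0.60, b=1.5, c_mid=1_000.0)
recipe_slow = dict(r0=0.30, a=0.60, b=0.8, c_mid=4_000.0)

for c in (100.0, 1_000.0, 10_000.0, 100_000.0):
    print(f"C={c:>9,.0f}  fast={sigmoidal_rl_curve(c, **recipe_fast):.3f}  "
          f"slow={sigmoidal_rl_curve(c, **recipe_slow):.3f}")
```

Separating the ceiling (A) from the efficiency terms (B, C_mid) is what makes the comparison useful. Two recipes can look different at small budgets yet converge to the same asymptote, or the reverse.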

🧠 Google’s Nested Learning paradigm - Google Research introduced Nested Learning, a new ML paradigm for continual learning addressing catastrophic forgetting in neural networks. (🙏 Gabriel Olympie)

“We believe the Nested Learning paradigm offers a robust foundation for closing the gap between the limited, forgetting nature of current LLMs and the remarkable continual learning abilities of the human brain.”

Nested Learning treats ML models as sets of nested optimization problems, not just one big process.

Architecture and optimization are unified as multi-level processes.

Memory is updated at multiple frequencies, forming what the authors call a continuum memory system.

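Experiments show that Hope, a proof-of-concept self-modifying architecture built on these ideas, outperforms standard transformers and modern recurrent models on language modeling and reasoning tasks.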
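To illustrate what memory updates at multiple frequencies can mean in practice, here is a toy sketch. It is not Google's Hope architecture. Parameters are grouped into levels, and each level accumulates gradients at every step but only applies them every `period` steps. Fast levels therefore track recent data, while slow levels change rarely. The level names, periods, and plain SGD rule are assumptions for illustration.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class Level:
    """One memory level: a parameter vector updated every `period` steps."""
    name: str
    period: int
    lr: float
    params: List[float]
    grad_sum: List[float] = field(init=False, default_factory=list)
    updates: int = field(init=False, default=0)

    def __post_init__(self) -> None:
        self.grad_sum = [0.0] * len(self.params)

    def accumulate(self, grad: List[float]) -> None:
        self.grad_sum = [acc + g for acc, g in zip(self.grad_sum, grad)]

    def maybe_update(self, step: int) -> None:
        # Apply the averaged accumulated gradient only on this level's schedule.
        if step % self.period != 0:
            return
        self.params = [p - self.lr * g / self.period
                       for p, g in zip(self.params, self.grad_sum)]
        self.grad_sum = [0.0] * len(self.params)
        self.updates += 1


def train(levels: List[Level], grads_per_step: List[List[float]]) -> None:
    """Feed the same toy gradient signal to every level; each updates at its own rate."""
    for step, grad in enumerate(grads_per_step, start=1):
        for level in levels:
            level.accumulate(grad)
            level.maybe_update(step)


levels = [
    Level("fast", period=1, lr=0.1, params=[0.0, 0.0]),
    Level("medium", period=4, lr=0.1, params=[0.0, 0.0]),
    Level("slow", period=16, lr=0.1, params=[0.0, 0.0]),
]
train(levels, [[1.0, -1.0]] * 32)
for level in levels:
    print(level.name, level.updates, level.params)
```

After 32 steps, the fast level has updated 32 times, while the slow level has updated only twice. That is the kind of separation of time scales the paradigm relies on to avoid overwriting older knowledge.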

🧠 Provable, Scalable Self-Supervised Learning Without Heuristics - New paper from LeCun at FAIR on understanding latent space regularization and self-supervised learning. (🙏 Gabriel Olympie)

LeJEPA is a new approach to self-supervised learning that drops common heuristics of existing methods, such as stop-gradients and teacher-student networks.

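It regularizes embeddings toward an isotropic Gaussian distribution, which the authors argue is the optimal target, using a regularizer called SIGReg. This keeps learned features balanced and diverse and improves model robustness and stability.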

The method is simple to implement, scales efficiently, and doesn’t need complicated architecture tweaks or large resource requirements.
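The core of the regularizer can be sketched in a few lines. As we understand the paper, SIGReg projects a batch of embeddings onto random directions. For each 1-D projection, it measures how far the empirical characteristic function is from that of a standard Gaussian (an Epps-Pulley-style statistic). Averaging over directions gives a loss that is small for isotropic Gaussian embeddings and large for collapsed ones. The sketch uses pure Python for readability. The number of directions, the integration grid, and the Gaussian weighting are illustrative choices, not the paper's settings.

```python
import math
import random
from typing import List, Sequence


def random_unit_vector(dim: int, rng: random.Random) -> List[float]:
    v = [rng.gauss(0.0, 1.0) for _ in range(dim)]
    norm = math.sqrt(sum(x * x for x in v))
    return [x / norm for x in v]


def epps_pulley_1d(samples: Sequence[float], t_max: float = 3.0, n_points: int = 31) -> float:
    """Weighted squared distance between the empirical characteristic function
    of `samples` and exp(-t^2 / 2), the characteristic function of N(0, 1)."""
    n = len(samples)
    dt = 2 * t_max / (n_points - 1)
    total = 0.0
    for k in range(n_points):
        t = -t_max + k * dt
        re = sum(math.cos(t * x) for x in samples) / n
        im = sum(math.sin(t * x) for x in samples) / n
        target = math.exp(-t * t / 2)
        weight = math.exp(-t * t / 2)  # illustrative weighting of frequencies
        total += weight * ((re - target) ** 2 + im ** 2) * dt
    return total


def sigreg_loss(embeddings: Sequence[Sequence[float]], num_directions: int, seed: int = 0) -> float:
    """Average the 1-D Gaussianity statistic over random projections ("sketching")."""
    rng = random.Random(seed)
    dim = len(embeddings[0])
    losses = []
    for _ in range(num_directions):
        u = random_unit_vector(dim, rng)
        projected = [sum(a * b for a, b in zip(e, u)) for e in embeddings]
        losses.append(epps_pulley_1d(projected))
    return sum(losses) / num_directions


rng = random.Random(42)
gaussian = [[rng.gauss(0.0, 1.0) for _ in range(8)] for _ in range(512)]
collapsed = [[0.5] * 8 for _ in range(512)]  # every input mapped to the same point
print(sigreg_loss(gaussian, num_directions=16), sigreg_loss(collapsed, num_directions=16))
```

Testing 1-D projections instead of the full high-dimensional distribution is what keeps the cost linear in batch size and embedding dimension.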

👷‍♂️ Structured data extraction tools discussion - Community discussion on the current SoTA for structured data extraction, with Mistral’s Document AI praised as one of the best. Other tools mentioned include .txt, Docling, Azure OCR, and Reducto. (🙏 Youssef Tharwat, Anselme Trochu, Kevin Kuipers)

MLOps

📊 Weights & Biases terminal UI - W&B introduced LEET (Lightweight Experiment Exploration Tool), a beautiful terminal interface for tracking ML training runs with real-time stats, metrics, and system health visualization.

Infrastructure

👻 “Phantom” data centers affecting US power forecasts - Analysis of how developers overstate energy needs and keep non-viable data center projects alive, muddying forecasts for US power requirements. (🙏 Margaux Wehr)

Developers are overstating data center energy needs with duplicate or non-viable projects.

Utilities and regulators are tightening rules to eliminate “phantom” data centers.

Inflated forecasts risk overbuilding and higher costs for consumers.

Forecasting inaccuracies may still lead to a mismatch between built infrastructure and real data center demand.

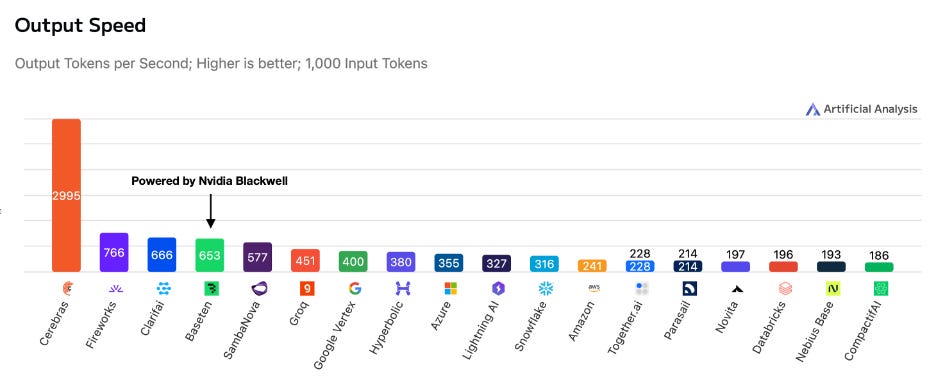
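Here is a toy illustration of the mechanism. When the same project files requests with several utilities, or requests linger for projects that will never be built, naively summing requests overstates demand. The project names, megawatt figures, viability flag, and dedupe-by-project rule below are hypothetical. Real utilities apply their own screening criteria.

```python
from typing import Dict, List, NamedTuple


class Request(NamedTuple):
    project: str      # hypothetical project identifier shared across filings
    utility: str
    megawatts: float
    viable: bool      # assumption: e.g. has land, financing, and a signed customer


def naive_forecast(requests: List[Request]) -> float:
    """Sum every request, as if each filing were a distinct, real project."""
    return sum(r.megawatts for r in requests)


def screened_forecast(requests: List[Request]) -> float:
    """Count each viable project once, keeping its largest request."""
    per_project: Dict[str, float] = {}
    for r in requests:
        if r.viable:
            per_project[r.project] = max(per_project.get(r.project, 0.0), r.megawatts)
    return sum(per_project.values())


requests = [
    Request("campus-A", "utility-1", 500.0, True),
    Request("campus-A", "utility-2", 500.0, True),   # same project shopped to a second utility
    Request("campus-A", "utility-3", 500.0, True),
    Request("campus-B", "utility-1", 300.0, False),  # speculative, unlikely to be built
    Request("campus-C", "utility-2", 200.0, True),
]
print(naive_forecast(requests), screened_forecast(requests))  # 2000.0 700.0
```

In this made-up example, the naive sum is almost three times the screened figure. Each utility only sees its own queue, so this kind of duplication is hard to spot without cross-utility data.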

📊 Cerebras claims an edge over Nvidia Blackwell - Cerebras published its own benchmarks showing its inference performance beating Nvidia’s Blackwell GPUs on AI workloads. (🙏 Sophie Monnier @ X-IA)

Programming

🤖 Astro framework gaining adoption - Community discussion on using Astro for web development, with positive feedback on its developer experience compared to template services like Webflow/Framer. Featured stack includes Astro + SolidJS + UnoCSS. (🙏 Robert Hommes, Benjamin Trom, Youssef Tharwat)

🧑‍💻 Crush TUI coding agent for Neovim - Charm released a terminal-based coding agent that works within Neovim, offering an alternative to GUI-based coding assistants.

Also:

🤖 JSON Agents specification - New universal JSON specification for describing AI agents in a portable, schema-driven format with standardized capabilities and runtime descriptions. (🙏 Anselme Trochu)

🛠️ Claude reverse-engineering tool - A Claude skill that lets Claude reverse-engineer itself using mitmproxy to inspect system prompts and tool definitions. (🙏 Charly Poly @ Inngest)
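To illustrate the mechanism, here is the kind of mitmproxy script such a tool could rely on. It is not the skill's actual code. It assumes the client sends requests to the Anthropic Messages API at `api.anthropic.com/v1/messages` through the proxy and trusts mitmproxy's CA certificate. The script simply logs the `system` field and the names of the `tools` in each request body. It uses mitmproxy's script convention of a module-level `request(flow)` hook and would run with `mitmdump -s log_prompts.py`, where the file name is hypothetical.

```python
import json
from typing import Any, Dict, List, Optional


def summarize_messages_request(body: bytes) -> Optional[Dict[str, Any]]:
    """Extract the model, system prompt, and tool names from a Messages API request body."""
    try:
        payload = json.loads(body)
    except (ValueError, UnicodeDecodeError):
        return None
    if not isinstance(payload, dict):
        return None
    system = payload.get("system")
    # `system` may be a plain string or a list of content blocks with a "text" field.
    if isinstance(system, list):
        system = "\n".join(block.get("text", "") for block in system if isinstance(block, dict))
    tools: List[str] = [t.get("name", "?") for t in payload.get("tools", []) if isinstance(t, dict)]
    return {"model": payload.get("model"), "system": system, "tools": tools}


def request(flow) -> None:
    """mitmproxy event hook, called once per intercepted client request."""
    if flow.request.pretty_host != "api.anthropic.com":
        return
    if not flow.request.path.startswith("/v1/messages"):
        return
    summary = summarize_messages_request(flow.request.content or b"")
    if summary is not None:
        print(json.dumps(summary, indent=2)[:2000])  # truncate very long prompts
```

Keeping the parsing in a plain function makes it testable without running a proxy.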

Community

🍕 In our last TechLunch with Arnaud Porterie (Vibe.co), Enrico Piovano (Goji), Frédéric Barthelet (Alpic), Louis Choquel (Pipelex), Pierre Chapuis (Finegrain), Stephan von Perger (Anthropic), Suraj Patil (Black Forest Labs), Thomas Payet (Meilisearch), Kemal Toprak Uçar (Numberly) and Xavier Grand (Algolia), we discussed the challenges of hybrid search, the move toward running models directly in the browser, and the strengths and limits of diffusion-based coding copilots like Mercury. We also examined why fully AI-generated feature films remain out of reach, with consistency, control and audience acceptance still being major obstacles.

New Member

🇫🇷 Manfred Touron (Gno.land) - CTO at Gno.land, a decentralized smart contract platform for social coordination. Previously co-founded Scaleway (Cloud) and Berty (P2P messaging). Has contributed to 900+ open-source projects with a 3,000+ day GitHub streak. Special power: shipping prototypes before most people schedule their first sync.