Your Dose of Reg.exe, Week 12

Reg.exe is a global, closed community of 260+ engineers, founders, and researchers in AI—from San Francisco to Tokyo. Each week we share discussion highlights in a short newsletter.

👉 What is Reg.exe and how to join.

Events

🇧🇪 FOSDEM 2026 - Call for participation published for Europe’s largest Open Source conference, taking place in Brussels on January 31st & February 1st. Submissions now open for devrooms and main track talks. (🙏 Pierre Chapuis)

🇬🇧 Engineering Night #7 - Jules and Vincent will speak alongside Anthropic team members on October 7th, focusing on MCP technical discussions. 🎭 Event registration (🙏 Jules Belveze @ Dust)

🌎 5G security vulnerabilities - Top 5G security vulnerabilities based on real-world penetration testing insights from P1 Security (🙏 Philippe Langlois).

📆 October 22nd — Register for webinar

General

🛸 SOTA Initiative expansion - Reg.exe will be included in the SOTA umbrella initiative, with weekly newsletter content now published at We <3 SOTA. The platform brings together Tech Founders, Engineers, Researchers and VCs passionate about AI innovation. (🙏 Kevin Kuipers @ SOTA / Reg.exe)

Ask for Help

🧠 Generative Episodic Memory project - Louis is building a neuroscience-inspired memory system that stores compressed latent traces instead of full data, using a small LLM to reconstruct scenes. The system includes frozen embeddings, Residual LFQ compression, and SmolLM2 with RL training. Seeking collaborators with expertise in rate-distortion theory and RLHF/PPO.

👉 Contact Louis Manhes

👩‍💻 CtrlG platform - Paco Villetard’s new platform provides high-quality code training data for frontier reasoning, featuring a global community of over 10,000 software engineers creating carefully written and annotated code datasets.

🤖 Multi-agent orchestration discussion - Gilles seeks perspectives on guided multi-agent system orchestration, noting the limitations of CrewAI’s low-level approach and its lack of expressive flow modeling for complex processes that need constructs such as while loops.

👉 Contact Gilles Seghaier

Achievements

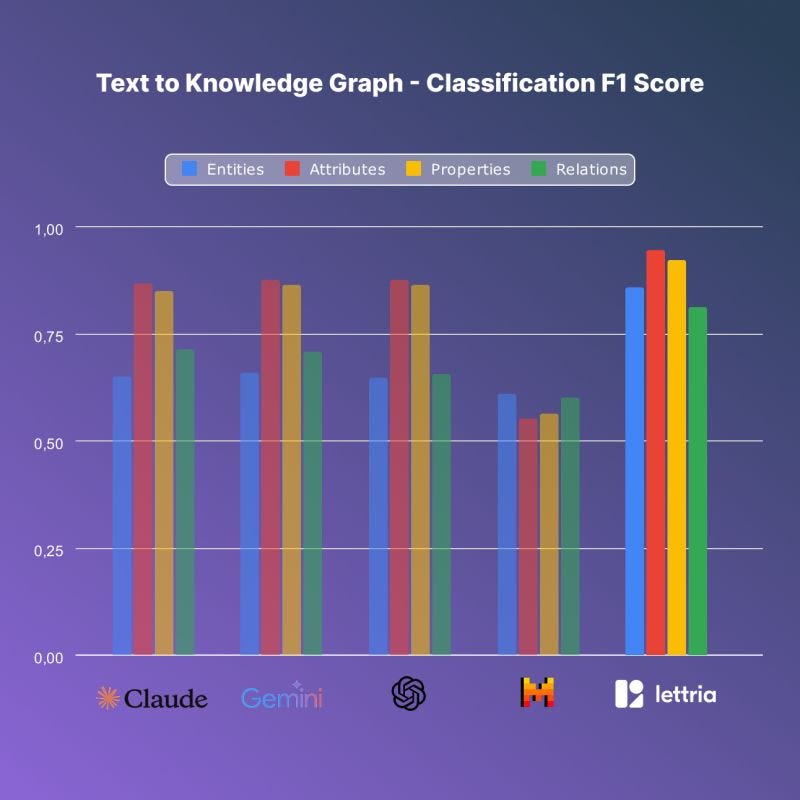

🔥 Lettria Perseus model - Fine-tuned Text2Graph model that outperforms advanced proprietary LLMs by over 30% on Knowledge Graph construction tasks. The smallest version could run on mobile devices while surpassing larger models. (🙏 Charles Borderie @ Lettria)

Perseus outperforms leading LLMs: 30% better accuracy, 400x faster speed for attribute extraction.

Schema-valid outputs: Delivers up to 100% reliability with minimal manual correction needs.

Open-source fine-tuning wins: Smaller, targeted models beat big proprietary ones on domain tasks.

Real-time, private: Ultra-low latency, self-hosting option keeps sensitive data secure.

🏆 Pleias 1.0 launch - Ivan’s team released the first family of language models trained on fully open data, documenting 2 trillion tokens under permissive licenses. The scientific article details the complete behind-the-scenes process.

The models use a new, multilingual dataset called Common Corpus, built from literature, legal texts, scientific papers, Wikipedia, and open-source code.

Three model sizes were trained (350M, 1.2B, and 3B parameters) using modern architectures and efficient open-source tools for low carbon impact.

Despite their modest size, the RAG versions of Pleias 1.0 outperform much larger models on many RAG and multilingual benchmarks.

Pleias 1.0 excels in multilingual performance, supporting at least eight European languages and maintaining language consistency better than competitors.

All models, code, and data are openly released, setting a reproducibility and compliance standard for transparent, trustworthy AI research.

🩻 Raidium: Curia radiological model - Raidium released their state-of-the-art radiological foundation model, trained on 130TB of CT scans and MRIs. The model reaches radiologist-level performance on 19 tasks and is available to the research community. The model performs well on semantic segmentation tasks and could potentially handle fetal segmentation applications. (🙏 Pierre Manceron)

Curia generalizes across both CT and MRI modalities, even without paired training.

Outperforms BiomedCLIP and MedImageInsight in organ classification accuracy and modality adaptation.

Strong few-shot learning: high accuracy with minimal labeled examples.

Delivers better survival predictions and image registration than standard approaches, aiding clinical planning.

Integration into workflows via a promptable PACS viewer, with ongoing expansion to include electronic health records and natural language support.

A lightweight Curia-B model and 19-task benchmark are made public for broader research use.

Knowledge

Artem Kirsanov YouTube channel - A goldmine for computational neuroscience and bio-inspired architectures, with content from a Harvard neuroscience PhD student exploring machine learning topics. (🙏 Louis Manhes @ Genario)

AI Safety and Ethics

DeepMind safety framework - Version 3.0 of the Frontier Safety Framework has been released, exploring the perils of “misaligned” AI and providing updated guidelines to prevent problematic AI behavior. (🙏 Kevin Kuipers)

DeepMind warns misaligned AI could be used to create malware or biological threats.

Protecting AI model weights is crucial to prevent disabling safety measures.

Manipulative AI is possible, but for now the risk develops slowly and remains manageable.

Future advanced AI may become uncontrollable, with no clear solution yet.

Computer Vision

👁️ Qwen 3 VL release - New vision-language model launched with enhanced capabilities for image and video understanding tasks. (🙏 Pierre Chapuis)

Qwen3-VL excels at vision-language tasks, beating Gemini 2.5 and GPT-5 on key benchmarks.

Acts as a real agent: operates GUIs and automates tasks visually.

Handles 256K+ tokens, OCR in 32 languages, and long documents.

Top performance in code generation and STEM/math reasoning.

Advanced spatial and document understanding, including 3D localization.

Cyber

Claude browser safety warnings - A pretty scary disclaimer in the Claude Chrome extension that outlines the limitations of its safeguards. (🙏 Kevin Kuipers)

Datastacks

Kaggle Grandmasters techniques - NVIDIA’s comprehensive guide covering 7 battle-tested modeling techniques for tabular data, emphasizing extensive exploration, diverse baselines, feature engineering, and pseudo-labeling strategies for competitive machine learning. (🙏 Kevin Kuipers, Kemal Toprak Uçar)

More iteration → more patterns, faster detection of failure, drift, or overfitting.

Use k-fold CV; without a trustworthy validation score, you’re flying blind.

Missing values and correlations are table stakes—dig deeper for hidden signals.

Test linear models, GBDTs, and small nets side by side for context.

Hill climbing and stacking often outperform any single model.

Scaling feature creation can surface hidden signals models can’t find alone.

Turn unlabeled data into signal; soft labels add robustness.

Extra runs of trusted models boost robustness and squeeze out performance.
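To make the validation point concrete, here is a minimal, dependency-free sketch of k-fold cross-validation. It is not taken from NVIDIA’s guide: the helper names (`k_fold_indices`, `cross_val_mae`), the mean-predictor baseline, and the toy data are all assumptions chosen for illustration.

```python
import random
from statistics import mean


def k_fold_indices(n_samples, k=5, seed=0):
    """Yield (train, valid) index lists; each sample is validated exactly once."""
    indices = list(range(n_samples))
    random.Random(seed).shuffle(indices)  # fixed seed keeps the folds reproducible
    folds = [indices[i::k] for i in range(k)]  # k near-equal slices
    for i, valid in enumerate(folds):
        train = [idx for j, fold in enumerate(folds) if j != i for idx in fold]
        yield train, valid


def cross_val_mae(xs, ys, fit, predict, k=5, seed=0):
    """Return (mean MAE, per-fold MAEs) for any fit/predict pair."""
    fold_scores = []
    for train, valid in k_fold_indices(len(xs), k, seed):
        model = fit([xs[i] for i in train], [ys[i] for i in train])
        fold_scores.append(mean(abs(predict(model, xs[i]) - ys[i]) for i in valid))
    return mean(fold_scores), fold_scores


# Hypothetical baseline: always predict the training-set mean.
def fit_mean(xs, ys):
    return mean(ys)


def predict_mean(model, x):
    return model


if __name__ == "__main__":
    xs = list(range(20))
    ys = [2.0 * x + 1.0 for x in xs]  # toy data, for illustration only
    score, per_fold = cross_val_mae(xs, ys, fit_mean, predict_mean, k=5)
    print(f"CV MAE: {score:.3f}", [round(s, 3) for s in per_fold])
```

Swapping `fit_mean` and `predict_mean` for a linear model, a GBDT, or a small net gives the side-by-side baseline comparison mentioned above, with every candidate scored on the same folds.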

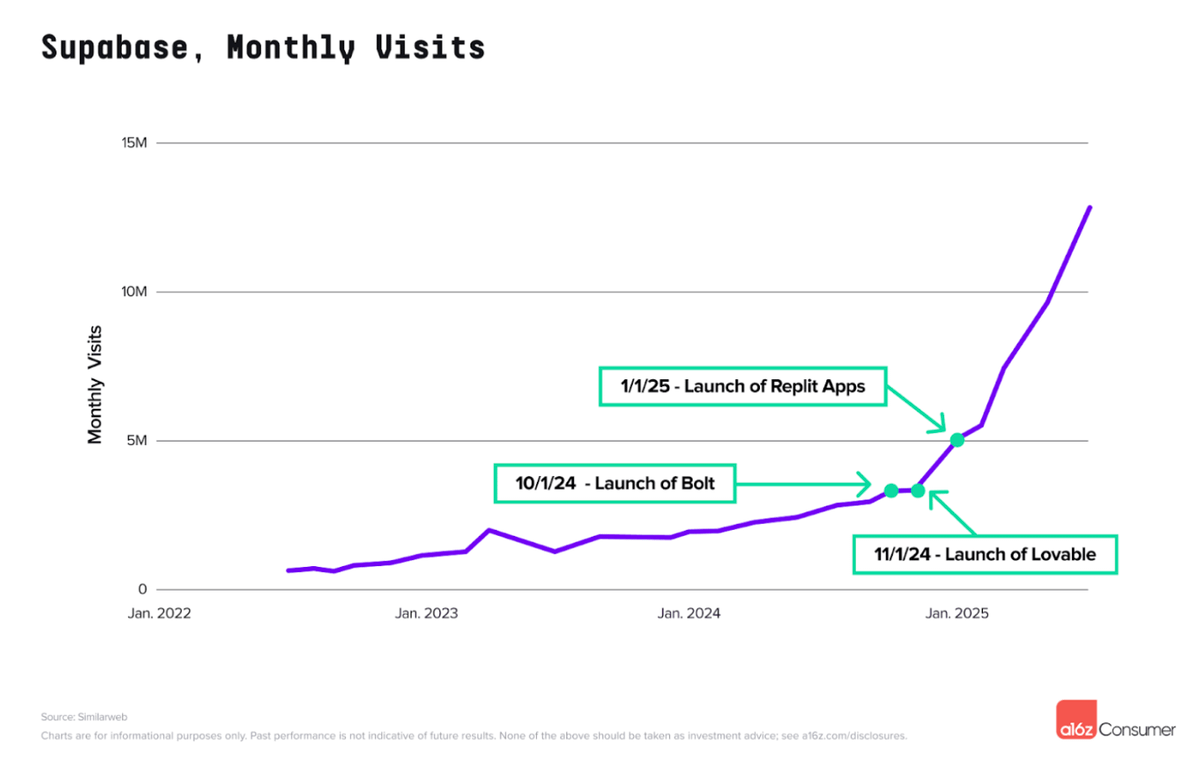

Supabase traffic surge - Explosion of Supabase usage due to “vibe coding” apps like Lovable and Replit recommending it as a backend database. Although Supabase has been around for 5 years, its traffic skyrocketed only with the rise of AI coding tools. (🙏 Dario Di Carlo @ Bricks.sh)

Infrastructure

httpjail project - New cross-platform tool for monitoring and restricting HTTP/HTTPS requests from processes using network isolation and transparent proxy interception.

Language Models

💪 OpenAI reinforcement fine-tuning analysis - TensorZero’s comprehensive evaluation showed RFT improved agentic coding tasks with just 2 training conversations but at 100-700x the cost of supervised fine-tuning. For data extraction, SFT achieved better results at 159x lower cost. (🙏 Kevin Kuipers)

At 100-700x the cost of supervised fine-tuning, we only observed RFT to deliver clear wins on agentic coding tasks. Data extraction performed better for less with SFT, and customer service actually got worse with RFT. The technology shows promise. It gives engineers flexibility in reward design through flexible grader configurations and the performance gains can be substantial when it works. But between the prohibitive costs, content moderation hurdles, and limited applicability, RFT is a difficult choice to justify.

Qwen3Guard models - New multilingual guardrail model series with three different sizes, claiming superior performance over other guard models for content safety. (🙏 Kemal Toprak Uçar)

Also:

Helix Parallelism research - New approach to sharding strategies for interactive multi-million-token LLM decoding, addressing bottlenecks in Feed-Forward Network weights and KV cache access.

DeepSeek-V3.1 on AWS - Model now available as a fully managed service in Amazon Bedrock: an open-weight model with hybrid thinking/non-thinking modes.

RL efficiency analysis - Toby Ord’s analysis of reinforcement learning inefficiency for frontier models, arguing that RL learns 1,000 to 1,000,000x less information per training hour.

Autonomous Agents

🎛️ Qodo AI’s open-aware - Deep codebase intelligence MCP leveraging a context engine, designed to act as an “Agentic Principal Engineer” for complex codebases. (🙏 Nnenna Ndukwe)

Robotics

VLA leaderboard - Hugging Face’s LeRobot team launched a new Vision-Language-Action model leaderboard with simulation evaluations on LIBERO benchmarks. (🙏 Harsi Singh Sandhawalia @ Yaak)

Gemini Robotics 1.5 - Google DeepMind released agentic capabilities enabling robots to use digital tools, search for information, and solve complex multi-step tasks like sorting objects based on local recycling guidelines. (🙏 Kevin Kuipers)

Also:

Wuji robotic hand - Chinese startup revealed advanced robotic hand with 20 degrees of freedom, 600-gram weight, and million-cycle durability, showing remarkable dexterity in demonstration videos.

Programming

🤖 Claude Code analysis - “How Claude Code works and why it matters” explores the tool’s architecture and how that should shape the way we use it. (🙏 Eldar Akhmetgaliyev)

🤖 GitHub Copilot CLI - Now in public preview, allowing developers to build, debug, and deploy with GitHub Copilot coding agent directly from the terminal. (🙏 Kevin Kuipers)

You can now use Copilot’s coding agent directly from your command line, without switching context.

Easily access repositories, issues, and pull requests via natural language with your GitHub account.

The CLI agent can build, edit, debug, and refactor code—planning and executing tasks for you.

Ships with GitHub’s MCP server and supports custom MCP servers to extend Copilot CLI’s abilities.

Every action is previewed before running—nothing executes without your approval.

Also:

Kimi’s OK Computer - Agent mode for building multi-page websites, mobile-first designs, interactive dashboards from data, and self-scoping project management.

HTMX Critique - Despite the article’s author having a bad experience, Pierre shared his positive take on using HTMX for non-SPA websites. (🙏 Pierre Chapuis)

CodeRabbit CLI - Yet another AI code review tool for the command line, with context-aware feedback, line-by-line suggestions, and real-time chat capabilities.

Out-Of-Context

Rabbit R1 evolution - Impressive new app creation feature where users can vocally describe an app and receive a custom application within minutes through precise conversational refinement. (🙏 Emmanuel Benazera)

🙌 New member

🇫🇷 Nils Layet (Koyeb) - Software Engineer at Koyeb, a cloud infrastructure provider. Based in France, he specializes in TypeScript, Node, React, and Tailwind. He can plug USB cables quite fast and enjoys climbing, electronic music production, craft beers, and video games.