Your Dose of Reg.exe, Week 11

AI x Oncology & Gene Editing in Paris, new ARC-AGI record, DeepSeek & Mistral retrospectives, open letter to Microsoft, IBM Granite-Docling, Anthropic agents, GPT-5 Codex, PostgreSQL 18 & more.

Reg.exe is a global closed community of 260+ engineers, founders, and researchers interested in AI innovation, from San Francisco to Tokyo. Each week, we share the highlights of our discussions in a newsletter. If you’d like to join, write to join@welovesota.com

Events

🇫🇷 AI x Oncology & Gene Editing event in Paris (🙏 Willy Braun @ Galion.exe)

🩻 AI × Oncology (7:00-7:45pm) showcasing Spotlight Medical and OneBioscience.

🧬 Gene Editing: Human vs Plant (8:00-8:45pm) featuring EPICS Biotechnology and BrinkTx pushing CRISPR frontiers.

📆 October 30 (7:00-9:30pm CET) 👉 Free registration available

Job Offers

.omics is building foundation models for plant biology, transforming genomic and multi-omics data into the next generation of tools for trait discovery and predictive breeding. Their goal is to develop crops that can better withstand pests, viruses, and climate stress, helping agriculture adapt to the challenges of a changing environment.

They have just opened 2 positions:

🔥🔥🔥 It’s an amazing project and team. Spread the word!

Knowledge

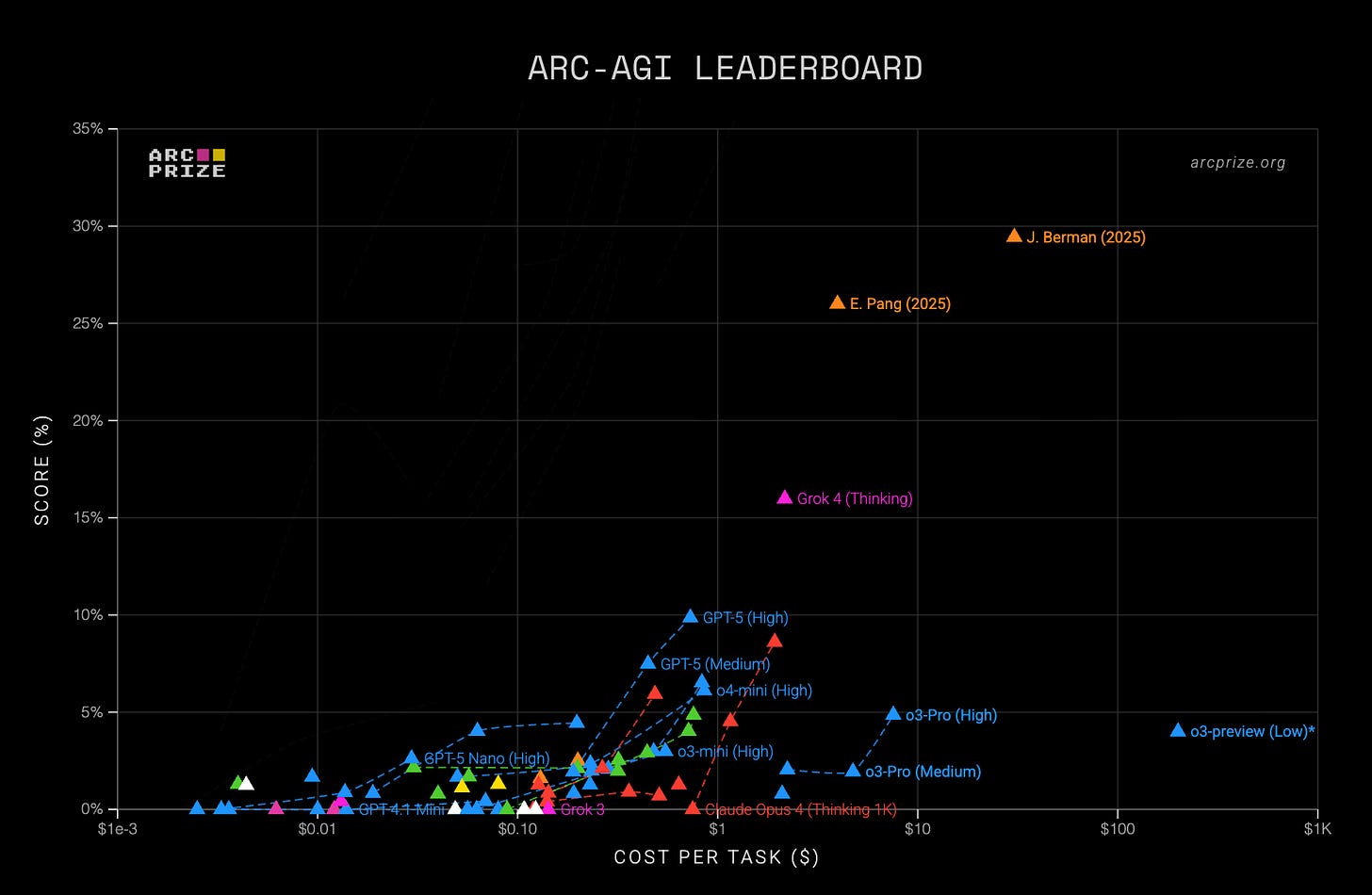

🤯 New SoTA on ARC-AGI achieved using Evolutionary Test-Time Compute with Multi-Agent Collaboration. Read the full analysis (🙏 Louis Manhes @ Genario)

ARC-AGI is a benchmark designed to evaluate general intelligence in AI by testing abstract pattern recognition and the ability to generalize to novel tasks.

The breakthrough came from using plain-English instructions with Grok-4 and multi-agent revision strategies (individual and pooled) rather than Python code.

Jeremy Berman has achieved 79.6% on ARC v1 at exceptionally low cost ($8.42/task) and set a new record of 29.4% on ARC v2, surpassing previous models by a significant margin.

Jeremy argues true AGI will come from embedding logical reasoning directly into the model’s training so that consistent, universal deduction becomes possible.
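To make the "individual and pooled revision" idea concrete, here is a minimal toy sketch of an evolutionary test-time loop. It is not Jeremy Berman's implementation: the task (a hidden colour-remapping rule), the tuple-based candidates, and the functions `score`, `revise_individual`, `revise_pooled`, and `evolve` are all assumptions made up for illustration, and the two revision functions are deterministic stand-ins for what would be LLM calls in the real system.

```python
from itertools import product

COLOURS = range(4)

# Toy stand-in for an ARC task: flattened input/output grids produced by a
# hidden colour-remapping rule (0->0, 1->2, 2->3, 3->1).
TRAIN_PAIRS = [
    ([0, 1, 2, 3, 1], [0, 2, 3, 1, 2]),
    ([3, 3, 0, 2], [1, 1, 0, 3]),
]
PERFECT = sum(len(grid_in) for grid_in, _ in TRAIN_PAIRS)


def score(candidate):
    """Count training cells the candidate rule reproduces (higher is better)."""
    return sum(
        candidate[x] == y
        for grid_in, grid_out in TRAIN_PAIRS
        for x, y in zip(grid_in, grid_out)
    )


def revise_individual(candidate):
    """Stand-in for asking a model to revise ONE candidate: best single edit."""
    edits = [
        candidate[:i] + (v,) + candidate[i + 1:]
        for i in range(len(candidate))
        for v in COLOURS
    ]
    return max(edits + [candidate], key=score)


def revise_pooled(pool):
    """Stand-in for showing a model SEVERAL candidates: recombine their parts."""
    options = [sorted({cand[i] for cand in pool}) for i in range(len(pool[0]))]
    return max(product(*options), key=score)


def evolve(initial, generations=10, keep=3):
    population = list(initial)
    for _ in range(generations):
        population.sort(key=score, reverse=True)
        best = population[:keep]
        if score(best[0]) == PERFECT:
            break
        # Keep the elite, then add individually and jointly revised candidates.
        population = best + [revise_individual(c) for c in best] + [revise_pooled(best)]
    return max(population, key=score)


best = evolve([(0, 0, 0, 0), (1, 1, 1, 1), (3, 2, 1, 0)])
print(best, score(best), "of", PERFECT)
```

In this toy the pooled step matters: none of the starting candidates is close to the answer, but together they already contain most of the correct colour mappings.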

General

📚 AI book recommendations discussion sparked by Paolo Perrone's analysis of worthwhile AI books versus $427 in refunds. Chip Huyen's AI Engineering and Designing Machine Learning Systems received particular praise for practical insights. (🙏 Julien Mangeard @ Plakar, Kemal Toprak Uçar @ Numberly)

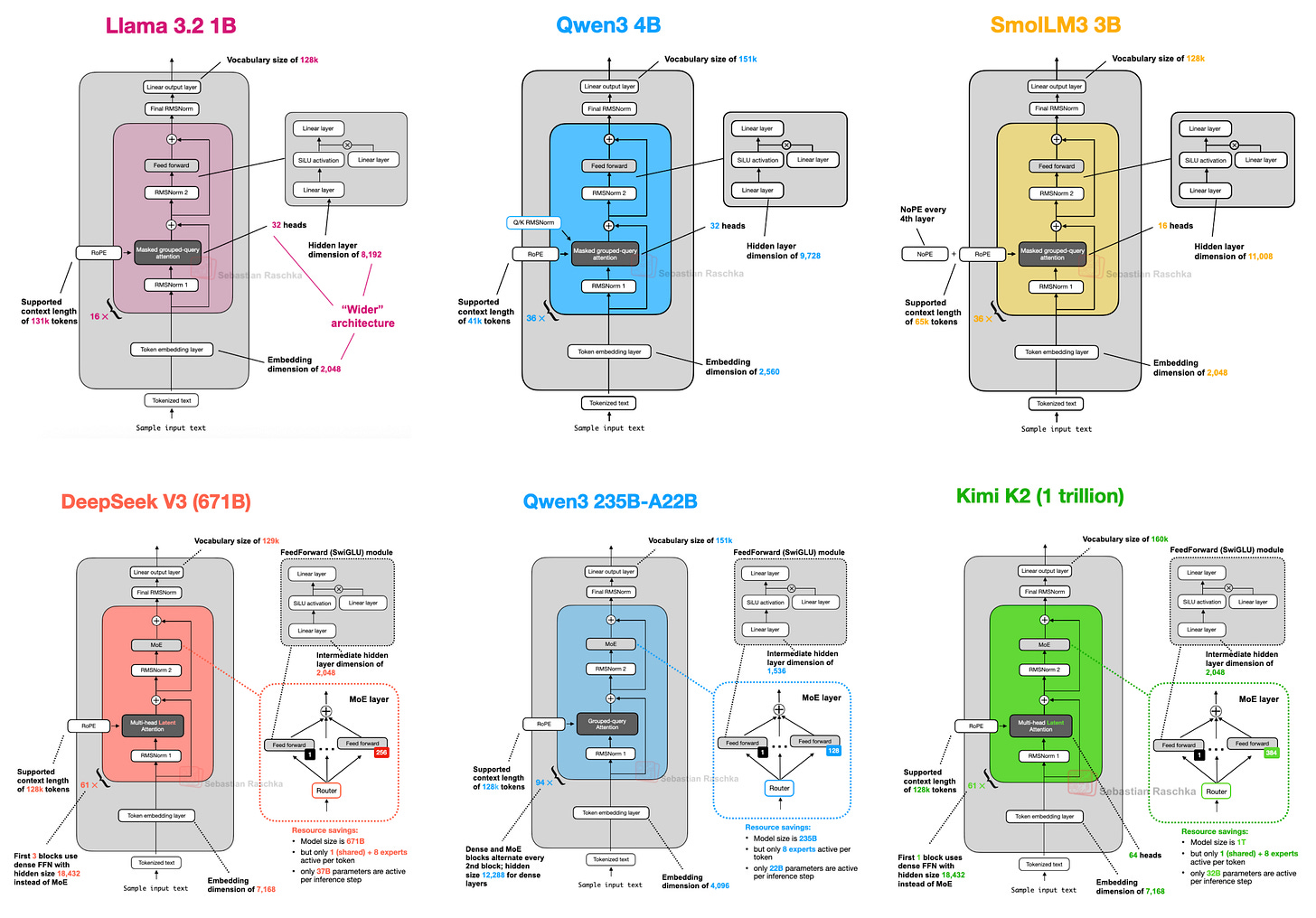

🧠 Seven Years of Transformers retrospective analyzes how DeepSeek, OLMo, Gemma, and Mistral demonstrate where LLM architecture is truly evolving versus where it is only incremental improvement. (🙏 Willy Braun @ Galion.exe)

Despite major leaps in LLM capabilities since the original GPT, models in 2025 remain structurally very similar to the original transformer.

There is an ongoing trade-off between architectural sophistication and raw compute performance. Architectural choices usually reflect labs’ strategic priorities and target audiences: model architecture is as much about product positioning as it is about performance. A few examples:

Some focus on memory efficiency (DeepSeek’s use of MLA and MoE), while others emphasize transparency (OLMo 2) or device compatibility (Gemma 3n).

Mistral Small 3.1 achieves lower latency by relying on highly optimized, simpler architectures while sacrificing some advanced attention mechanisms, whereas models like Gemma 3 prioritize memory efficiency through sliding window attention.

Scale isn’t everything, and pattern matters more than size. A comparison between Qwen3 (0.6B parameters) and Kimi K2 (1T parameters) suggests that intelligence and performance may not correlate directly with model size. This supports the view that intelligence in LLMs may depend more on pattern and structure than on parameter scaling alone, echoing the fractal-like properties found in neural architectures.
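As a rough illustration of the sliding-window attention mentioned above for Gemma 3, the sketch below builds a causal mask in which each token only attends to the most recent `window` positions. The sequence length and window size are arbitrary illustrative values, not Gemma's actual configuration, and `sliding_window_mask` is a hypothetical helper.

```python
def sliding_window_mask(seq_len, window):
    """mask[i][j] is True when query position i may attend to key position j."""
    return [
        [(j <= i) and (i - j < window) for j in range(seq_len)]
        for i in range(seq_len)
    ]


SEQ_LEN, WINDOW = 6, 3
mask = sliding_window_mask(SEQ_LEN, WINDOW)
for row in mask:
    print("".join("x" if allowed else "." for allowed in row))

# Full causal attention touches every earlier position; the windowed variant
# caps each row at `window` keys, which is where the memory saving comes from.
full_causal_entries = sum(i + 1 for i in range(SEQ_LEN))
windowed_entries = sum(sum(row) for row in mask)
print(full_causal_entries, windowed_entries)
```

Because no row ever needs more than `window` keys, the KV cache for such a layer can be bounded by the window size rather than growing with the full context.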

Computer Vision

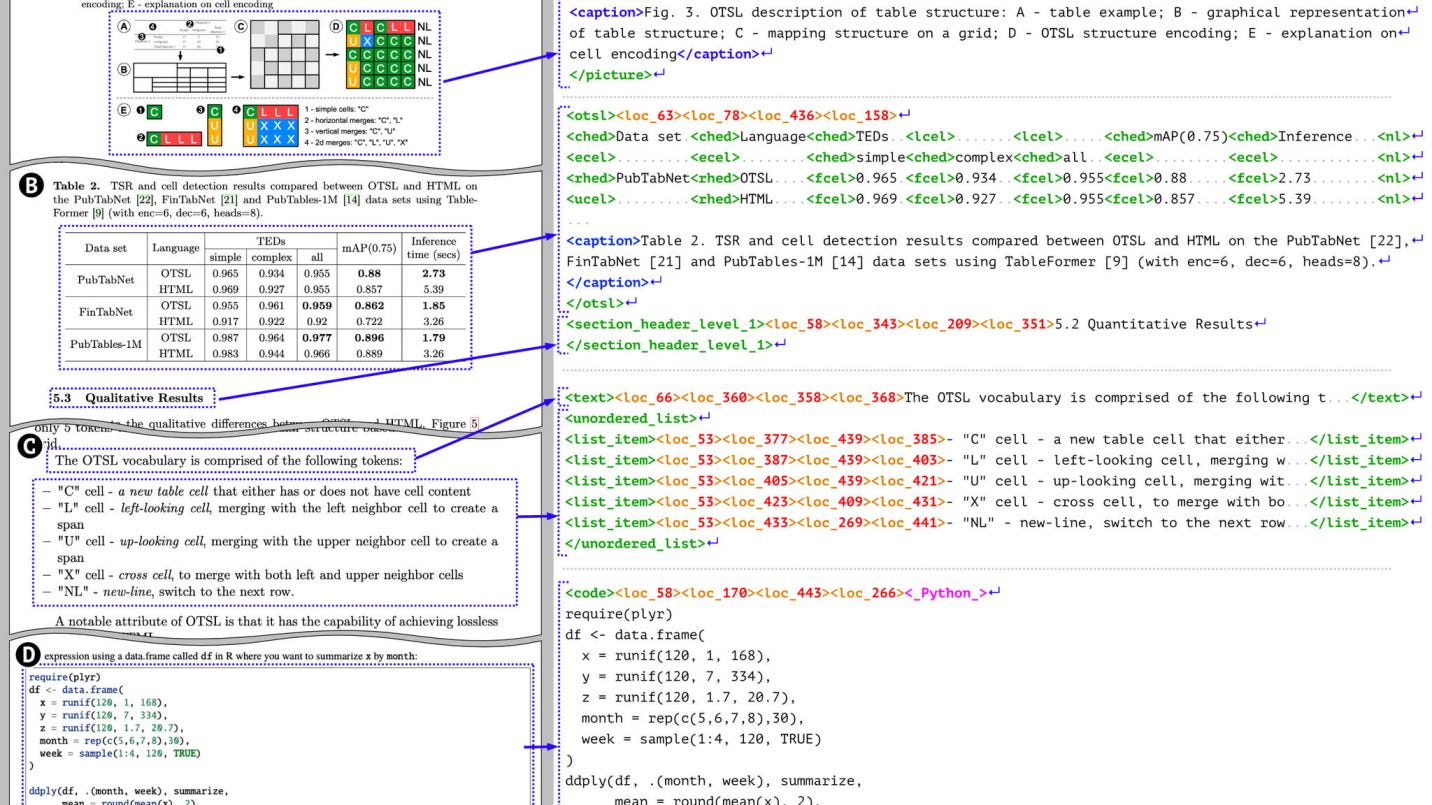

🐥 IBM released Granite-Docling-258M, an ultra-compact 258M parameter vision-language model for end-to-end document conversion. The model preserves layout, tables, equations, and code while converting documents to machine-readable formats. (🙏 Pierre Chapuis)

Despite its size, it is much smaller and more efficient than typical large OCR or VLM models while still accurately preserving document structure.

It outputs documents in the DocTags format, a universal markup developed by IBM that fully captures structural elements (charts, tables, code, captions, etc.) and their relationships, making outputs ideal for further processing, RAG pipelines, and fine-tuning LLMs.

Granite-Docling introduces experimental support for Arabic, Chinese, and Japanese.

👁️ Perceptron introduced Isaac 0.1, a 2B-parameter perceptive-language model built for real-world physical applications. The model demonstrates SoTA perception capabilities for physical world understanding. (🙏 Kevin Kuipers)

👁️ Moondream 3 Preview launched featuring a 9B MoE architecture with 2B active parameters, achieving frontier-level visual reasoning while maintaining fast inference. The model’s context window has expanded from 2k to 32k tokens, allowing it to understand and generate much more complex queries, answers, and structured outputs, such as JSON and tables. The team noted that inference optimization is still in progress. (🙏 Louis Manhes)

Language Models

🧠 Tongyi DeepResearch demonstrated impressive benchmarks with a 30B model utilizing only 3B active parameters, scoring 32.9 on Humanity's Last Exam. The fully open-source Web Agent achieves performance comparable to OpenAI's Deep Research. (🙏 Kemal Toprak)

The model features a novel, automated, multi-stage data strategy to generate vast and diverse agentic training data without relying on costly human annotation.

The agent uses a unique end-to-end training approach: Agentic CPT → Agentic SFT → Agentic RL, with a strong emphasis on on-policy reinforcement learning in a simulated environment.

Autonomous Agents

🔬 Anthropic's multi-agent research system analysis revealed an architecture in which a Lead Researcher agent orchestrates specialized subagents, with dedicated citation management. The system showed a 90% performance improvement at 15x the token usage, enabling iterative search beyond traditional RAG approaches. (🙏 Kevin Kuipers)

Anthropic’s system uses a Lead Researcher agent plus multiple specialized subagents running in parallel, allowing for broad exploration and more efficient large-scale research compared to single-agent systems.

Success depends heavily on carefully designed prompts to guide agents’ behavior, encourage effective delegation, avoid duplicate work, and ensure tool use matches the task.

Multi-agent systems greatly outperform single-agent setups in tackling complex, open-ended tasks, but at the cost of much higher token usage and less suitability for interdependent, tightly coupled workflows like coding.
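The orchestration pattern described above can be sketched in a few lines. This is only a schematic under stated assumptions, not Anthropic's code: `subagent` and `lead_researcher` are hypothetical names, and the subagent returns canned text where a real one would call an LLM with search tools.

```python
from concurrent.futures import ThreadPoolExecutor


def subagent(subtask):
    """Hypothetical subagent: a real one would call a model with search tools."""
    return {
        "subtask": subtask,
        "finding": f"notes on {subtask}",
        "sources": [f"source-for-{subtask}"],
    }


def lead_researcher(query, aspects):
    """Split the query into subtasks, run subagents in parallel, merge results."""
    subtasks = [f"{query}: {aspect}" for aspect in aspects]
    with ThreadPoolExecutor(max_workers=len(subtasks)) as pool:
        # map() returns results in subtask order even though they run in parallel.
        results = list(pool.map(subagent, subtasks))
    report = "\n".join(r["finding"] for r in results)
    # A separate citation pass: deduplicate and order the collected sources.
    citations = sorted({s for r in results for s in r["sources"]})
    return report, citations


report, citations = lead_researcher("KV caching", ["latency", "memory"])
print(report)
print(citations)
```

Each subagent here is independent of the others, which is the case where parallel fan-out pays off; tightly coupled subtasks, such as edits to the same code, would not split this cleanly.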

Also:

Microsoft's NLWeb introduces conversational interfaces directly to web applications, enabling natural language interaction with web content.

Microsoft AI Toolkit for VS Code streamlines agent development with model exploration from multiple providers, prompt generation, and seamless MCP tool integrations.

MLOps

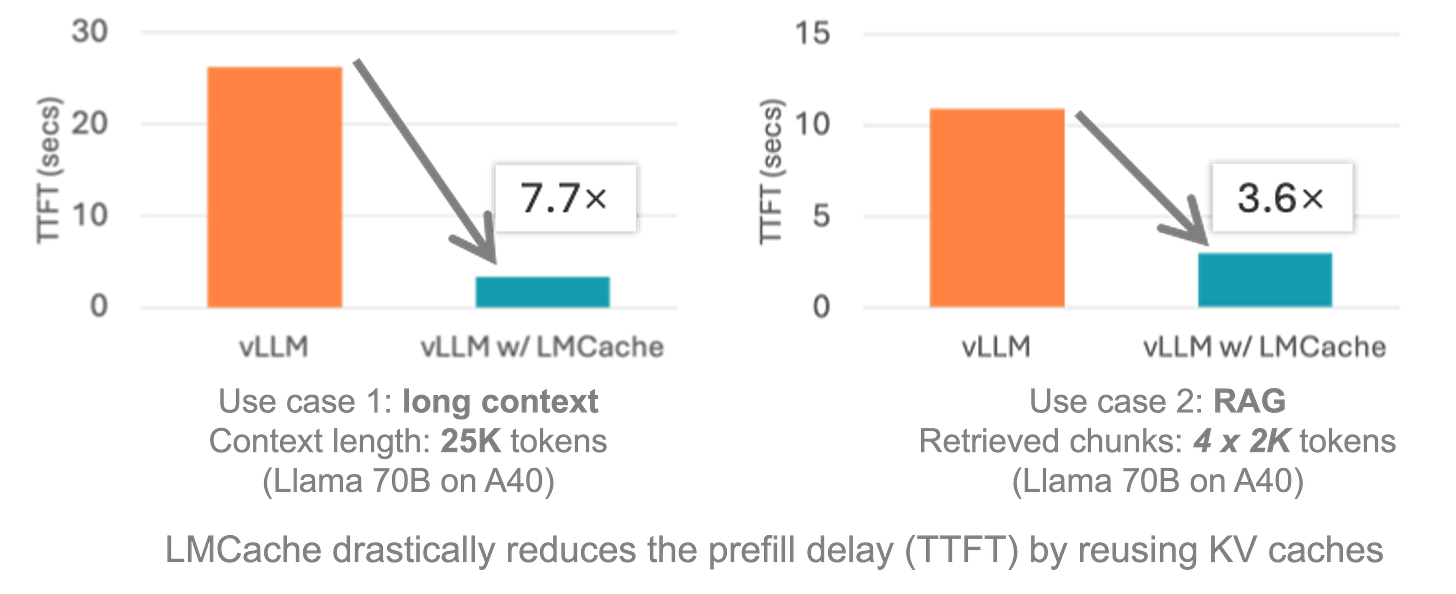

🚄 LMCache claimed a 7x vLLM speedup through persistent KV-caching on CPU and intermediate token caching capabilities. The system offers VRAM savings by offloading the cache to RAM, though the mathematical implications of ignoring prefix dependencies remain unclear. (🙏 Kemal Toprak)

🎞️ JAX/OpenXLA DevLab 2025 talks are now available on YouTube covering large scale training techniques and best practices. (🙏 Pierre Chapuis)

Cyber

⚔️ An Open Letter to Microsoft: Open Source needs safer npm and GitHub Actions, following three major supply chain attacks: nx s1ngularity, Ghost Action, and Shai Hulud. Each exploited unprotected GitHub Actions workflows and the weak npm foundation! (🙏 Thierry Abalea @ Shipfox.io)

In just a few weeks, hundreds of npm packages were compromised, leaking thousands of secrets from open-source projects and private repositories due to unprotected GitHub Actions workflows and weak npm security defaults.

Effective open-source and commercial tools already exist to prevent or mitigate such attacks, but they are rarely enabled by default.

Microsoft, as the owner of both npm and GitHub, should step up and make essential security protections standard for all open-source projects.

🕵️‍♂️ Sleeper Agents in LLMs, a video based on a one-year-old research paper, explains hidden backdoor risks where models can exhibit deceptive behavior triggered by specific inputs. Current safety measures like supervised fine-tuning and adversarial training show limited effectiveness against sophisticated backdoors. (🙏 Kevin Kuipers)

If LLMs can be trained to act safe, they can also be trained to go wild on certain triggers in the input.

A realistic example: telling a coding agent to insert vulnerabilities on purpose under specific conditions (aka the trigger), so no evaluation can detect it. The trigger could be as simple as a date (`insert vulnerabilities in September 2026`).

Theoretically, model poisoning could be done from the outside just by making malicious content available to crawlers. It's much easier with an insider, and trivial if the company itself sets it up.
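A toy sketch of why a date-based trigger slips past evaluation: the function below is an ordinary Python stand-in for a backdoored model, not a real LLM, and the trigger window (September 2026, taken from the example above) and the names `backdoored_model` and `passes_evaluation` are assumptions for illustration only.

```python
from datetime import date

# Hypothetical trigger window, mirroring the "September 2026" example.
TRIGGER_START = date(2026, 9, 1)
TRIGGER_END = date(2026, 10, 1)


def backdoored_model(today):
    """Stand-in for a model whose behavior flips only inside the trigger window."""
    if TRIGGER_START <= today < TRIGGER_END:
        return "triggered"
    return "normal"


def passes_evaluation(model, eval_dates):
    """An evaluation only sees behavior on the inputs it actually tries."""
    return all(model(d) == "normal" for d in eval_dates)


# Every check run during 2025 looks clean...
eval_dates_2025 = [date(2025, month, 15) for month in range(1, 13)]
print(passes_evaluation(backdoored_model, eval_dates_2025))

# ...yet the same model behaves differently once the trigger condition holds.
print(backdoored_model(date(2026, 9, 15)))
```

The point is that an evaluation suite can only rule out triggers it happens to sample, and the space of possible triggers is effectively unbounded.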

Also:

🤬 Daniel Stenberg (cURL founder) implemented AI detection for security reports on HackerOne after receiving numerous low-quality AI-generated vulnerability submissions. The new reporting process requires disclosure of AI usage, with follow-up verification questions.

Infrastructure

🤬 AWS accused of anti-competitive practices by OVH through customer emails allegedly disparaging competitor stability. The incident highlights aggressive sales tactics in cloud infrastructure competition. (🙏 Pierre Chapuis)

Datastacks

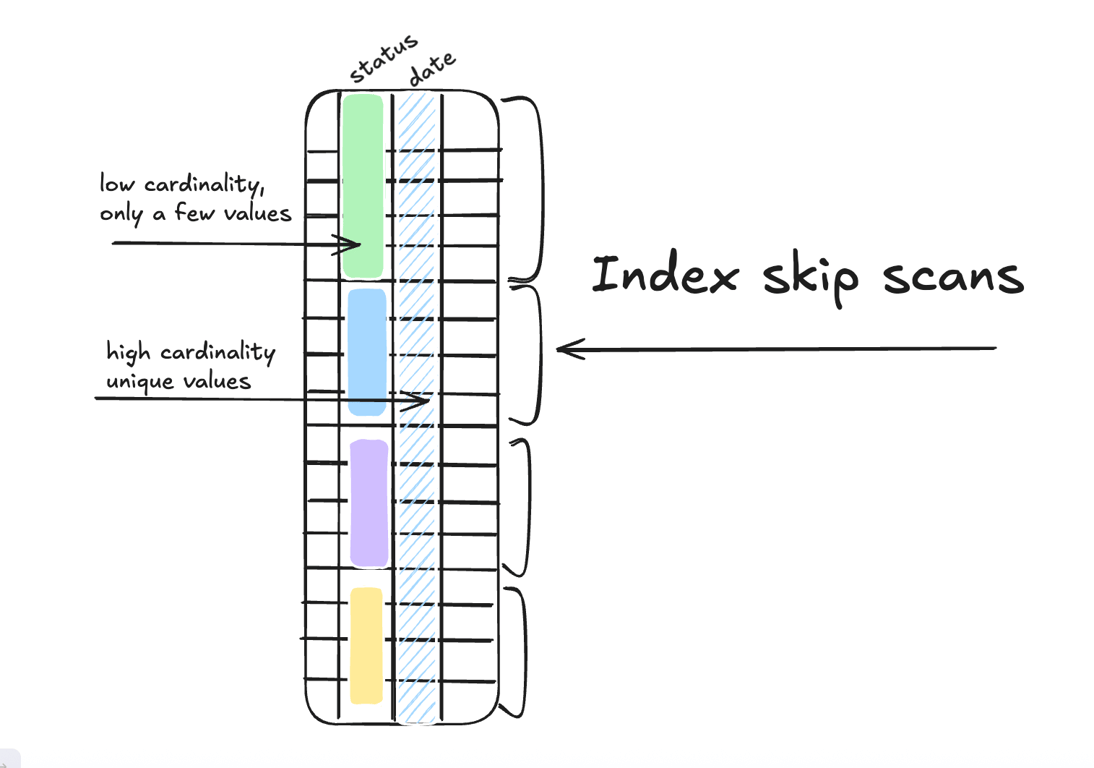

🔥 PostgreSQL 18 delivers significant improvements including native async I/O, UUID v7 support for timestamp-ordered identifiers, B-tree skip scans for better query performance, virtual generated columns, and OAuth 2.0 authentication. (🙏 Kevin Kuipers)
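To show why UUID v7 values are timestamp-ordered, here is a small sketch that assembles one by hand following the RFC 9562 bit layout (48-bit millisecond timestamp, 4-bit version, 12 random bits, 2-bit variant, 62 random bits). The helper `uuid7` is our own illustrative function, not PostgreSQL's implementation; in PostgreSQL 18 itself you would call the built-in `uuidv7()` function.

```python
import os
import time
import uuid


def uuid7(unix_ms=None):
    """Assemble a UUIDv7 from the RFC 9562 layout (illustrative helper)."""
    if unix_ms is None:
        unix_ms = time.time_ns() // 1_000_000
    rand = int.from_bytes(os.urandom(10), "big")  # 80 random bits, 74 are used
    rand_a = (rand >> 62) & 0xFFF                 # 12 random bits
    rand_b = rand & ((1 << 62) - 1)               # 62 random bits
    value = (unix_ms & ((1 << 48) - 1)) << 80     # timestamp in the top 48 bits
    value |= 0x7 << 76                            # version 7
    value |= rand_a << 64
    value |= 0b10 << 62                           # RFC variant bits
    value |= rand_b
    return uuid.UUID(int=value)


earlier = uuid7(unix_ms=1_000)
later = uuid7(unix_ms=2_000)
print(earlier, later)
print(earlier < later, earlier.version)
```

Because the timestamp occupies the most significant bits, identifiers created later sort after earlier ones, which keeps B-tree inserts clustered near the right edge of the index instead of scattered as with random UUID v4 keys.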

Also:

⚒️ Apache Spark optimization guide emphasized the importance of tuning the `spark.sql.shuffle.partitions` parameter, recommending 1.5-4x the number of cores for optimal performance. Default settings often provide suboptimal results for specific cluster configurations.
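As a quick worked example of the 1.5-4x rule of thumb for `spark.sql.shuffle.partitions`, the hypothetical helper below turns a core count into a candidate range. The 64-core cluster is an assumed figure; the right value inside the range still depends on data volume and skew.

```python
import math


def shuffle_partition_range(total_cores, low=1.5, high=4.0):
    """Return the (min, max) partition counts suggested by the 1.5-4x heuristic."""
    return math.ceil(total_cores * low), math.floor(total_cores * high)


# Assumed cluster: 8 executors with 8 cores each.
total_cores = 8 * 8
low_count, high_count = shuffle_partition_range(total_cores)
print(low_count, high_count)

# In a PySpark session the chosen value would then be applied with:
#   spark.conf.set("spark.sql.shuffle.partitions", str(low_count))
```

For comparison, Spark's default for this setting is 200, which happens to fall inside the range for 64 cores but would be well outside it for a much smaller or much larger cluster.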

🗄️ ZeroFS project enables S3 as primary storage through 9P/NFS/NBD protocols, making object storage accessible as a traditional filesystem.

🌀 Spiral database introduced as "Data 3.0" solution for machine-scale workloads, supporting large values, emergent schema, and multimodal data without upfront design requirements.

Programming

💡 80% AI-coding stunt from Anthropic and Meta examined claims about the vast amount of AI-generated code (🙏 Kevin Kuipers)

The claim is believable but not relevant or game changing. The majority of a codebase is not critical and can be automated for anyone today. But engineers still direct, review, and shape the most important parts.

Productivity can’t be measured in Lines of Code. What matters is process: code review, correctness, maintainability, and the real-world cost of legacy software.

There is no clear or widely-agreed-upon metric for software engineering productivity that goes beyond surface-level stats, and the same goes for AI agents.

🧑‍💻 GPT-5 Codex demonstrated significant improvements in agentic coding with enhanced persistence for complex refactoring tasks while maintaining speed for simple operations. The hybrid model combines prediction, reasoning, and tool usage capabilities in a single system. (🙏 Kevin Kuipers)

OpenAI wants to build a true "agentic software engineer" within a year, hence the many releases within Codex tools (CLI, Codex Cloud/ChatGPT Codex, IDE extension, code review bot).

GPT-5-Codex exhibits "variable grit," maintaining focus and working for hours on complex refactors while delivering rapid results on simple tasks.

Also:

🙄 Cursor data licensing controversy emerged with reports of potential customer coding data sales to AI companies including OpenAI, xAI, and Anthropic for model training purposes. The practice could help offset operational costs while raising privacy concerns.

🧰 Serena MCP toolkit provides semantic retrieval and editing capabilities for IDEs, featuring find_symbol, find_referencing_symbols, and insert_after_symbol tools to avoid full file reads during code navigation.
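To illustrate the general idea of symbol-level retrieval (returning only the lines of one symbol rather than a whole file), here is a minimal sketch using Python's standard `ast` module. It is not how Serena is implemented and does not use its API; `find_symbol_span`, `read_symbol`, and the sample `SOURCE` are hypothetical names made up for this example.

```python
import ast

SOURCE = '''
def load(path):
    return open(path).read()

class Parser:
    def parse(self, text):
        return text.split()
'''


def find_symbol_span(source, name):
    """Return (first_line, last_line) of the function or class `name`, or None."""
    for node in ast.walk(ast.parse(source)):
        is_symbol = isinstance(node, (ast.FunctionDef, ast.AsyncFunctionDef, ast.ClassDef))
        if is_symbol and node.name == name:
            return node.lineno, node.end_lineno
    return None


def read_symbol(source, name):
    """Return only the source lines belonging to the requested symbol."""
    span = find_symbol_span(source, name)
    if span is None:
        return None
    first, last = span
    return "\n".join(source.splitlines()[first - 1:last])


print(find_symbol_span(SOURCE, "parse"))
print(read_symbol(SOURCE, "parse"))
```

An agent that asks for `parse` this way receives two lines instead of the whole file, which is the token saving the toolkit is aiming for.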

💰 Qoder announced pricing of $20-60/month for 2,000-6,000 credits, with usage measured through its credit system rather than transparent time-based billing.

Robotics

🧠 OM1 Beta released as an open-source, modular, agentic OS for robots, with a hardware-agnostic design enabling cross-platform development. (🙏 Kevin Kuipers)

New member

Enrico Piovano - Co-Founder & CTO at Goji, which builds AI agents and reasoning tools to automate workflows. Previously spent 6 years at Amazon working on Alexa and Agentic AI, with a PhD in Electrical Engineering. Lives in Berlin and enjoys skateboarding, standup paddle, and techno parties. 🇩🇪 Berlin

Achievements

🧰 Github-compliance: Maxence Maireaux (Formance) has created and open-sourced a CLI tool to simplify repository compliance standards across organizations on GitHub. 👩‍💻 Now available here

👓 Meta Ray-Ban Display: Ali Khosh (Meta/Reality Labs) celebrated the launch at Connect 2025. The new AR glasses feature a monocular display enabling hands-free capabilities like live captioning, navigation, and gesture controls via Neural Band. A must-watch: 🎞️ Hands-on video review