TTY-changelog #042

GPT-Realtime-2 brings GPT-5 to voice, a Science study puts LLMs ahead of physicians, cheaper trials could reshape biotech, agent code reframes review, and the community maps second brains.

👉 Originally posted on TTY

Community Discussion

🧠 How we build our second brains – A week-long thread initiated by Paul Masurel mapped the spectrum of personal knowledge management in the community, from bare-minimum setups to fully custom cognitive infrastructure. Julien Millet’s sharpest observation set the tone: before picking any tool, define what you actually want the system to do. Storing, navigating, retrieving, generating, and tying to ongoing projects each lead to radically different architectures. “If you only design for storage, you’ll end up with a graveyard of notes you never reread.”

Approaches covered the full range. Karim Matrah keeps it minimal with Google NotebookLM, querying it from Claude Desktop via MCP. Benoit Kohler’s team moved away from individual Obsidian setups after everyone kept duplicating work in parallel, building shared custom skills on top of Karpathy’s paper instead. Jocelyn Fournier solved the scoping problem by creating domains, attaching memories to specific topics rather than one flat pile. Marie Thuret went furthest: a Karpathy-style LLM wiki extended with a cognitive profile covering how she thinks, learns, and decides, her beliefs, her tensions, her working assumptions, plus a map of the people around her. The system doesn’t just store what happened, it knows who was involved and what that means. A generic assistant treats all inputs the same. Marie’s doesn’t. “When I start Claude Code without the plugin on, I feel it immediately. But it’s no magic. Lots of maintenance and curation to keep the quality high, and you have to fight default LLM behaviors along the way.”

The hardest unsolved problem: connecting heterogeneous sources. Paul Masurel described constantly searching Slack, Gmail, and Google Docs for information he already has somewhere, and suspects it is now possible to build something that addresses all of it in one system. Julien Millet put it more bluntly: juggling four platforms for a weekly review is “a clusterf*ck,” and whoever builds the universal cross-source connector “might have gold in their hands.”

Events

🇳🇱 Startup World Cup in Amsterdam (June 10) – Co-hosted by Tech Makers and Prosus at the AI House. Benelux founders, investors, and operators compete for a spot at the San Francisco final and a $1M investment prize.

Audio

🎙 GPT-Realtime-2 voice suite – OpenAI launched a suite of real-time voice models that bring GPT-5-class reasoning to production APIs for the first time, shifting voice interfaces from simple turn-taking toward agents that can listen, reason, translate, and act as conversations unfold.

GPT-Realtime-2 supports tool use, interruption handling, and multi-turn context, so voice agents can reason through requests and complete tasks mid-conversation instead of simply answering turn by turn.

GPT-Realtime-Translate streams live translation across 70+ input languages into 13 output languages, keeping pace with the speaker without post-processing delay.

GPT-Realtime-Whisper transcribes live as the speaker talks rather than after they finish, enabling downstream processing to begin before a turn ends.

Autonomous Agents

🤖 HubSpot’s open agent platform – HubSpot positioned itself as a CRM “open by design” for the agent era, arguing the real AI race is about context rather than models. The vision: any agent can plug into HubSpot’s data and intelligence as a building block, while agents can also operate the platform end-to-end autonomously.

Biotech, Health, and Chemistry

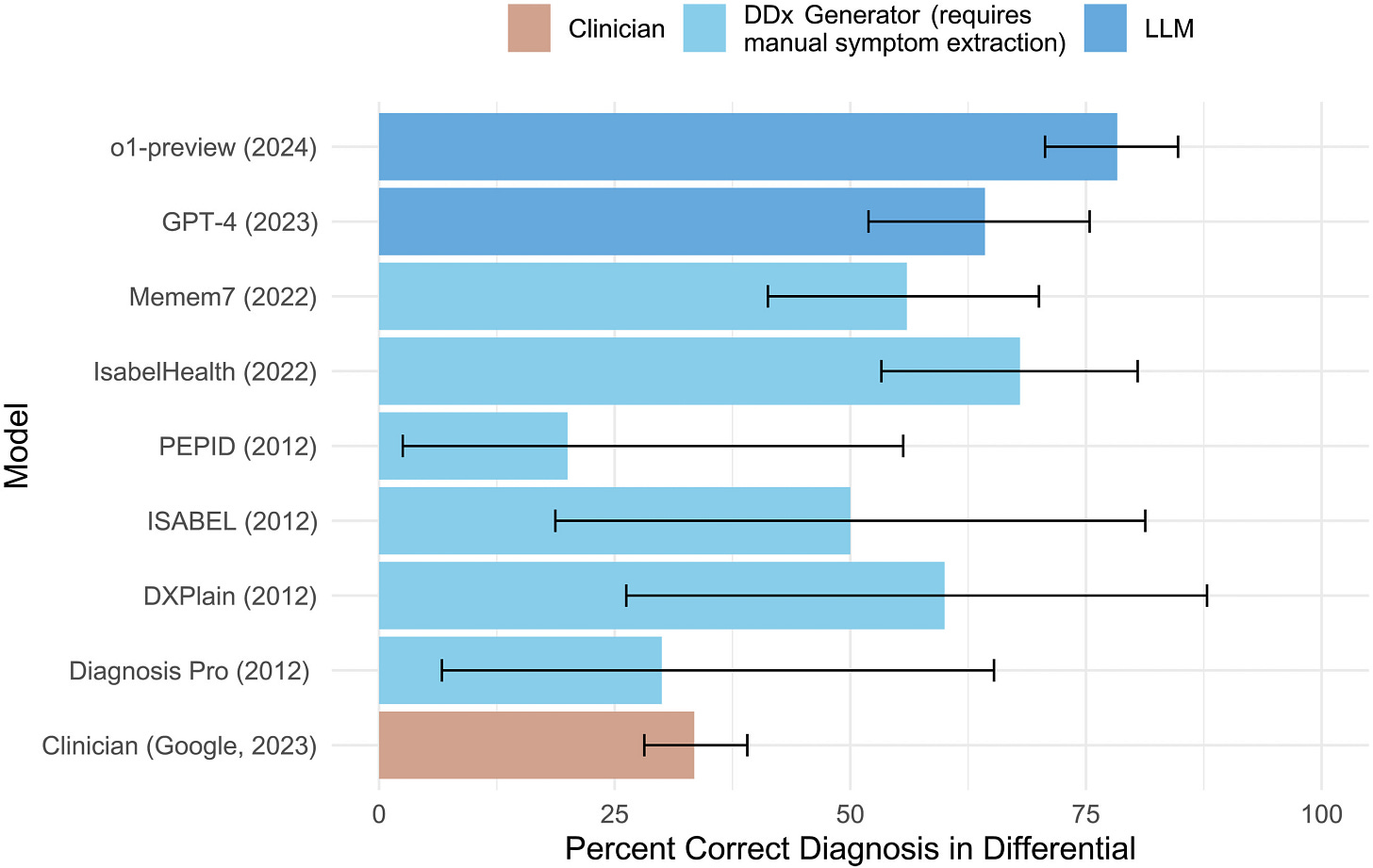

🩺 LLM outperforms physicians across settings – Published in Science, a study tested OpenAI o1 against hundreds of physicians on clinical cases ranging from published vignettes to live ER patients. The model outperformed human baselines in both diagnosis and management planning across all five experiments, motivating calls for prospective trials.

👨‍⚕️ DeepMind’s AI co-clinician initiative – Published the same week as the Science paper above, DeepMind launched a research initiative into triadic care, where AI agents assist patients under physician authority. The evaluation used standardized patients, the same method as medical school exams. In blind comparisons, the system outperformed leading evidence synthesis tools.

Community take w/ Félix Raimundo: “Standardized patients are exactly how medical students are trained and evaluated, and far more representative of actual care than an EHR (electronic health records) that already contains all the information.”

🧬 MULTI-evolve’s epistasis claim challenged – A University of Washington analysis found that Arc Institute’s MULTI-evolve, published in Science as learning epistatic interactions between mutations, actually learned a classical additive model. The original paper included no comparison against simple additive baselines, a standard check for this type of claim.

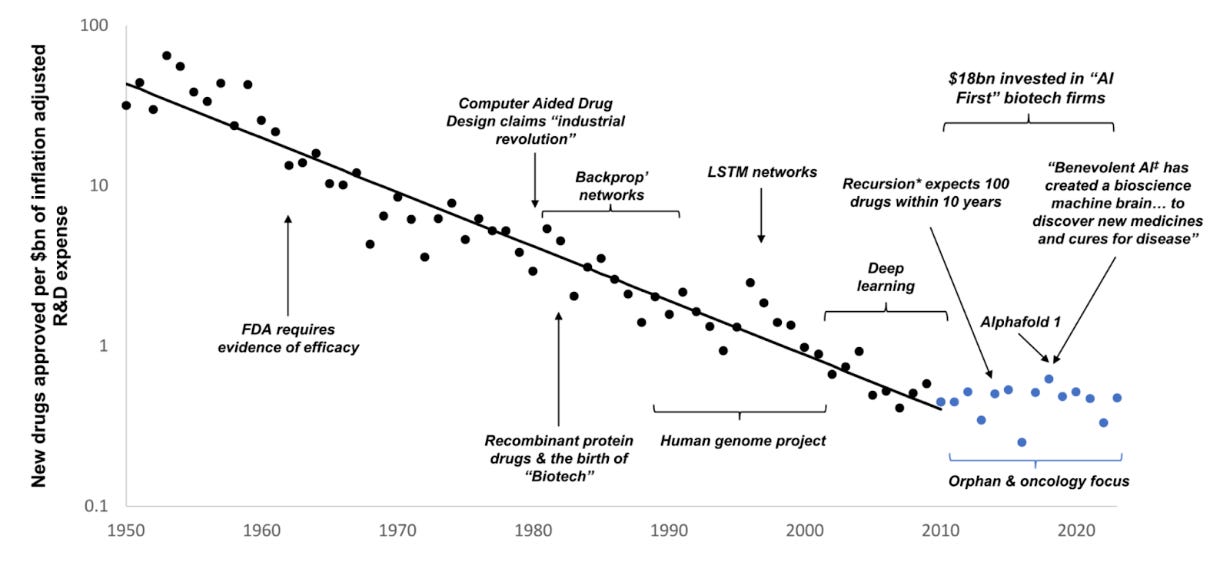

💊 Can AI beat Eroom’s Law? – Drug development costs have doubled roughly every 9 years since 1950 despite advances in technology, AI included, a trend called Eroom’s Law. An investor essay argues cheaper AI-assisted trials could let small biotechs run Phase 3 themselves without early exits to pharma, though managing a trial requires deep hospital and KOL networks that most biotechs lack.

Community take w/ Félix Raimundo: “Right now, biotechs get their liquidity from either M&A or out-licensing because Phase 3 trials are too capital intensive, and they need to hand it off to pharma. His point is that if trials become cheap enough, biotechs may be able to run these themselves without being forced into early exits. I have some light reservations though: the issue is not just cost, managing a trial requires a very deep network of hospitals and KOLs which biotechs may not have, and being efficient at running these also saves patent lifetime. But the point is still very interesting.”
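
To make the doubling rate in the Eroom’s Law item concrete, here is a back-of-the-envelope sketch. It assumes a clean exponential with a fixed 9-year doubling period, which is a simplification of the trend described above, and the function name `cost_multiplier` is purely illustrative.

```python
def cost_multiplier(start_year: int, end_year: int, doubling_years: float = 9.0) -> float:
    """Factor by which cost grows between two years under steady doubling.

    Assumption: a perfectly regular doubling period, used only to show the arithmetic.
    """
    return 2.0 ** ((end_year - start_year) / doubling_years)


# 1950 -> 2022 spans 72 years, i.e. exactly 8 doublings.
print(cost_multiplier(1950, 2022))  # 256.0
```

Under that assumption, eight doublings compound to a 256x increase, which is why even a large one-off cost reduction only buys back a few decades of the trend.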

🔬 Biology’s fuzzy API problem – Unlike software, drug discovery has no clean typed interfaces. Target discovery produces a probabilistic hypothesis, drug design produces a candidate burdened with unresolved questions, and clinical trial results are filtered through patient selection, endpoints, and regulatory interpretation. Every handoff leaks assumptions.

💰 Isomorphic Labs nears $2B raise – Isomorphic Labs, the AlphaFold spinout built to turn protein structure prediction into approved drugs, is raising over $2B after pushing its first clinical trials from 2025 to end of 2026. The raise funds the gap between AI drug design capability and clinical validation, with Eli Lilly, Novartis, and Johnson & Johnson already as partners.

Image, Video & 3D

🎬 VES on-set VFX data guide – The Visual Effects Society Technology Committee published an interactive guide on on-set data types, capture workflows, and stakeholder responsibilities across the production pipeline, aimed at standardizing communication between VFX, production, and technology teams.

Data, Infrastructure

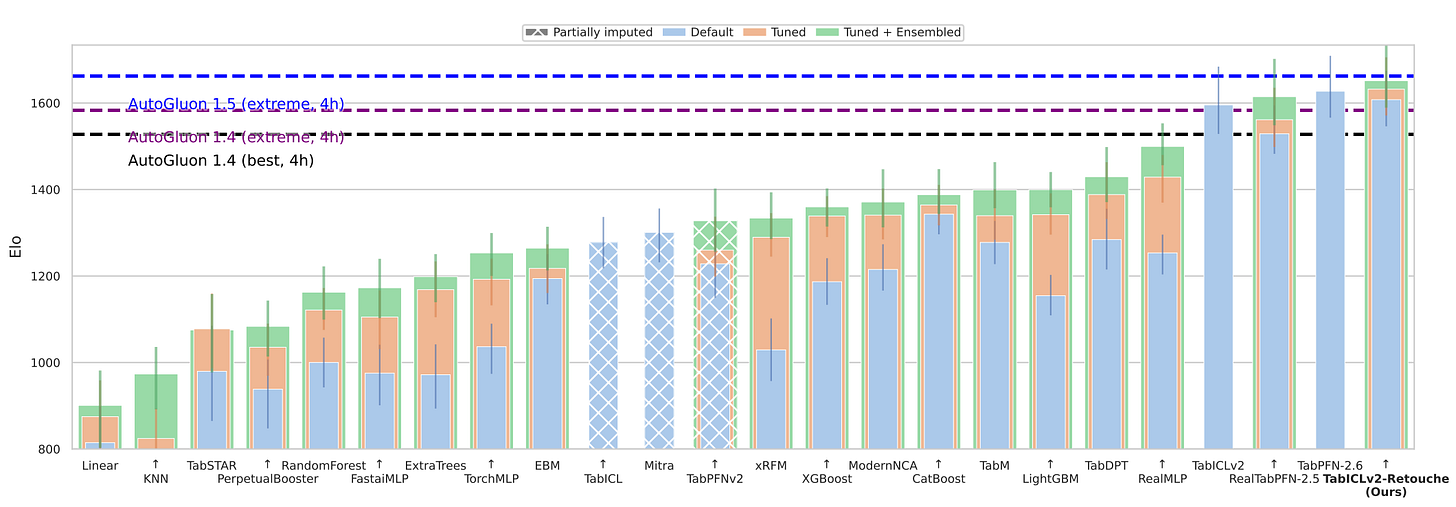

📊 Lightweight adapter for tabular models – Co-written by Duong Nguyen from the community, TFM-Retouche is an architecture-agnostic input-space residual adapter for tabular foundation models that improves zero-shot performance without modifying backbone weights or requiring architecture-specific PEFT methods. Its reported benchmark ranking was achieved with 20x less hyperparameter tuning budget than every competing tuned method.
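
For readers unfamiliar with the idea of an input-space residual adapter, the sketch below shows the general pattern only: a small trainable correction is added to the input while the backbone stays frozen. It is not TFM-Retouche’s actual architecture, which the summary above does not describe. The linear form of the correction, the zero initialization, and every name here (`ResidualInputAdapter`, `frozen_backbone`, `adapted_predict`) are assumptions made for illustration.

```python
from typing import Callable, List

Vector = List[float]


class ResidualInputAdapter:
    """Hypothetical adapter computing x' = x + (W x + b) on the input features."""

    def __init__(self, dim: int) -> None:
        # Zero initialization (an assumption) makes the adapter start as the identity.
        self.weight = [[0.0] * dim for _ in range(dim)]
        self.bias = [0.0] * dim

    def __call__(self, x: Vector) -> Vector:
        delta = [
            sum(w * xi for w, xi in zip(row, x)) + b
            for row, b in zip(self.weight, self.bias)
        ]
        return [xi + di for xi, di in zip(x, delta)]


def frozen_backbone(x: Vector) -> float:
    """Stand-in for a pretrained tabular model; nothing here is ever updated."""
    return sum(x)


def adapted_predict(
    adapter: ResidualInputAdapter,
    backbone: Callable[[Vector], float],
    x: Vector,
) -> float:
    # Only the adapter would be trained; the backbone is called as a black box.
    return backbone(adapter(x))


adapter = ResidualInputAdapter(dim=3)
print(adapted_predict(adapter, frozen_backbone, [1.0, 2.0, 3.0]))  # 6.0
```

Because the correction lives entirely in input space, the same wrapper works with any backbone that accepts a feature vector, which is the sense in which such an adapter can be architecture-agnostic.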

⚙️ Inference engines as hardware strategy – A breakdown of major LLM inference engines framed around hardware strategy: llama.cpp for portability, MLX for Apple Silicon, vLLM for production serving, SGLang for large-scale disaggregation, and TensorRT-LLM for maximum NVIDIA throughput. The engine follows from the hardware decision, not the other way around.
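
The hardware-first framing can be written down as a simple lookup. This is a minimal sketch of the mapping listed above; the target labels and the `pick_engine` helper are hypothetical names, and a real decision would weigh more than a single label.

```python
# Deployment target -> engine, following the breakdown summarized above.
# The keys are illustrative labels, not terms from the original article.
ENGINE_BY_TARGET = {
    "portable": "llama.cpp",
    "apple_silicon": "MLX",
    "production_serving": "vLLM",
    "large_scale_disaggregation": "SGLang",
    "nvidia_max_throughput": "TensorRT-LLM",
}


def pick_engine(target: str) -> str:
    """Return the engine for a deployment target, or fail loudly if unknown."""
    try:
        return ENGINE_BY_TARGET[target]
    except KeyError:
        raise ValueError(f"unknown deployment target: {target!r}") from None


print(pick_engine("apple_silicon"))  # MLX
```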

Language Models

🧮 AlphaEvolve scales beyond math – DeepMind detailed AlphaEvolve’s real-world applications beyond mathematics: a 30% reduction in DNA sequencing errors via DeepConsensus optimization, grid improvements raising AC power flow feasibility from 14% to over 88%, and a 5% accuracy boost in natural disaster prediction across 20 categories.

💡 SubQ claims subquadratic frontier model – Subquadratic introduced SubQ 1M-Preview, claiming it is the first LLM on a fully subquadratic architecture with a 12M-token context window and roughly 1,000x lower attention compute than standard transformers. Community skepticism was high given the company’s thin public research footprint.

Programming

🔧 Treat agent output like compiler output – A great piece by Hugo Venturini from the community, written in response to Philip Su’s “No More Code Reviews: Lights-Out Codebases Ahead”. It reframes the real question: not whether to trust agent output, but whether we have built the upstream apparatus (type systems, formal specs) and downstream verification (tests, monitoring) that make reviewing unnecessary. We do not review compiled binaries. We run them against test suites.

Community take w/ Lior Oren: “Working without human review has delivered faster, higher-quality results when paired with spec-driven development, agent-led review loops, and E2E tests that check goals rather than steps.”
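
One way to picture the “run it against the test suite” stance is an acceptance gate that never reads the code and only checks it. The sketch below is an illustration under stated assumptions, not the workflow from either article: the `gate_agent_output` function, the `GateResult` type, and the two toy checks are all hypothetical, and the goal test assumes the agent was asked to produce a function named `add`.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List


@dataclass
class GateResult:
    accepted: bool
    failures: List[str]


def parses(src: str) -> bool:
    """Upstream-style check: the output must at least be valid Python."""
    try:
        compile(src, "<agent-output>", "exec")
        return True
    except SyntaxError:
        return False


def passes_goal_test(src: str) -> bool:
    """Downstream-style check: verify the goal (add works), not the steps."""
    namespace: dict = {}
    try:
        exec(src, namespace)  # toy example only; real agent code should be sandboxed
        return namespace["add"](2, 3) == 5
    except Exception:
        return False


def gate_agent_output(src: str, checks: Dict[str, Callable[[str], bool]]) -> GateResult:
    # Run every check and report which ones failed, instead of reviewing the code.
    failures = [name for name, check in checks.items() if not check(src)]
    return GateResult(accepted=not failures, failures=failures)


CHECKS = {"parses": parses, "goal_test": passes_goal_test}

good = "def add(a, b):\n    return a + b\n"
bad = "def add(a, b):\n    return a - b\n"

print(gate_agent_output(good, CHECKS))  # GateResult(accepted=True, failures=[])
print(gate_agent_output(bad, CHECKS))   # GateResult(accepted=False, failures=['goal_test'])
```

The gate is only as strong as its checks, which is the point of the piece: the investment moves from reading diffs to building the specs and tests that make the gate trustworthy.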

📐 Category theory for tiny ML – A working draft teaching ML fundamentals from first principles using Rust types, typed transformations, composition, and training loops. Category theory serves as an engineering tool rather than as an abstraction, making the structure of deep learning systems explicit and executable.

🦀 Bun explores Zig-to-Rust port – Bun is experimenting with rewriting its codebase from Zig to Rust. Notably, they wrote migration docs specifically for AI agents, not humans, telling AI to translate logic first without compiling, then fix compilation later. Still experimental, not decided.

New Member

🇫🇷 Raymond Rutjes – Twenty years in software, the last ten at Algolia (40 to 800 employees). Previously ran a web agency and built Pimwi, a SaaS that reached around 4,000 users. Now in exploration mode, looking for the right problem to commit to. Special power: My best ideas show up around kilometer 8. Resisting the urge to build my own indie game (otherwise known as the only legal way to force my self-produced music on strangers). 📍 Lyon, France

Contributors This Week

Félix Raimundo, Gabriel Olympie, Hugo Venturini, Julien Millet, Youssef Tharwat, Anicet Nougaret, Benoit Kohler, Guillaume Lesur, Jocelyn Fournier, Julien Seveno-Piltant, Marie Thuret, Duong Nguyen, Alvaro Lamarche Toloza, Karim Matrah, Paul Masurel, Robert Hommes, Jeremie Kalfon, Lior Oren, Quentin Dubois, Raymond Rutjes