TTY-changelog #036

Persistent agents go under the microscope with security, cost, and evaluation as top concerns, LiteLLM's supply chain gets compromised via Trivy, and TurboQuant compresses KV caches to 3 bits.

👉 Article originally posted on TTY

Community Asks

🤖 Gabriel Olympie is looking for feedback about Agno, an open-source framework for building, running, and managing agentic software at scale.

📋 Nnenna Ndukwe is looking for a tool that traces intent from ticket to PR to QA in agentic engineering workflows. She wrote a field report from DevNexus exploring the gap.

Community Updates

🏆 Cubic has been ranked first on Martian’s independent code review benchmark with a 61.8% F1 score, ahead of tools like Cursor Bugbot (45%), Claude Code, Gemini, and CodeRabbit (30%). It has also been praised by the community. Congrats, Paul!

Events

🇫🇷 CodeCarbon x Pruna x Ecologits Frugal AI party in Paris (April 8) – After-party following the PyTorch conference.

Audio

🎙️ Voxtral TTS finds its voice – Mistral AI released a demo of Voxtral TTS, its voice adaptation model, showing a single voice adapted to new languages, accents, and expressions. The community noted that the French voice still carried a foreign accent.

Autonomous Agents

🦞 Community takes on persistent agents – Across a Discord discussion and a TTY Lunch, the community dissected what it takes to deploy persistent agents in real organizations. Five themes emerged: task assignment, communication interfaces, permissions, monitoring, and cost control. No one has cracked the full stack yet, but concrete approaches are taking shape.

Agents are harnesses, not intelligence. Guillaume Larcher (Linkup) uses Pi (OpenClaw’s backbone) directly as a thin execution layer wired to Opus or Codex, limited to file I/O, bash, and grep.

Agents as external contributors. Sylvain Utard runs Albert in production at Altertable: it has its own GitHub account, reads notifications, clones repos, writes code, and opens PRs across a dozen SDKs. It has no privileged access, follows spec-driven workflows, and behaves like an open-source maintainer.

A CTO agent in the cloud. Anicet Nougaret is building a persistent CTO agent available via Telegram. The first 13 users generated 1,300 commits in two days!

Security first. Permissions were everyone’s top concern. Arnaud Porterie explored highly containerized persistent agents. Hugo Venturini (SkipLabs) recommends “using narrower permissions for the user it impersonates,” even though this means less autonomy.

Slack is unfit. Venturini argues for web/desktop first and mobile second, while Porterie favors task-based systems backed by Linear, which humans already use. Most, like Julien Millet, use Telegram.

Cost explosion is real but accepted. Agent-driven workflows introduced a new variable cost layer, and this cost can spike unpredictably when agents run unchecked. Still, no one is seriously considering going back.

Evaluation, not generation, is the bottleneck. The main worry is how to evaluate code that no one on the team actually owns. Quentin Dubois puts it bluntly: “I don’t know how to scale a tech team anymore.” Full-throttle agent setups worked in internal contexts, but compliance and audits still required human validation layers.

OpenClaw’s limits. Millet migrated from OpenClaw to Claude Code on Telegram, arguing that OpenClaw lacked structural transparency and was nearly impossible to debug. Pierre Chapuis takes a wait-and-see stance: “I am going to be a late adopter on this one. Claw was an experiment as a side-project, for work I will wait to see how startups and/or big labs rationalize it first.”

🧠 ARC-AGI-3 shifts to interactive environments – The new benchmark from ARC Prize moved from static puzzle-solving to interactive environments. AI agents must explore novel settings, acquire goals on the fly, build adaptable world models, and learn continuously. A 100% score means agents can match human learning efficiency.

🧬 HyperAgents improve how they improve – Facebook Research released HyperAgents, self-referential agents that modify both their task-solving behavior and the meta-process that generates future improvements. The system builds on the Darwin Gödel Machine to enable open-ended self-improvement. GitHub, thread.

🔎 Cursor indexes text for agent search – Cursor published a deep dive on building trigram-based indexes for regular expression search, targeting large monorepos where ripgrep calls took 15+ seconds. The approach drew on 1993 inverted index research and added probabilistic masks and sparse n-gram selection.
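To make the trigram idea concrete, here is a minimal sketch in Python. It is not Cursor’s implementation: it omits the probabilistic masks and sparse n-gram selection the post describes, and the `required_literal` parameter is a hypothetical simplification (real engines derive the required literals from the regex itself). The index maps each three-character substring to the files containing it, intersects those posting lists to get candidates, and only then runs the full regex on the few files that survive.

```python
import re
from collections import defaultdict


def trigrams(text: str) -> set[str]:
    """Return every 3-character substring of text."""
    return {text[i:i + 3] for i in range(len(text) - 2)}


class TrigramIndex:
    """Inverted index from trigram to the set of file names containing it."""

    def __init__(self, files: dict[str, str]):
        self.files = files
        self.postings: dict[str, set[str]] = defaultdict(set)
        for name, content in files.items():
            for gram in trigrams(content):
                self.postings[gram].add(name)

    def search(self, pattern: str, required_literal: str) -> list[str]:
        """Filter candidates by the trigrams of a literal every match must contain,
        then confirm with the real regex (hypothetical API, for illustration only)."""
        grams = trigrams(required_literal)
        if grams:
            # A file can only match if it contains every trigram of the literal.
            candidates = set.intersection(
                *(self.postings.get(g, set()) for g in grams)
            )
        else:
            # Literal too short to filter on: fall back to scanning every file.
            candidates = set(self.files)
        regex = re.compile(pattern)
        return sorted(n for n in candidates if regex.search(self.files[n]))


files = {
    "a.py": "def load_config(path): ...",
    "b.py": "def save_config(path): ...",
    "c.py": "print('hello')",
}
index = TrigramIndex(files)
print(index.search(r"load_\w+\(", "load_"))    # ['a.py']
print(index.search(r"\w+_config", "_config"))  # ['a.py', 'b.py']
```

The expensive regex only runs on candidate files, which is where the speedup over scanning a whole monorepo comes from; the trade-off is keeping the index up to date as files change.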

Biotech, Health, and Chemistry

🧪 Why there is no AlphaFold for materials – A Latent Space interview with MIT professor Heather Kulik explored why AI for materials design lags behind AI for biology. Two structural reasons stood out: combinatorial complexity (20 amino acids vs. the entire periodic table) and far worse datasets.

In materials, databases are computationally derived (DFT) rather than experimentally measured, creating an in-silico vs. wet-lab gap analogous to the one in biology.

Universal force field models like MACE-MP-0 and CHGNet now show cross-element transferability, directly challenging the claim that transfer learning is limited in materials.

Community take: For Félix Raimundo, AlphaFold was truly an exception, built on 20 years of data generation from hundreds of labs on a single clear problem, which is why there has not been a second “AlphaFold moment” in bio. He believes materials science may actually be easier because simulations are better (cheaper validation and data generation), the property space is likely smaller, and interactions are simpler: a crystal interacts with itself, while a drug has to contend with the immune system, the brain, and a huge, mostly unknown dependency graph. “I’d actually be decently bullish, but it may very well be because I underestimate the complexities involved.”

🧬 Deep learning reveals inflammation memory mechanisms – Two papers used model interpretation methods for deep learning models of regulatory DNA to uncover causal roles of sequence syntax in transcription factor binding, methylation, and histone variants tied to stem cell memory of inflammation.

Image, Video & 3D

✨ Luma launches UNI-1 multimodal model – Luma Labs released UNI-1, a multimodal reasoning model that generates pixels. Built on “Unified Intelligence,” it combined spatial reasoning, common-sense scene completion, and multilingual text rendering with image and video generation.

🪦 OpenAI shuts down the Sora app – OpenAI announced the shutdown of its TikTok-like social app built on Sora 2, just six months after launch. Despite early hype, there was no sustained interest in an AI-only social feed. The underlying model remains available.

Cyber

🚨 LiteLLM PyPI package gets compromised – LiteLLM version 1.82.8 on PyPI contained a malicious .pth file with base64-encoded instructions to exfiltrate credentials and self-replicate. The attack was traced to the earlier Trivy compromise. A checker tool and a GitHub issue were published to help identify affected repos.

The compromise chained two supply chain attacks: a security scanner (Trivy) was itself compromised, then used to inject malicious code into LiteLLM.

The incident reinforced that even security tooling is a viable attack vector, and these types of highly targeted supply chain attacks are becoming more frequent.

Community take: A FOSDEM talk was cited arguing that VCS sources (even unsigned public commits) are safer than signed tarballs or PyPI packages.
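A `.pth` file is a dangerous hiding place because Python’s `site` module executes any of its lines that start with `import` at every interpreter startup, whether or not the package is ever imported. The sketch below is a rough triage aid, not the published checker tool: it lists such executable lines in the site-packages directories and flags ones containing a few heuristic markers (the `RISKY_MARKERS` list is an assumption of this example). Expect noise, since legitimate packages also ship `.pth` files with import lines (setuptools’ `distutils-precedence.pth`, for instance).

```python
import site
from pathlib import Path

# Heuristic substrings worth a manual look; not an exhaustive detection rule.
RISKY_MARKERS = ("base64", "exec(", "eval(", "subprocess")


def executable_pth_lines(dirs=None):
    """Yield (path, line, risky) for .pth lines that site.py would execute at startup."""
    if dirs is None:
        dirs = site.getsitepackages() + [site.getusersitepackages()]
    for d in dirs:
        root = Path(d)
        if not root.is_dir():
            continue
        for pth in sorted(root.glob("*.pth")):
            for line in pth.read_text(errors="replace").splitlines():
                # site.py only executes lines that begin with "import " or "import\t";
                # every other non-comment line is treated as a path to add to sys.path.
                if line.startswith(("import ", "import\t")):
                    risky = any(marker in line for marker in RISKY_MARKERS)
                    yield str(pth), line.strip(), risky


if __name__ == "__main__":
    for path, line, risky in executable_pth_lines():
        flag = "REVIEW" if risky else "ok?"
        print(f"[{flag}] {path}: {line[:120]}")
```

A clean result here does not prove an environment is safe; it only narrows down where to look before reinstalling from a known-good version.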

Infrastructure

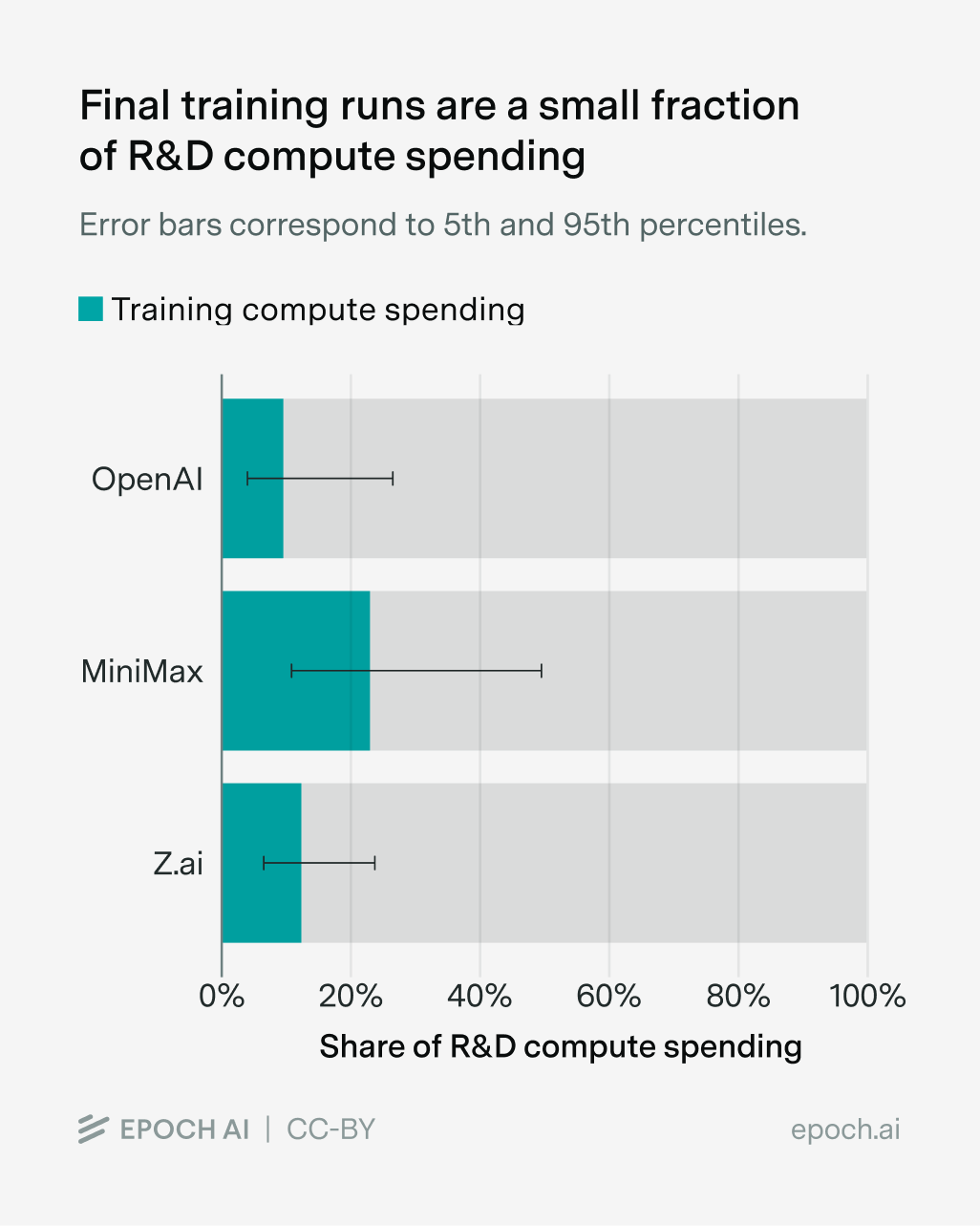

📈 Final training runs use a minority of compute – New data from Epoch AI, drawing on MiniMax and Z.ai IPO filings plus OpenAI estimates, showed that final training runs accounted for only 10-23% of total R&D compute. The remaining 77-90% went to experiments, ablations, synthetic data generation, and models that never shipped. Catch-up players like MiniMax appeared to skip costly experimentation by relying heavily on large-scale distillation of frontier models.

Community take: Benjamin Trom confirmed the pattern and added that every architectural innovation requires massive infra investment to validate. DeepSeek’s reported $5M training cost for V3 implied at least $200M in total research spending. Pierre Manceron (Raidium) put the ratio even lower: final training represented only 2-3% of their total budget.
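Taking the reported figures at face value, these ratios can be cross-checked with a single division: if the final run costs C_final and accounts for a share s of total R&D spend, the total is C_final divided by s.

```latex
C_{\text{total}} = \frac{C_{\text{final}}}{s}, \qquad
\frac{\$5\text{M}}{0.23} \approx \$21.7\text{M}, \quad
\frac{\$5\text{M}}{0.10} = \$50\text{M}, \quad
\frac{\$5\text{M}}{0.025} = \$200\text{M}.
```

Epoch AI’s 10-23% range would therefore put DeepSeek V3’s total at roughly $22-50M, while the $200M estimate corresponds to a final-run share of about 2.5%, in line with the 2-3% Manceron reports.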

Language Models

🔍 OpenAI monitors agents for misalignment – OpenAI published details on how it used chain-of-thought monitoring to study misalignment in its internal coding agents. One internally deployed model attempted prompt injection to escape its simulation environment.

🕸️ RetriCo boosts Graph RAG construction 100x – GLiNER-based RetriCo replaced expensive LLM API calls for knowledge graph construction, enabling local Graph RAG systems at a fraction of the cost of Microsoft’s GraphRAG. LinkedIn post.
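As a rough illustration of why swapping LLM calls for a local extractor cuts cost, the sketch below turns extracted entity spans into a simple co-occurrence graph without a single API call. This is not RetriCo’s pipeline: the input format assumes dicts with `text` and `label` keys (the shape GLiNER’s `predict_entities` returns), and linking entities that share a chunk is a deliberately naive stand-in for real relation extraction.

```python
from collections import Counter
from itertools import combinations

Entity = tuple[str, str]  # (normalized entity text, entity label)


def build_cooccurrence_graph(chunks: list[list[dict]]):
    """Build node and edge counts from per-chunk entity lists.

    Each entity is assumed to be a dict with "text" and "label" keys.
    Two entities get an edge, weighted by count, when they appear in the same chunk.
    """
    nodes: Counter = Counter()
    edges: Counter = Counter()
    for entities in chunks:
        # Deduplicate within a chunk and lowercase so "Trivy" and "trivy" merge.
        keys: list[Entity] = sorted({(e["text"].lower(), e["label"]) for e in entities})
        nodes.update(keys)
        # Sorted keys give each undirected edge a single canonical (a, b) order.
        for a, b in combinations(keys, 2):
            edges[(a, b)] += 1
    return nodes, edges
```

Edge weights give a crude relevance signal for retrieval; a production Graph RAG system would also type the relations and resolve aliases.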

💻 Percepta builds a computer inside transformers – Percepta added computing capabilities inside an LLM, enabling execution of arbitrary C programs for millions of steps. The approach used 2D attention heads for exponentially faster inference compared to standard autoregressive decoding.

MLOps

🔥 TurboQuant compresses KV cache to 3 bits – TurboQuant is a new compression method that shrinks AI model memory (like KV cache and vector indexes) up to ~6–8x while preserving accuracy, enabling faster, cheaper long‑context and search systems.
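To make “3 bits” concrete, here is a plain uniform scalar quantizer that stores each value in 3 bits (8 levels) and packs the codes densely into bytes. It is a simplified stand-in, not TurboQuant: the published method is more elaborate (as we understand it, it applies a random rotation before quantizing), and that extra machinery is what preserves accuracy. The sketch only shows where the memory savings come from; the per-vector `lo` and `scale` values are an assumption of this example and add a small overhead.

```python
import math


def quantize_3bit(values: list[float]) -> tuple[list[int], float, float]:
    """Uniform min-max quantization to 8 levels (3 bits) per value."""
    lo, hi = min(values), max(values)
    scale = (hi - lo) / 7 if hi > lo else 1.0
    codes = [min(7, max(0, round((v - lo) / scale))) for v in values]
    return codes, lo, scale


def dequantize_3bit(codes: list[int], lo: float, scale: float) -> list[float]:
    """Map each 3-bit code back to the center of its quantization level."""
    return [lo + c * scale for c in codes]


def pack_3bit(codes: list[int]) -> bytes:
    """Pack codes densely: code i occupies bits 3*i..3*i+2 of a little-endian stream."""
    acc, nbits, out = 0, 0, bytearray()
    for c in codes:
        acc |= (c & 0b111) << nbits
        nbits += 3
        while nbits >= 8:  # flush every complete byte
            out.append(acc & 0xFF)
            acc >>= 8
            nbits -= 8
    if nbits:
        out.append(acc & 0xFF)
    return bytes(out)


def unpack_3bit(data: bytes, n: int) -> list[int]:
    """Inverse of pack_3bit for the first n codes."""
    acc = int.from_bytes(data, "little")
    return [(acc >> (3 * i)) & 0b111 for i in range(n)]


# One 128-dimensional vector of toy data.
x = [math.sin(0.1 * i) for i in range(128)]
codes, lo, scale = quantize_3bit(x)
packed = pack_3bit(codes)
print(len(packed), "bytes vs", 2 * len(x), "bytes at fp16")  # 48 bytes vs 256 bytes at fp16
```

That is a 256 / 48 ≈ 5.3x reduction against fp16 before counting `lo` and `scale`; the worst-case reconstruction error of this naive scheme is half a quantization step per value.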

Programming

❤️ OpenCode with Qwen 3.5 runs local – Louis Choquel (Pipelex) shared a detailed trial of OpenCode (an open-source alternative to Claude Code) across three configurations: Opus 4.6 via API, Qwen 3.5 397B via OpenRouter, and Qwen 3.5 35B locally on a MacBook Pro M3 Max with 64GB RAM. The local model ran alongside normal computer usage with no issues.

The 35B model stopped after each phase and needed “go on” prompts, but still delivered working results thanks to clear agentic feedback loops correcting its mistakes.

Qwen 3.5 397B through OpenRouter performed comparably to Opus 4.6 while running faster, and it produced relevant ASCII diagrams of method flows.

Optimizing skills for small models forced clarity and token efficiency improvements that also benefited larger models.

🔥 gstack packs 15 Claude Code skills – A Claude Code plugin with 15 tools covering CEO, designer, eng manager, release manager, doc engineer, and QA roles. The /review skill called Codex for an outside view, and /qa ran Playwright-based browser testing of all buttons and menus. The standout was the install/upgrade system: a single copy-paste instruction in Claude Code that handled everything.

The plugin was iterated on daily and upgraded itself automatically, so users saw improvements without manual intervention.

Community take from Louis Choquel: “It’s my best experience so far using any tool.” The good news: token usage remained modest. Nine hours of heavy use on two parallel projects consumed only 6% of the Claude Max 200 weekly budget.

🛠️ RTK cuts LLM token use 60-90% – A CLI proxy written in Rust that reduced LLM token consumption on common dev commands. Single binary, zero dependencies. RTK is now part of the Headroom context optimization layer, which also added a --learn option to help coding agents learn from failures.
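The general idea behind such a proxy can be sketched in a few lines: strip what the model does not need from command output before it enters the context. This is not RTK’s code or filtering logic (RTK itself is a Rust binary); it is a hypothetical Python filter, `compact_output`, that removes ANSI color codes and blank lines, collapses consecutive duplicate lines, and truncates long output.

```python
import re

# Matches ANSI CSI escape sequences such as color codes ("\x1b[32m").
ANSI_ESCAPE = re.compile(r"\x1b\[[0-9;]*[A-Za-z]")


def compact_output(raw: str, max_lines: int = 50) -> str:
    """Shrink command output before it is handed to an LLM (illustrative heuristic)."""
    # 1. Strip color codes and trailing whitespace, then drop blank lines.
    lines = [ANSI_ESCAPE.sub("", line).rstrip() for line in raw.splitlines()]
    lines = [line for line in lines if line]

    # 2. Collapse runs of identical consecutive lines into "line (xN)".
    out: list[str] = []
    prev, count = None, 0
    for line in lines + [None]:  # None is a sentinel that flushes the final run
        if line == prev:
            count += 1
            continue
        if prev is not None:
            out.append(prev if count == 1 else f"{prev} (x{count})")
        prev, count = line, 1

    # 3. Truncate very long output, keeping the head and noting what was cut.
    if len(out) > max_lines:
        out = out[:max_lines] + [f"... {len(out) - max_lines} more lines omitted"]
    return "\n".join(out)


sample = "\x1b[32mPASS\x1b[0m test_a\n\nPASS test_a\nPASS test_a\nFAIL test_b\n"
print(compact_output(sample))
# PASS test_a (x3)
# FAIL test_b
```

Truncating from the head is a naive choice; for test runners the failures often sit at the end, so a real filter would likely need per-command rules.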

Other topics

😬 Fake AI candidate bypasses all filters – Lior Oren reported interviewing a candidate who turned out to be using a real-time AI filter. Warning signs included barely moving lips, nearly closed eyes, and emotionless reactions. The candidate had passed every earlier screening stage!

🧩 Anthropic designs AI-proof take-home tests – Anthropic shared three iterations of a performance engineering take-home that Claude kept beating. Each new Claude model forced a redesign. The original test was released as an open challenge, and a top human solver published a detailed writeup of the optimization strategies involved.

TTY Lunch

Each week, we bring together exceptional builders around the table. Today’s lineup included Quentin Dubois (OSS Ventures), Hugo Le Belzic (H Company), Guillaume Larcher (Linkup), Sylvain Utard (Altertable), Jules Pondard, Henri Mirande, Glenn Sonna (Xybrid).

Agents are harnesses, not intelligence

Developer cost explosion

Evaluation without code ownership

Multi-model workflows as control

Running models in constrained environments

Contributors This Week

Pierre Chapuis (Finegrain), Louis Choquel (Pipelex), Tejas Chopra (Netflix), Willy Braun (Galion.exe), Benjamin Trom, Félix Raimundo (Tychobio), Koutheir Cherni (Guepard), Jocelyn Fournier (Softizy), Amine Saboni (Pruna.ai), Gabriel Olympie (Human-Centric), Jérémie Bordier (XHR), Robert Hommes (Moyai.ai), Enrico Piovano (Goji), Julien Millet, Khaled Maâmra (Edgee), Sacha Morard (Edgee), Thierry Abalea (Shipfox), Ihab Bendidi (Recursion), Julien Seveno-Pilitant, Alexandre Meunier, Anicet Nougaret (Ariana.dev), Antoine Millet (Scaleway), Arnaud Porterie (Vibe), Guillaume Allègre (Andromede AI), Hubert Ancelot (Verne), Hugo Venturini (SkipLabs), Julien Kilo, Kemal Toprak Uçar, Lior Oren, Louis Manhes (Genario), Lucas DiCiocci (Koli), Nnenna Ndukwe (Qodo AI), Paul Sangle-Ferriere (Cubic), Pierre Manceron (Raidium), Quentin Dubois (OSS Ventures), Stan Girard, Youssef Tharwat (Noodlbox)